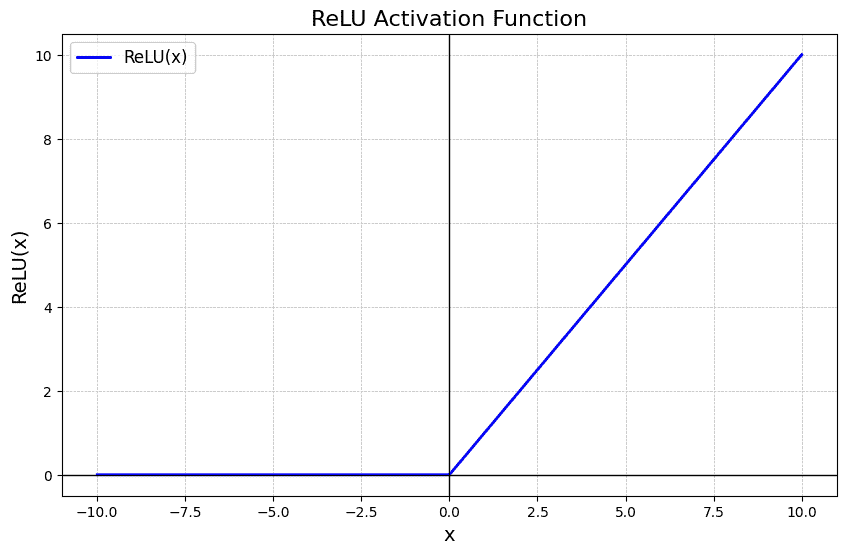

ReLU is a widely used activation function in deep learning that outputs the input directly if it is positive and returns zero otherwise. Its simplicity and efficiency make it a default choice in many neural network architectures, helping models learn complex patterns while reducing issues like the vanishing gradient problem.

- Allows positive values to pass unchanged and sets negative values to zero.

- Simple and computationally efficient activation function.

- Helps maintain non-linearity in neural networks.

- Reduces the vanishing gradient problem compared to older functions.

Mathematical Form

The ReLU function can be described mathematically as follows:

Where:

- x is the input to the neuron.

- The function returns x if x is greater than 0.

- If x is less than or equal to 0, the function returns 0.

The formula can also be written as:

Why is ReLU Popular?

- Simplicity: ReLU is computationally efficient as it involves only a thresholding operation. This simplicity makes it easy to implement and compute, which is important when training deep neural networks with millions of parameters.

- Non-Linearity: Although it seems like a piecewise linear function, ReLU is still a non-linear function. This allows the model to learn more complex data patterns and model intricate relationships between features.

Q: Why did the ReLU activation function break up with its partner?

Answer: Because it just couldn’t handle the negative energy!

- Sparse Activation: ReLU's ability to output zero for negative inputs introduces sparsity in the network, meaning that only a fraction of neurons activate at any given time. This can lead to more efficient and faster computation.

- Gradient Computation: ReLU offers computational advantages in terms of backpropagation, as its derivative is simple—either 0 (when the input is negative) or 1 (when the input is positive). This helps to avoid the vanishing gradient problem, which is a common issue with sigmoid or tanh activation functions.

ReLU vs. Other Activation Functions

Activation Function | Formula | Output Range | Advantages | Disadvantages | Use Case |

|---|---|---|---|---|---|

ReLU |

|

| Hidden layers in deep networks | ||

Leaky ReLU |

| Requires manual tuning of | Hidden layers (ReLU alternative) | ||

PReLU | Same as Leaky ReLU (learns |

|

| Deep networks where ReLU fails | |

Sigmoid | (0, 1) |

|

| Output layer (binary classification) | |

Tanh | (-1, 1) |

| Vanishing gradient | Hidden layers (normalized data) | |

ELU |

| Slower computation than ReLU | Deep networks | ||

Softmax | f(x_i) = \frac{e^{x_i}}{\sum_{j} e^{x_j}} | (0, 1) (for each class) |

|

| Output layer (multiclass classification) |

Drawbacks of ReLU

While ReLU has many advantages, it also comes with its own set of challenges:

- Dying ReLU Problem: One of the most significant drawbacks of ReLU is the "dying ReLU" problem, where neurons can sometimes become inactive and only output 0. This happens when large negative inputs result in zero gradient, leading to neurons that never activate and cannot learn further.

- Unbounded Output: Unlike other activation functions like sigmoid or tanh, the ReLU activation is unbounded on the positive side, which can sometimes result in exploding gradients when training deep networks.

- Noisy Gradients: The gradient of ReLU can be unstable during training, especially when weights are not properly initialized. In some cases, this can slow down learning or lead to poor performance.

Variants of ReLU

To mitigate some of the problems associated with the ReLU function, several variants have been introduced:

1. Leaky ReLU

Leaky ReLU introduces a small slope for negative values instead of outputting zero, which helps keep neurons from "dying."

where

2. Parametric ReLU

Parametric ReLU (PReLU) is an extension of Leaky ReLU, where the slope of the negative part is learned during training. The formula is as follows:

Where:

x is the input.\alpha is the learned parameter that controls the slope for negative inputs. Unlike Leaky ReLU, where\alpha is a fixed value (e.g., 0.01), PReLU learns the value of α\alphaα during training.

In PReLU,

3. Exponential Linear Unit (ELU)

Exponential Linear Unit (ELU) adds smoothness by introducing a non-zero slope for negative values, which reduces the bias shift. It’s known for faster convergence in some models.

The formula for Exponential Linear Unit (ELU) is:

Where:

x is the input.\alpha is a positive constant that defines the value for negative inputs (often set to 1).- For

x \geq 0 , the output is simply x (same as ReLU). - For

x < 0 , the output is an exponential function of x, shifted by 1 and scaled by\alpha .

When to Use ReLU?

- Handling Sparse Data: ReLU helps with sparse data by zeroing out negative values, promoting sparsity and reducing overfitting.

- Faster Convergence: ReLU accelerates training by preventing saturation for positive inputs, enhancing gradient flow in deep networks.

But, in cases where your model suffers from the "dying ReLU" problem or unstable gradients, trying alternative functions like Leaky ReLU, PReLU, or ELU could yield better results.

ReLU Activation in PyTorch

The following code defines a simple neural network in PyTorch with two fully connected layers, applying the ReLU activation function between them, and processes a batch of 32 input samples with 784 features, returning an output of shape [32, 10].

import torch

import torch.nn as nn

class SimpleNeuralNetwork(nn.Module):

def __init__(self):

super(SimpleNeuralNetwork, self).__init__()

self.fc1 = nn.Linear(784, 128)

self.relu = nn.ReLU()

self.fc2 = nn.Linear(128, 10)

def forward(self, x):

x = self.fc1(x)

x = self.relu(x)

x = self.fc2(x)

return x

model = SimpleNeuralNetwork()

input_tensor = torch.randn(32, 784)

output = model(input_tensor)

print(output.shape)

Output:

torch.Size([32, 10])The ReLU activation function has revolutionized deep learning models, helping networks converge faster and perform better in practice. While it has some limitations, its simplicity, sparsity, and ability to handle the vanishing gradient problem make it a powerful tool for building efficient neural networks. Understanding ReLU’s strengths and limitations, as well as its variants, will help you design better deep learning models tailored to your specific needs.