Bayesian Additive Regression Trees (BART), proposed by Chipman et al. (2010) (Reference 1), is a Bayesian “sum-of-trees” model where we use the sum of trees to model or approximate the target function , where

is the response of interest and

represents the covariates that we are using to predict

. (If there are

covariates, then

is a

-dimensional vector.) It is inspired by ensemble methods such as boosting, where a meta algorithm combines a large number of “weak learners”/”weak predictors” to get a much more accurate prediction.

As a Bayesian method, all we have to do is to specify the prior distribution for the parameters and the likelihood. From there, we can turn the proverbial Bayesian crank to get the posterior distribution of parameters and with it, posterior inference of any quantity we are interested in (e.g. point and estimate estimates of ).

Likelihood

The likelihood is easy to specify once we get definitions out of the way. Let denote a binary decision tree. Assuming the tree has

terminal nodes, let

be the set of parameter values associated with each of the terminal nodes such that if the

value ends up in the

th terminal node, the tree would return the value

. We can think of

as representing the structure of the tree, and of

as specifying the value we return to the user once the input hits one of the terminal nodes.

For a given and

, let

denote the function which assigns

to

if

ends up in the

th terminal node.

The likelihood for BART is

The response is the sum of binary decision trees with additive Gaussian noise.

Prior specification: General comments

We need to choose priors for ,

, and

.

Chipman et al. actually decided not to put a prior on for computational reasons. Instead, they suggested beginning with a default of

, then check if one or two other choices of

makes any difference. According to them, as

increases, predictive performance improves dramatically at first, then levels off, and finally degrades slowly. (I believe this is the observed behavior with boosting as well.) As such, for prediction it appears only important to avoid having too few trees.

As for the other parameters, we introduce independence assumptions to simplify the prior specification. If represents the terminal value of the

th node in

, then we assume that

and that

To complete the prior specification, we just need to specify the priors for ,

and

. We do so in the following subsections. The paper notes that these priors were also used in an earlier paper by the same authors (Reference 2) where they considered a Bayesian model for a single decision tree.

The prior

For BART uses a standard conjugate prior, the inverse chi-square distribution

where and

are the degrees of freedom and scale hyperparameters. Chipman et al. recommend choosing these two hyperparameters in a data-driven way:

- Get some estimate

of

(e.g. sample standard deviation of

, or fit ordinary least squares (OLS) of

on

and take the residual standard deviation).

- Pick a value of

between 3 and 10 to get an appropriate shape.

- Pick a value of

so that the

th quantile of the prior on

is

, i.e.

. Chipman et al. recommend considering

,

or

.

For users who don’t want to choose and

, the authors recommend defaults of

and

.

The prior

The prior for a tree can be specified with 3 ingredients:

- The probability that a node at depth

(the root node having depth 0) is non-terminal.

- If a node is non-terminal, the probability that the

th covariate (out of

covariates) is the splitting variable for this node.

- Once the splitting variable for a node has been chosen, a probability over the possible cut-points for this variable.

Chipman et al. suggest the following:

, where

and

are hyperparameters. Authors suggest

and

to favor small trees. With this choice, trees with 1, 2, 3, 4 and

terminal nodes have prior probability of 0.05, 0.55, 0.28, 0.09 and 0.03 respectively.

- Chipman et al. suggest the uniform distribution over the

covariates.

- Given the splitting variable, Chipman et al. suggest the uniform prior on the discrete set of available splitting values for this variable.

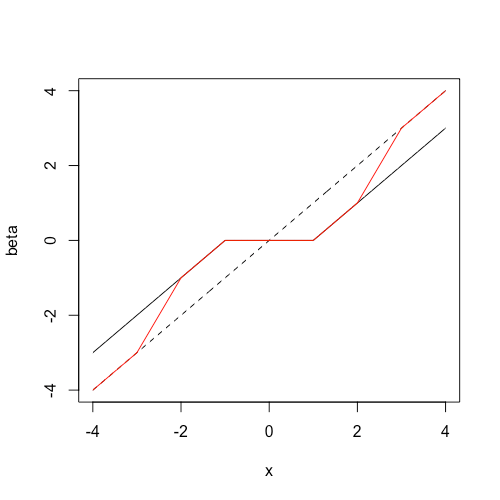

The prior

For computational efficiency, Chipman et al. suggest using the conjugate normal distribution

where and

are hyperparameters. As with the hyperparameters associated with the

prior, the authors suggest setting them in a data-driven way. The way we do this is by ensuring that

is in the right ballpark. With the prior above,

has the prior

. Letting

and

be the min and max observed values of

, choose

and

such that

If we choose , then this choice of hyperparameters means that the prior probability that

is 0.95.

Other notes

In theory, once we define a prior and likelihood we have a posterior. The practical question is whether we can derive this posterior or sample from it efficiently. Section 3 of the paper outlines a Bayesian backfitting MCMC algorithm that allows us to sample from the posterior distribution.

The set-up above applies for quantitative . For binary

, the paper develops a probit model along similar lines in Section 4.

References:

- Chipman, H. A., George, E. I., and McCulloch, R. E. (2010). BART: Bayesian additive regression trees.

- Chipman, H. A., George, E. I., and McCulloch, R. E. (1998). Bayesian CART model search.