Adam (short for adaptive moment estimation) was proposed by Kingma & Ba (2014) (Reference 1) as a first-order gradient-based optimization method that combines the advantages of AdaGrad (Duchi et al. 2011, Reference 2), which deals well with sparse gradients, and RMSProp (Tieleman & Hinton 2012, Reference 3), which deals well with non-stationary objectives. Although no longer new, it’s still widely used as a baseline against which newer algorithms are compared.

According to the authors,

“Some of Adam’s advantages are that the magnitudes of parameter updates are invariant to rescaling of the gradient, its stepsizes are approximately bounded by the stepsize hyperparameter, it does not require a stationary objective, it works with sparse gradients, and it naturally performs a form of step size annealing.”

Notation

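- $f(\theta)$ is a noisy objective function that is differentiable w.r.t. its parameters $\theta$. We want to minimize $\mathbb{E}[f(\theta)]$.

- $f_1(\theta), \dots, f_T(\theta)$ are stochastic realizations of $f$ at timesteps $1, \dots, T$.

- Let $g_t = \nabla_\theta f_t(\theta)$ denote the gradient of $f_t$ w.r.t. $\theta$.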

Adam

At each timestep , Adam keeps track of

, an estimate of the first moment of the gradient

, and

, an estimate of the second raw moment (uncentered variance) of the gradient

(

represents element-wise multiplication). The Adam update is

where and

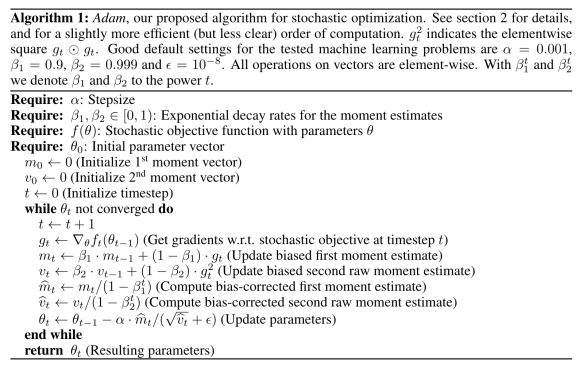

are hyperparameters. The full description of Adam is in the Algorithm below:
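To make the update concrete, here is a minimal NumPy sketch of one Adam step. It assumes the moment recursions and bias corrections of Algorithm 1 in Reference 1, with that paper's suggested defaults (stepsize 0.001, decay rates 0.9 and 0.999, epsilon 1e-8). The function name `adam_step` and the toy quadratic objective are illustrative choices, not part of the paper.

```python
import numpy as np

def adam_step(theta, g, m, v, t, alpha=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update. t is the 1-based timestep; m and v start at zero."""
    m = beta1 * m + (1 - beta1) * g        # biased first moment estimate
    v = beta2 * v + (1 - beta2) * g * g    # biased second raw moment estimate
    m_hat = m / (1 - beta1 ** t)           # bias-corrected first moment
    v_hat = v / (1 - beta2 ** t)           # bias-corrected second raw moment
    theta = theta - alpha * m_hat / (np.sqrt(v_hat) + eps)
    return theta, m, v

# On the very first step m_hat / sqrt(v_hat) is roughly sign(g), so every
# coordinate moves by about alpha regardless of the gradient's scale.
first_theta, _, _ = adam_step(
    np.zeros(2), np.array([0.5, -3.0]), np.zeros(2), np.zeros(2), t=1
)

# Toy noisy objective (an assumption for illustration): the gradient of
# 0.5 * ||theta - target||^2 plus Gaussian noise.
rng = np.random.default_rng(0)
target = np.array([1.0, -2.0])
theta, m, v = np.zeros(2), np.zeros(2), np.zeros(2)
for t in range(1, 5001):
    g = (theta - target) + 0.1 * rng.standard_normal(2)
    theta, m, v = adam_step(theta, g, m, v, t, alpha=0.01)
```

The first call illustrates two of the advantages quoted earlier: the step is invariant to rescaling of the gradient, and its size is approximately bounded by the stepsize hyperparameter.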

Comparison with other methods

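In comparison with Adam, the vanilla SGD update is

$$\theta_t \leftarrow \theta_{t-1} - \alpha g_t,$$

and the classical momentum-based SGD update can be written as

$$m_t \leftarrow \mu m_{t-1} + g_t, \qquad \theta_t \leftarrow \theta_{t-1} - \alpha m_t,$$

where $\mu \in [0, 1)$ is the momentum coefficient.

The basic version of AdaGrad has update rule

$$\theta_t \leftarrow \theta_{t-1} - \alpha \cdot \frac{g_t}{\sqrt{\sum_{i=1}^t g_i \odot g_i}}.$$

AdaGrad corresponds to Adam with $\beta_1 = 0$, infinitesimal $1 - \beta_2$ (where $\beta_1$ and $\beta_2$ are the exponential decay rates of Adam’s first and second moment estimates), and replacing $\alpha$ with $\alpha_t = \alpha \cdot t^{-1/2}$ (see Section 5 of Reference 1 for a few more details).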
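The correspondence between AdaGrad and Adam can be checked numerically. The sketch below is only an illustration under stated assumptions: the momentum form (accumulate the gradient, then scale by the stepsize) is one common convention, epsilon is omitted from both AdaGrad and Adam as in the basic AdaGrad rule, "infinitesimal" is approximated by a decay rate of `1 - 1e-8`, and the gradient sequence is random data made up for the comparison.

```python
import numpy as np

def sgd_step(theta, g, alpha):
    return theta - alpha * g

def momentum_step(theta, g, m, alpha, mu=0.9):
    m = mu * m + g                          # accumulate past gradients
    return theta - alpha * m, m

def adagrad_step(theta, g, s, alpha):
    s = s + g * g                           # running sum of squared gradients
    return theta - alpha * g / np.sqrt(s), s

def adam_step(theta, g, m, v, t, alpha, beta1, beta2):
    # Adam without epsilon, to match the basic AdaGrad rule above.
    m = beta1 * m + (1 - beta1) * g
    v = beta2 * v + (1 - beta2) * g * g
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    return theta - alpha * m_hat / np.sqrt(v_hat), m, v

rng = np.random.default_rng(0)
grads = rng.standard_normal((10, 3))        # fixed, made-up gradient sequence
alpha = 0.1

theta_ada, s = np.zeros(3), np.zeros(3)
theta_adam, m, v = np.zeros(3), np.zeros(3), np.zeros(3)
for t, g in enumerate(grads, start=1):
    theta_ada, s = adagrad_step(theta_ada, g, s, alpha)
    # beta1 = 0, beta2 close to 1, and annealed stepsize alpha * t^(-1/2)
    theta_adam, m, v = adam_step(
        theta_adam, g, m, v, t, alpha / np.sqrt(t), beta1=0.0, beta2=1 - 1e-8
    )

max_gap = np.max(np.abs(theta_ada - theta_adam))  # tiny: the two paths agree
```

With the decay rate this close to 1, the bias-corrected second moment estimate is essentially the average of the squared gradients so far, and the extra factor of $t^{-1/2}$ in the stepsize turns that average into AdaGrad's sum.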

AdamW

AdamW, proposed by Loshchilov & Hutter (2019) (Reference 4), is a simple modification of Adam which “improves regularization in Adam by decoupling the weight decay from the gradient-based update”.

The original weight decay update was of the form

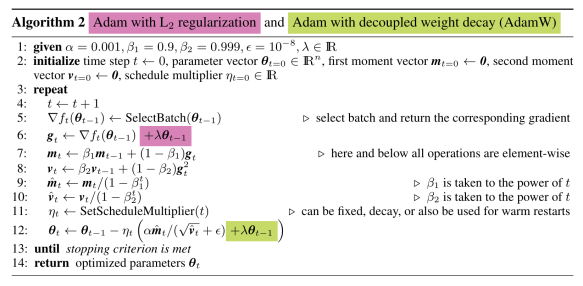

where defines the rate of weight decay per step. Loshchilov & Hutter (2019) note that for standard SGD, this weight decay is equivalent to L2 regularization (Proposition 1 of Reference 4), but the two are not equivalent for the Adam update. They present Adam with L2 regularization and Adam with decoupled weight decay (AdamW) together in Algorithm 2 of the paper:

Overall, the authors found that AdamW performed better than Adam with L2 regularization.
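The difference between the two variants is easiest to see in code. The following is a rough sketch based on Algorithm 2 of Reference 4, assuming the schedule multiplier in that algorithm is fixed at 1. The function name `adam_like_step` and the `decoupled` flag are hypothetical, introduced here only for illustration.

```python
import numpy as np

def adam_like_step(theta, grad, m, v, t, lam, decoupled,
                   alpha=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One step of Adam with L2 regularization or of AdamW (decoupled=True)."""
    # L2 regularization: the decay term is folded into the gradient, so it
    # is rescaled by the adaptive denominator like everything else.
    g = grad if decoupled else grad + lam * theta
    m = beta1 * m + (1 - beta1) * g
    v = beta2 * v + (1 - beta2) * g * g
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    step = alpha * m_hat / (np.sqrt(v_hat) + eps)
    if decoupled:
        # AdamW: the decay acts on the weights directly, outside the
        # adaptive rescaling.
        step = step + lam * theta
    return theta - step, m, v

# With a zero loss gradient, only the decay term moves the weights.
theta0 = np.array([10.0, 1.0])
zero = np.zeros(2)
theta_l2, _, _ = adam_like_step(theta0, zero, zero, zero, t=1, lam=0.01, decoupled=False)
theta_w, _, _ = adam_like_step(theta0, zero, zero, zero, t=1, lam=0.01, decoupled=True)
```

In this toy case Adam with L2 regularization shrinks both weights by about the same absolute amount (roughly the stepsize), because the adaptive rescaling normalizes away the size of the decay term. AdamW shrinks each weight in proportion to its magnitude, which is what weight decay is meant to do.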

PyTorch’s implementation of AdamW is available as `torch.optim.AdamW`.

References:

- Kingma, D. P., and Ba, J. (2014). Adam: A Method for Stochastic Optimization.

- Duchi, J., et al. (2011). Adaptive Subgradient Methods for Online Learning and Stochastic Optimization.

- Tieleman, T., and Hinton, G. (2012). Lecture 6.5 – RMSProp, Coursera: Neural Networks for Machine Learning. (If someone has a link/PDF for the slides, I’d be grateful!)

- Loshchilov, I., and Hutter, F. (2019). Decoupled Weight Decay Regularization.