The field of artificial intelligence (AI) has seen explosive growth over the past two years, with its potential for future advancements appearing virtually limitless. However, with this rapid expansion comes a growing wave of challenges and risks. From AI-generated scams to deepfakes and data breaches, many people have either directly experienced or heard about the darker side of AI technology. This blog delves into the critical aspects of AI security and safety, exploring the threats posed by AI and the mechanisms we can use to prevent and mitigate them.

This blog will cover the following aspects of AI:

- AI Security and Safety and their relationship

- Technology landscape

- Key trends for the future

- Regulations

Security and Safety

AI security focuses on protecting AI systems from external attacks. For example, a hacker might use a prompt injection attack to manipulate the model into producing inappropriate outputs or leaking personally identifiable information (PII). AI safety, on the other hand, addresses the prevention of harmful uses of AI systems. An example of a safety concern is a bad actor using AI to create deepfakes for fraudulent purposes.

AI security and safety are closely interconnected, with one often influencing the other. For instance, an AI security breach such as data poisoning—where malicious actors inject harmful data into a model—can undermine the safety of an application using that model. Conversely, an AI safety issue, such as inherent bias in a model, can be exploited by hackers to carry out attacks (e.g., using the model’s bias to impersonate or favor certain groups), thereby creating security vulnerabilities.

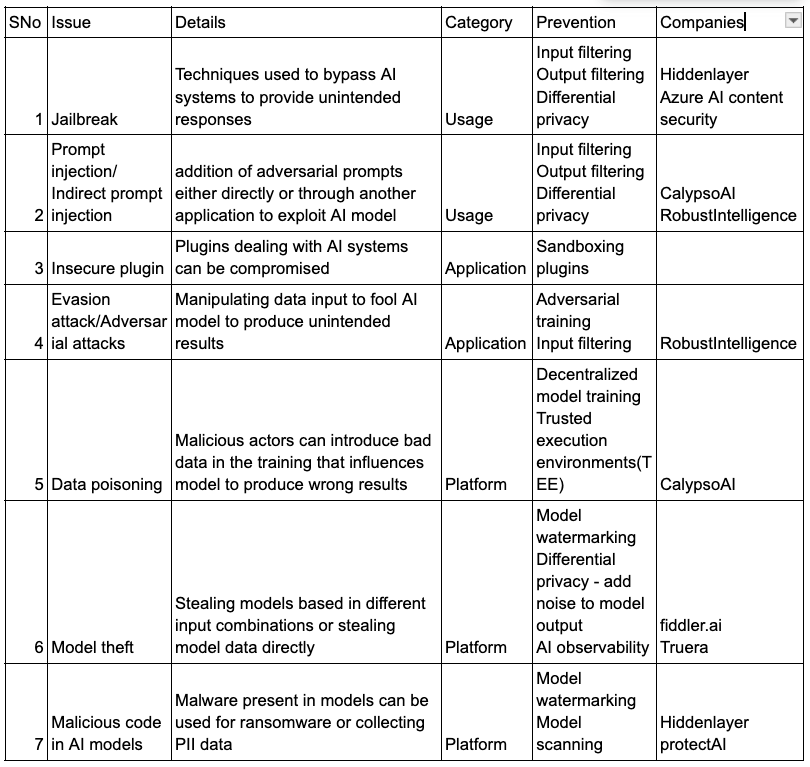

AI security summary

AI security builds upon existing cybersecurity practices, with specific enhancements tailored for AI systems. It can be categorized into three fundamental layers:

- Usage Security: This layer focuses on securing the interaction between users and AI systems. A common example is a jailbreak attack using prompt injection, where hackers craft malicious prompts to manipulate the model into generating inappropriate outputs or revealing sensitive data.

- Application Security: This layer addresses the security of AI applications, including the models themselves. Examples include indirect prompt injections or vulnerabilities in plugins that can compromise application integrity.

- Platform Security: This layer involves securing the underlying infrastructure of AI systems. For instance, in a data poisoning attack, malicious actors alter training data to manipulate model outputs. Other examples include model theft, where the intellectual property of AI models is stolen.

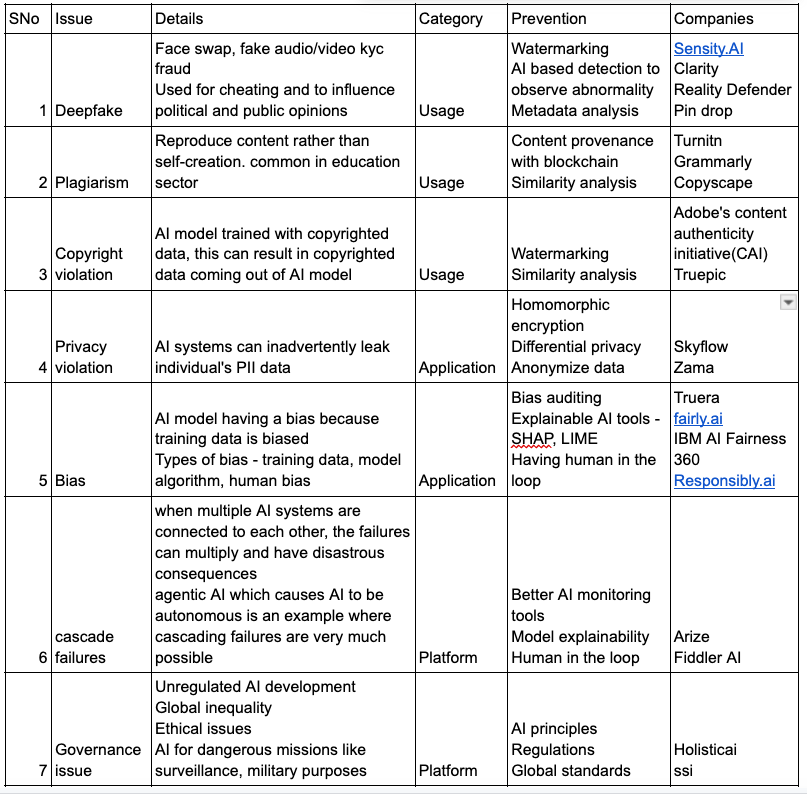

AI safety summary

While the prospect of AI surpassing human control is still a distant reality, there are several immediate AI safety concerns that must be addressed to ensure AI is used constructively rather than destructively. AI safety, like AI security, can be categorized into three layers:

- Usage Safety: This layer focuses on how AI systems are utilized by end users. Examples include deepfakes, plagiarism, and copyright violations. The proliferation of deepfakes, powered by advanced technologies like Generative Adversarial Networks (GANs), has made it increasingly difficult to distinguish between real and fabricated content, contributing to a negative perception of AI.

- Application Safety: This layer addresses safety risks associated with AI applications. Key examples include privacy infringement and bias in AI models, which can lead to discriminatory outcomes and ethical concerns.

- Platform Safety: This layer pertains to broader systemic and governance issues in AI deployment. Examples include the absence of regulatory oversight and the risk of cascade failures, where interconnected AI systems amplify small errors into significant failures.

Technologies used for AI security and safety

This is an evolving space that must adapt rapidly to keep pace with the latest AI trends.

- Usage: For AI security, techniques like input validation and filtering can help ensure that only sanitized data is fed into AI systems. For AI safety, approaches such as moderated outputs, bias auditing, explainable AI, and human-in-the-loop systems play a crucial role in ensuring responsible use.

- Application: Model watermarking is a valuable AI security measure for deterring and detecting model theft. For AI safety, widely used techniques include differential privacy, which safeguards sensitive data, and reinforcement learning from human feedback (RLHF), which aligns AI behavior with ethical standards.

- Platform: For AI security, leveraging technologies like blockchain, homomorphic encryption, and trusted execution environments (TEEs) enhances the integrity and confidentiality of AI systems. For AI safety, establishing robust governance frameworks and compliance tools is essential to mitigate risks and ensure ethical deployment.
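As a rough illustration of the input validation and filtering mentioned under Usage, the sketch below rejects prompts that are too long or that match a small deny-list. The patterns, the length limit, and the function name `validate_prompt` are hypothetical choices made for this example. A production system would typically pair checks like these with a trained classifier rather than rely on regular expressions alone.

```python
import re
from typing import Tuple

# Hypothetical deny-list: illustrative patterns only, not a complete defense.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) (system prompt|hidden instructions)", re.IGNORECASE),
]
MAX_PROMPT_CHARS = 2000  # assumed limit for this sketch


def validate_prompt(prompt: str) -> Tuple[bool, str]:
    """Return (True, cleaned_prompt) if the prompt passes, else (False, reason)."""
    if len(prompt) > MAX_PROMPT_CHARS:
        return False, "prompt too long"
    # Drop non-printable control characters that could hide instructions.
    cleaned = "".join(ch for ch in prompt if ch.isprintable() or ch in "\n\t")
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(cleaned):
            return False, "possible prompt injection"
    return True, cleaned
```

Only prompts that return `True` would be forwarded to the model. Rejected prompts can be logged for later review.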

AI systems require comprehensive monitoring and analysis to remain secure and reliable. Machine Learning Detection and Response (MLDR) uses machine learning to identify real-time threats and provide automated responses, enabling proactive and efficient risk management.

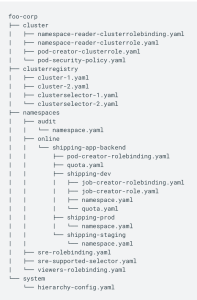

AI Security landscape

The companies shown are a sample, not an exhaustive list.

AI safety landscape

The companies shown are a sample, not an exhaustive list.

Key Trends for the Future

AI Watermarking

Watermarking is a critical technique for protecting content creators: it helps establish ownership of their digital creations and mitigates issues like deepfakes. In the context of AI, two primary techniques are used for watermarking:

- Statistical Watermarking: This method involves adding imperceptible data to AI-generated content, which can later be detected by specialized tools. For text-based models, specific word substitutions are made based on their probability. In images, certain pixel values are adjusted according to spatial or frequency domain rules. In audio, frequencies beyond human perception are added to sound files.

- Machine Learning Watermarking: Here, the AI model itself is modified to embed unique markers in its outputs, enabling easy identification of model-generated content. Examples include neural network-based watermarking and adversarial watermarking.

Challenges include resistance to tampering, ease of detection, and maintaining content quality.

Example in Action: Google SynthID embeds imperceptible watermarks into images produced by its AI models, and Gemini applies this to all GenAI outputs. Hugging Face also offers open-source AI watermarking tools. Watermarks can, however, be circumvented by users, and some users may prefer not to use a tool such as ChatGPT if its outputs are watermarked.
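To make the statistical approach for text more concrete, here is a simplified sketch of a "green list" scheme. A hash of the previous token pseudo-randomly marks about half of the vocabulary as green, the generator prefers green tokens, and a detector counts green tokens and computes a z-score. This is a toy under stated assumptions: the vocabulary, the helper names, and the hard preference for green tokens are all hypothetical. Real systems bias a language model's probabilities softly, and nothing here reflects how SynthID works internally.

```python
import hashlib
import math
import random

GAMMA = 0.5  # assumed fraction of the vocabulary treated as "green" at each step


def is_green(prev_token: str, token: str, gamma: float = GAMMA) -> bool:
    """Pseudo-randomly assign `token` to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return digest[0] / 256 < gamma


def generate(vocab, length, rng, watermark):
    """Toy generator: random candidates stand in for a model's ranked next tokens."""
    tokens = [rng.choice(vocab)]
    while len(tokens) < length:
        candidates = rng.sample(vocab, k=len(vocab))
        if watermark:
            green = [t for t in candidates if is_green(tokens[-1], t)]
            candidates = green or candidates  # fall back if no green token exists
        tokens.append(candidates[0])
    return tokens


def z_score(tokens, gamma: float = GAMMA) -> float:
    """How far the green-token count deviates from what unmarked text would show."""
    n = len(tokens) - 1
    if n <= 0:
        return 0.0
    hits = sum(is_green(a, b, gamma) for a, b in zip(tokens, tokens[1:]))
    return (hits - gamma * n) / math.sqrt(n * gamma * (1 - gamma))
```

A detector would flag text whose z-score exceeds a chosen threshold (for example 4). Paraphrasing the text replaces tokens and lowers the score, which illustrates the tamper-resistance challenge noted above.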

Data Provenance

Data provenance involves tracking the origin and modifications of data. By embedding metadata into content or storing it externally on an immutable ledger like blockchain, we can ensure the integrity of data used in AI training and generation.

- Applications:

- Ethical AI training through verified datasets.

- Preventing copyright violations by ensuring proper attribution.

- Examples:

- Adobe Content Credentials and the Content Authenticity Initiative (CAI): Adobe products attach provenance metadata to creations, and the CAI promotes an open standard for cross-platform use.

- Initiatives like C2PA and the Data Provenance Initiative aim to standardize these practices.
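The idea of an append-only provenance record can be sketched with a simple hash chain. Each entry stores a hash of the content plus the hash of the previous entry, so any later modification breaks the chain. The field names and helper functions below are hypothetical and do not follow the C2PA manifest format or any specific blockchain API.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def record_hash(body: dict) -> str:
    """Hash a record deterministically by serializing it with sorted keys."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(ledger: list, content: bytes, action: str, author: str) -> dict:
    """Append a provenance entry that is linked to the previous entry's hash."""
    body = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "action": action,
        "author": author,
        "prev_hash": ledger[-1]["hash"] if ledger else GENESIS_HASH,
    }
    entry = dict(body, hash=record_hash(body))
    ledger.append(entry)
    return entry


def verify_ledger(ledger: list) -> bool:
    """Recompute every hash and check that each entry links to the one before it."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or record_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier entry (for example, changing its `author`) makes `verify_ledger` return `False`, which is the tamper-evidence property that provenance systems rely on.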

Explainable AI (XAI)

AI often functions as a “black box,” making it hard to verify if outputs are accurate or hallucinated. XAI bridges this gap by providing transparency and fostering trust.

- Key Techniques:

- Interpretable AI Models: Linking AI outputs to specific inputs and reasoning.

- LIME (Local Interpretable Model-Agnostic Explanations): Explains a single prediction by fitting a simple, interpretable model that approximates the complex model in the neighborhood of that input.

- SHAP (SHapley Additive exPlanations): Uses Shapley values from cooperative game theory to measure how much each input feature contributes to a prediction.
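The Shapley idea behind SHAP can be shown on a tiny model by brute force. The sketch below averages each feature's marginal contribution over every ordering in which features are switched from a baseline value to their actual value. The function `shapley_values` and the toy model are assumptions for illustration. The `shap` library uses faster approximations, and this exact enumeration is only practical for a handful of features.

```python
from itertools import permutations
from math import factorial


def shapley_values(f, x, baseline):
    """Exact Shapley values of each feature of `x` relative to `baseline`."""
    n = len(x)
    phi = [0.0] * n
    for order in permutations(range(n)):
        current = list(baseline)
        prev_output = f(current)
        for i in order:
            current[i] = x[i]  # switch feature i from baseline to its actual value
            new_output = f(current)
            phi[i] += new_output - prev_output
            prev_output = new_output
    return [total / factorial(n) for total in phi]


def toy_model(v):
    """Hypothetical model: one additive term and one interaction term."""
    return 2 * v[0] + v[1] * v[2]


contributions = shapley_values(toy_model, x=[1.0, 2.0, 3.0], baseline=[0.0, 0.0, 0.0])
# contributions == [2.0, 3.0, 3.0]; they sum to toy_model(x) - toy_model(baseline) == 8.0
```

The interaction term `v[1] * v[2]` is split evenly between the two features involved, and the contributions always add up to the difference between the prediction and the baseline output.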

Homomorphic encryption

Homomorphic encryption enables computations on encrypted data without needing decryption, ensuring privacy while processing sensitive information.

- Examples of Use: Medical data analysis, financial forecasting.

- Popular Libraries: Microsoft SEAL and Zama’s Concrete.

- Challenges: Performance overhead and complexity. Innovations are underway to make this technology more efficient.
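To show what "computing on encrypted data" means, the sketch below implements a toy version of the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. This is an illustration only. The primes are tiny and insecure, the function names are made up for this example, and it does not use or mirror the APIs of Microsoft SEAL or Zama's Concrete, which implement different and more capable schemes.

```python
import math
import random


def keygen(p: int = 293, q: int = 433):
    """Toy key generation with tiny demo primes (NOT secure)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    g = n + 1                # standard simple choice of generator
    mu = pow(lam, -1, n)     # modular inverse (Python 3.8+); valid because g = n + 1
    return (n, g), (lam, mu)


def encrypt(pub, m: int, rng: random.Random) -> int:
    n, g = pub
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n2) * pow(r, n, n2)) % n2


def decrypt(pub, priv, c: int) -> int:
    n, _ = pub
    lam, mu = priv
    u = pow(c, lam, n * n)
    return ((u - 1) // n * mu) % n


def add_encrypted(pub, c1: int, c2: int) -> int:
    """Homomorphic addition: the product of ciphertexts encrypts the sum."""
    n, _ = pub
    return (c1 * c2) % (n * n)
```

For example, a server holding only `encrypt(pub, 20, rng)` and `encrypt(pub, 22, rng)` can call `add_encrypted` and return the result. Only the key holder can decrypt it, and the decrypted value is 42.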

Additionally, zero-knowledge proofs (ZKPs) offer privacy-preserving mechanisms, such as proving ownership of a license without revealing personal details like age or ID number.

Blockchain and AI

Blockchain, with its decentralized and immutable ledger, offers transformative benefits for AI in several key areas:

Data Provenance: Blockchain enables the transparent tracing of data inputs used in model training, ensuring the integrity and security of the data. By maintaining a complete history of data modifications on the blockchain, it prevents tampering and builds trust in AI systems. Additionally, contributors of data can be rewarded through smart contracts, fostering ethical and transparent data sharing.

Example: Ocean Protocol facilitates data traceability and monetization in a decentralized manner.

Decentralized and Federated Learning: By distributing data and training processes across multiple nodes, blockchain supports decentralized or federated learning, which enhances data privacy and reduces risks associated with centralized storage.

Example: SingularityNET enables decentralized AI model training while maintaining data security and privacy.
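The aggregation step at the heart of federated learning can be sketched in a few lines. Each node trains locally and shares only its model weights, and a coordinator combines them into a weighted average in which nodes with more data count for more (the approach commonly called federated averaging). The function name and the list-of-floats weight format are assumptions for this example. The blockchain coordination layer described above is not shown.

```python
def federated_average(client_weights, client_sizes):
    """Average client model weights, weighting each client by its sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical clients: one trained on 1 sample, the other on 3 samples.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
# global_weights == [2.5, 3.5]
```

Raw training data never leaves the clients. Only the weight vectors are exchanged, which is the property that reduces the risks of centralized storage.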

Content Verification and Deepfake Prevention: Blockchain can be used to track AI-generated content and the data that contributed to it. This traceability ensures accountability and helps combat issues like deepfakes by verifying content authenticity.

Example: Numbers Protocol and Adobe’s Content Authenticity Initiative (CAI) provide solutions for tracking and verifying AI-generated content.

Differential privacy

Differential privacy ensures that AI systems can learn from input data without exposing details about any individual. By adding calibrated noise to the data, to query results, or to the training process, it minimizes the risk of re-identification.

The guarantee is that adding or removing a single data point does not significantly affect model outputs.

Examples:

Google's TensorFlow Privacy library adds noise to gradients during training to protect sensitive information.
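A minimal sketch of the noise-adding idea is the Laplace mechanism applied to a counting query. Adding or removing one person changes a count by at most 1, so Laplace noise with scale 1/epsilon is enough to mask any individual's presence. The function names and the sample data are hypothetical. This shows the basic mechanism, not the gradient-based training that TensorFlow Privacy performs.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count; smaller epsilon means more noise and more privacy."""
    true_count = sum(1 for v in values if predicate(v))
    sensitivity = 1.0  # one person changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical data: how many people are older than 40?
ages = [23, 35, 41, 52, 67, 29, 48]
noisy = private_count(ages, lambda age: age > 40, epsilon=0.5, rng=random.Random(0))
```

Any single answer is deliberately imprecise, but averages over many queries or large datasets remain useful. That trade-off is controlled by the privacy budget `epsilon`.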

Human-in-the-loop training (HILT) and reinforcement learning from human feedback (RLHF)

HILT integrates human oversight during both training and inference, ensuring models align with user needs.

RLHF fine-tunes models by using human feedback to train a reward model, which then guides further training to improve the model's performance and alignment with human values.

Examples: ChatGPT’s alignment process leverages RLHF for improving responses.
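The reward-modeling step of RLHF can be illustrated with a toy example. Human raters compare two responses, and a reward model is trained so that the preferred response scores higher, using the pairwise loss -log(sigmoid(r_chosen - r_rejected)). Everything below is a simplified assumption: responses are represented by hand-made feature vectors and the reward model is linear. Real systems use a neural network over text, followed by a reinforcement learning step that is not shown here.

```python
import math


def reward(w, x):
    """Linear reward: dot product of weights and response features."""
    return sum(wi * xi for wi, xi in zip(w, x))


def train_reward_model(pairs, dim, lr=0.5, epochs=200):
    """Fit weights so that each chosen response outscores its rejected one."""
    w = [0.0] * dim
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(w, chosen) - reward(w, rejected)
            # Gradient descent on -log(sigmoid(margin)):
            # the step size shrinks as the model becomes confident in the preference.
            coeff = 1.0 - 1.0 / (1.0 + math.exp(-margin))
            for i in range(dim):
                w[i] += lr * coeff * (chosen[i] - rejected[i])
    return w


# Hypothetical features: [helpfulness, verbosity]; raters preferred the first item in each pair.
preference_pairs = [
    ([1.0, 0.2], [0.1, 0.9]),
    ([0.8, 0.5], [0.3, 0.4]),
    ([0.9, 0.1], [0.2, 0.2]),
]
weights = train_reward_model(preference_pairs, dim=2)
```

After training, `reward(weights, chosen)` is higher than `reward(weights, rejected)` for every pair, so the learned reward can stand in for human judgment when scoring new responses.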

Regulations

AI regulations are still in their early stages, but they are essential for ensuring both the security and safety of AI systems. Effective regulations must strike a delicate balance—minimizing the potential harms of AI while fostering innovation. Different regions have adopted varying approaches to AI governance. For example, the European Union has implemented strict regulatory measures, while countries like the United States and the United Kingdom have opted for a more lenient or flexible approach. To ensure that AI development remains responsible and beneficial, a collaborative effort is required across nations, industries, and organizations. This global cooperation will help establish standardized guidelines and best practices, ensuring AI is developed and deployed safely, ethically, and effectively.

NIST AI risk management framework

The National Institute of Standards and Technology (NIST) has developed an AI Risk Management Framework to guide the design, development, and deployment of trustworthy AI systems. This framework focuses on accuracy, reliability, robustness, privacy, and security of AI systems.

EU AI Act

This act classifies AI applications into four tiers: unacceptable risk, high risk, limited risk, and minimal risk. The higher the tier, the stricter the obligations. For example, health care applications typically fall into a higher risk category and are therefore subject to stricter requirements.