This is the sixth and final paper introducing the concepts of RDF and linked data, and explaining how these Semantic Web technologies can be used to publish library catalogue data.

The previous papers in this series, which serve as technical introductions to this paper, are:

Libraries and linked data #1: What are linked data?

Libraries and linked data #2: A rough guide to Turtle.

Libraries and linked data #3: Encoding bibliographic records in RDF.

Libraries and linked data #4: A comparison of RDF and XML.

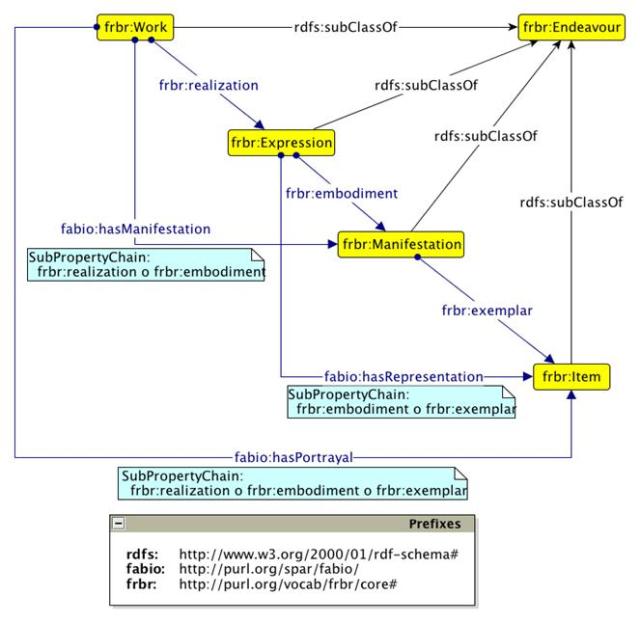

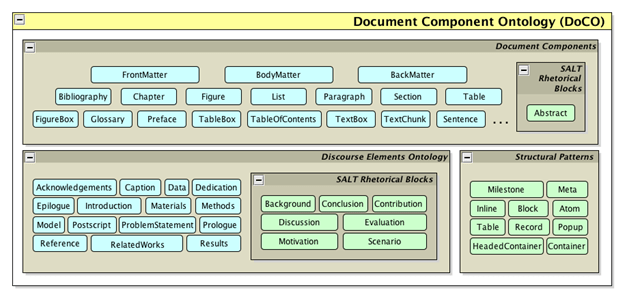

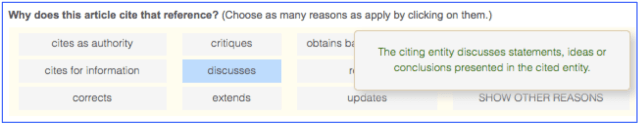

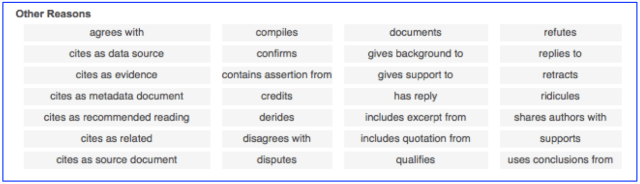

Libraries and linked data #5: Using the SPAR ontologies to publish bibliographic records.

This paper is based on a presentation given at the Ticer Librarians Summer School at the University of Tilburg, Netherlands, on 23rd August 2012.

Where are we today?

Scholarly publishing and librarianship are in the throes of a revolution, as the full potential of online publications and library catalogues is explored. Card catalogues have been replaced with OPAC systems, and libraries have embraced RFID technology for tracking physical holdings. But in many ways libraries remain traditional, in part because of the inertia created by legacy cataloguing systems. In particular, libraries have not adopted Web standards for their metadata management, but rather continue to employ a variety of open or proprietary informational models based on XML or earlier standards such as MARC.

In contrast, modern web information management techniques employ standards such as RDF and OWL 2 to encode information in ways that permit computers to query metadata and integrate web-based information from multiple resources in an automated manner.

Thus, at this mid-point in the digital revolution, library practice is in an ill-defined transitional state—a ‘horseless carriage’ state—that lies somewhere between the world of print and paper and the world of the web and computers, with the former still exercising significantly more influence than the latter. We are now online – that is a significant start – but we should also be wholeheartedly adopting Web standards and contributing to the world of open linked data. We need to advance from the horseless carriage to the Ferrari!

Potential benefits of publishing library catalogues as open linked data

It is obvious that publishing the catalogues of major libraries as open linked data will permit their use in ways that will never be possible as long as they are kept in-house as MARC records.

If such data were available as open linked data in a triple store with a SPARQL query endpoint, they would be available to anyone, in a machine-processable format that could immediately be integrated automatically with similar data from other sources, rather than available only to human eyeballs via the library’s online catalogue (excellent as that might be).
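As a minimal sketch of what such machine access might look like, the following Python fragment builds a SPARQL Protocol GET request asking for titles and creators. The endpoint address `http://example.org/sparql` and the helper name `build_sparql_url` are hypothetical, chosen for illustration only; the Dublin Core Terms namespace is real. Nothing is sent over the network here.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Hypothetical endpoint: substitute the address of a real triple store.
ENDPOINT = "http://example.org/sparql"

QUERY = """\
PREFIX dcterms: <http://purl.org/dc/terms/>
SELECT ?work ?title ?creator
WHERE {
  ?work dcterms:title ?title ;
        dcterms:creator ?creator .
}
LIMIT 10
"""

def build_sparql_url(endpoint: str, query: str) -> str:
    """Return a GET URL carrying the query in the 'query' parameter,
    the parameter name defined by the SPARQL Protocol."""
    return endpoint + "?" + urlencode({"query": query})

url = build_sparql_url(ENDPOINT, QUERY)

# Round-trip check: decoding the URL gives back the original query text.
decoded = parse_qs(urlsplit(url).query)["query"][0]
```

The same query text could be sent unchanged to any endpoint that exposes Dublin Core metadata, which is what makes automatic integration across libraries plausible.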

For example, one could record the dates and geographical coordinates relating to ancient documents held by the Bodleian Libraries, and to the sites described in its archaeological publications, and map these coordinates onto Google Maps or some other useful mapping system, together with similar data held at Cambridge University. A scholar from Sweden could then see at a glance that Cambridge holds early descriptions of archaeological sites at Nimrud and Nineveh in ancient Mesopotamia, together with a large number of Mesopotamian written documents dating back 3000 years, whereas the Sackler Library in Oxford is particularly rich in papyri and information about Egyptian archaeological sites, and also has good holdings on cuneiform languages and Assyrian reliefs.

However, in reality, it is impossible to predict how such open data might actually be used. As Rufus Pollock famously said:

“The best thing to do with your data will be thought of by someone else”.

In 2001, Tim Berners-Lee [1] predicted that the semantic web

“. . . will likely profoundly change the very nature of how knowledge is produced and shared, in ways that we can now barely imagine.”

Additionally, in his technical paper on linked data, co-authored by Chris Bizer and Tom Heath, and entitled Linked Data – The Story So Far [2], Tim Berners-Lee concludes:

“Linked Data principles and practices have been adopted by an increasing number of data providers, resulting in the creation of a global data space on the Web containing billions of RDF triples. Just as the Web has brought about a revolution in the publication and consumption of documents, Linked Data has the potential to enable a revolution in how data is accessed and utilised. . . . Linked Data realizes the vision of evolving the Web into a global data commons, allowing applications to operate on top of an unbounded set of data sources, via standardised access mechanisms. . . . We expect that Linked Data will enable a significant evolutionary step in leading the Web to its full potential.”

Examples of major users of open linked data

The BBC

The BBC is one of the largest corporations now totally committed to using RDF to store information. The BBC’s World Cup 2010 website used a high-performance dynamic semantic publishing framework underpinned by RDF and appropriate ontologies, providing far deeper and richer use of content than could have been achieved through traditional publishing solutions. Similarly, the BBC Music website is built on Linked Data and RDF, and provides a RESTful API for querying its data, and the entire BBC Natural History web site is powered by RDF, with its own Wildlife Ontology. The BBC got to this place by hiring bright people who have relevant semantic web skills.

The following diagram shows part of the linked data world, taken from the linked data cloud diagram prepared in 2010 by Richard Cyganiak. More recent versions of the diagram are too cluttered to see the details, because of the ongoing growth of the web of linked data. However, this one shows clearly how BBC Music, by making its descriptions of music, artists, orchestras and bands freely available online in RDF, has become a global resource to which others are linking.

Nature Publishing Group

On 4th April 2012, the Nature Publishing Group published as open linked data the bibliographic records of all their journal articles dating back to 1869. The following is taken from their press release describing this:

“Nature Publishing Group (NPG) today is pleased to join the linked data community by opening up access to its publication data via NPG’s Linked Data Platform, available at http://data.nature.com. The platform includes more than 20 million Resource Description Framework (RDF) statements, including primary metadata for more than 450,000 articles published by NPG since 1869. These datasets are being released under an open metadata license, Creative Commons Zero (CC0), which permits maximal use/re-use of this data.

“NPG’s platform allows for easy querying, exploration and extraction of data and relationships about articles, contributors, publications, and subjects. Users can run web-standard SPARQL Protocol and RDF Query Language (SPARQL) queries to obtain and manipulate data stored as RDF. The platform uses standard vocabularies such as Dublin Core, FOAF, PRISM, BIBO and OWL, and the data is integrated with existing public datasets including CrossRef and PubMed.

“NPG is delighted to be able to surface data on published articles from Nature and many other journals, going back to 1869,” said Jason Wilde, Business Development Director, NPG. “Linked data is an important next step in the evolution of scientific publishing and, over the coming months, we hope to be able to expose more meta-data on our content to enrich the semantic web.”

“Linked data refers to the publishing of structured data that is linked to other related data. It allows users to query, explore and link data from datasets across the web. NPG joins governments from around the world and other organizations including the British Library, the New York Times and the Open University, in providing a linked data platform.”

RDF library catalogues – what are others doing?

WorldCat

WorldCat is the largest online public access catalogue (OPAC) in the world, providing access to a global network of library content and services. It is run by OCLC (Online Computer Library Center, Inc.), a non-profit membership computer library service and research organization dedicated to the public purposes of furthering access to the world’s information and reducing information costs, founded in 1967 as the Ohio College Library Center. WorldCat presently contains ~20 million records, and its Search API provides access to a FRBR-ized set of WorldCat bibliographic records and holdings.

In June 2012, OCLC dramatically increased its exposure of linked data resources by making available a downloadable linked data set for 1.2 million WorldCat resources. The WorldCat.org bibliographic metadata has been created using simple Schema.org mark-up and library vocabulary extensions.

On 6 November 2012, OCLC announced that the National Library of Poland (Biblioteka Narodowa) will add 1.3 million Polish library records to WorldCat, enriching the world’s largest resource for discovery of library materials and increasing the visibility of these collections for researchers around the world. These entries will become available as linked data.

The British Library

The British Library has recently published an open version of the British National Bibliography which it is making available in RDF as Linked Open Data. The initial offering includes published books and serial publications published or distributed in the UK since 1950.

Examples of its encoding, formatted in RDF/XML and including some terms from the preliminary version of RDA, are available for books and serials. A subset of the entries in the BNB serials RDF/XML example converted into Turtle format is available here.

The Open Library

The Internet Archive’s Open Library is a Wikipedia-like open, editable library catalogue that aims to create a web page for every book ever published. To date, it has over 20 million records. Open Library has an open RESTful API that permits Open Library data to be obtained programmatically in a variety of formats, including RDF.
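By way of illustration, the short Python sketch below composes the address of a single Open Library record in a chosen format. It assumes the URL pattern of record type, identifier and format suffix (for example `.rdf` or `.json`); that pattern, the helper name `record_url` and the identifier used in the example are assumptions to be checked against the current Open Library developer documentation. The function only builds the address and does not fetch anything.

```python
BASE = "https://openlibrary.org"

# Record types and format suffixes assumed for this sketch.
KINDS = {"books", "works", "authors"}
FORMATS = {"rdf", "json"}

def record_url(kind: str, olid: str, fmt: str = "rdf") -> str:
    """Compose the address of one record, e.g. .../books/<id>.rdf."""
    if kind not in KINDS:
        raise ValueError(f"unknown record type: {kind}")
    if fmt not in FORMATS:
        raise ValueError(f"unknown format: {fmt}")
    return f"{BASE}/{kind}/{olid}.{fmt}"

# Illustrative identifier only.
example = record_url("books", "OL1M")
```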

How to get from existing catalogues to linked data?

Perhaps the first thing to say is that the metadata fields required to permit resource discovery using linked data are not extensive, and should as far as possible use terms from widely-used vocabularies such as Dublin Core and FOAF, supplemented as appropriate by terms from more specialized bibliographic ontologies such as BIBO or the SPAR Ontologies. For example, the RDF metadata provided for the British National Bibliography is reasonably straightforward, involving:

- provision of authors’ names, title and abstract as marked up text strings;

- use of concepts such as agent, label, language, location, name, publication start date, and subject to describe bibliographic entities;

- specification of identifiers such as ISSN and internal British National Bibliography ID numbers;

- specification of the nature of the bibliographic resource, e.g. periodical;

- description of subject categories defined by the Dewey Decimal Classification schema and Library of Congress Subject Headings (already present in the British Library cataloguing data).
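To give a feel for how little is involved, the Python sketch below renders a serial described with fields of the kinds listed above as a Turtle block. The record values and the `example.org` URIs are invented, and the choice of Dublin Core Terms and BIBO terms is an assumption made for illustration; it is not the British Library's published data model.

```python
# Illustrative only: values and example.org URIs are invented, and the
# vocabulary choices are assumptions, not the BNB's actual modelling.
PREFIXES = {
    "dcterms": "http://purl.org/dc/terms/",
    "bibo": "http://purl.org/ontology/bibo/",
}

def to_turtle(subject, rdf_type, properties):
    """Render one resource as a Turtle block. Each object must already be
    a valid Turtle term (a quoted literal or an <IRI>)."""
    lines = [f"@prefix {p}: <{ns}> ." for p, ns in PREFIXES.items()]
    lines.append("")
    lines.append(f"<{subject}> a {rdf_type} ;")
    body = [f"    {pred} {obj}" for pred, obj in properties]
    lines.append(" ;\n".join(body) + " .")
    return "\n".join(lines)

serial = to_turtle(
    "http://example.org/serial/1",
    "bibo:Periodical",                      # nature of the resource
    [
        ("dcterms:title", '"An Example Journal"'),
        ("dcterms:language", '"en"'),
        ("bibo:issn", '"0000-0000"'),       # placeholder identifier
        ("dcterms:subject", "<http://example.org/subject/archaeology>"),
    ],
)
print(serial)
```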

Thus, if one’s internal catalogue data are in good shape – and academic library cataloguers are renowned for the attention to detail with which their catalogues are curated – and are also available programmatically through an API, it should be fairly straightforward (a) to specify the metadata items one wishes to expose as open linked data, and (b) to create a script that will automatically convert from the existing format to RDF.
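The two steps can be sketched in a few lines of Python, under stated assumptions: the internal record is taken to be a simple dictionary, the field names (`title`, `creator`, `issued`, `shelfmark`) and the base URI are hypothetical, and the output is N-Triples with plain string literals. Step (a) is the mapping table, which doubles as a whitelist; step (b) is the conversion function.

```python
# (a) Fields chosen for exposure, mapped to predicate IRIs. The field names
# on the left are hypothetical; the Dublin Core Terms IRIs are real.
FIELD_TO_PREDICATE = {
    "title": "http://purl.org/dc/terms/title",
    "creator": "http://purl.org/dc/terms/creator",
    "issued": "http://purl.org/dc/terms/issued",
}

def escape_literal(value):
    """Escape backslashes, quotes and line breaks for an N-Triples literal."""
    return (value.replace("\\", "\\\\").replace('"', '\\"')
                 .replace("\n", "\\n").replace("\r", "\\r"))

# (b) Convert one record; fields absent from the mapping are never emitted.
def record_to_ntriples(base_uri, record):
    subject = f"<{base_uri}{record['id']}>"
    triples = []
    for field, predicate in FIELD_TO_PREDICATE.items():
        values = record.get(field, [])
        if isinstance(values, str):
            values = [values]          # treat a single value as a list of one
        for value in values:
            triples.append(f'{subject} <{predicate}> "{escape_literal(value)}" .')
    return triples

# Invented sample record; 'shelfmark' stays in-house because it is unmapped.
record = {
    "id": "b1",
    "title": 'A "Sample" Title',
    "creator": ["Author, First", "Author, Second"],
    "shelfmark": "internal only",
}
triples = record_to_ntriples("http://example.org/catalogue/", record)
```

A production script would also mint stable URIs for authors and subjects rather than emitting them as strings, but the overall shape of the conversion stays the same.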

An example is the conversion of the records of the National Library of Sweden, which have been online since 1997. That library opened its catalogue as open linked data in 2008, as described in [3], [4] and [5].

Other examples of mapping from non-RDF bibliographic metadata to RDF are given by our recent mappings of DataCite XML metadata to RDF [6] and, separately, of JATS (the Journal Article Tag Suite) XML markup to RDF [7].

The future of the MARC 21 cataloguing standard, and the potential for the FRBR-based RDA (Resource Description and Access) to become the new standard for library cataloguing, are quite separate topics, beyond the expertise of this writer and the scope of the papers in this series. However, the following sources are of relevance [8-11].

References

[1] Berners-Lee, Tim and Hendler, James (2001). Scientific publishing on the ‘semantic web’. Nature 410: 1023-1024. http://www.nature.com/nature/debates/e-access/Articles/bernerslee.htm.

[2] Bizer C, Heath T and Berners-Lee T (2009). Linked data – the story so far. International Journal on Semantic Web and Information Systems 5 (3): 1–22. http://eprints.soton.ac.uk/271285/1/bizer-heath-berners-lee-ijswis-linked-data.pdf.

[3] Anders Söderbäck (2009). Why libraries should embrace Linked Data. National Library of Sweden presentation, available from http://code4lib.org/files/LIBRIS_code4lib.pdf.

[4] Martin Malmsten (2008). Making a Library Catalog Part of the Semantic Web. In: 8th International Conference on Dublin Core and Metadata Applications (Berlin): Metadata for semantic and social applications. pp 146-152. Available from http://libris.kb.se/resource/bib/11306748.

[5] Martin Malmsten (2009). Exposing Library Data as Linked Data. Available from http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.181.860&rep=rep1&type=pdf.

[6] Shotton D (2012). Revising the DataCite2RDF Mapping Document. http://opencitations.wordpress.com/2012/12/04/revising-datacite2rdf-mapping/

[7] Peroni S, Lapeyre DA and Shotton D (2012) From Markup to Linked Data: Mapping NISO JATS v1.0 to RDF using the SPAR (Semantic Publishing and Referencing) Ontologies. Proc. 2012 JATS Conference, National Library of Medicine, Bethesda, Maryland, USA, 16-17 October 2012. http://www.ncbi.nlm.nih.gov/books/NBK100491/.

[8] Coyle, Karen (2012). Linked Data Tools: Connecting on the Web. Library technology report for ALA TechSource. Available from http://www.alatechsource.org/taxonomy/term/106/linked-data-tools-connecting-on-the-web.

[9] Cronin, C. (2011). Will RDA mean the death of MARC? University of Chicago paper. Available from http://chicago.academia.edu/ChristopherCronin/Talks/33602/Will_RDA_Mean_the_Death_of_MARC_The_Need_for_Transformational_Change_to_our_Metadata_Infrastructures.

[10] JISC mailing list https://www.jiscmail.ac.uk/cgi-bin/webadmin?A0=DC-RDA has an online discussion on issues surrounding RDA, including encoding Marc21 in RDF.

[11] Kiorgaard, D. (2006). RDA and MARC21. Library of Congress paper, available from http://www.loc.gov/marc/marbi/2007/5chair12.pdf.