London-based software development consultant

- 678 Posts

- 93 Comments

11·3 days ago

The article’s point is that AI is a multiplier: to get benefits from it rather than detriments, you need to get your house in order first:

The Widening AI Value Gap report by BCG found a similar result from a different angle: 74% of companies struggle to scale AI value, and only 21% of pilots reach production. The 5% generating real returns had first built fit-for-purpose technology architecture and data foundations. This suggests that the problem is not AI itself but the underlying infrastructure it is being added to.

AI scales the groundwork; teams that successfully adopt AI typically already have solid foundational practices in place, while those lacking them struggle to get value from their AI investments.

2·5 days ago

Checking against Can I Use, all of the APIs except the following are supported by the major browsers:

- Synchronous Clipboard API: only Safari has full support; the others have partial support

- Temporal: currently only supported in Chrome and Firefox
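Given the uneven support, it’s safer to detect Temporal at runtime than to assume it exists. A minimal sketch, assuming a fallback to `Date` is acceptable for your use case; the helper names are hypothetical, and the check only confirms that the `Temporal` global is present:

```typescript
// Hypothetical helper: true if the runtime exposes the Temporal global.
// Accessed via globalThis so this compiles without Temporal type definitions.
function hasTemporal(): boolean {
  const g = globalThis as any;
  return typeof g.Temporal === "object" && g.Temporal !== null;
}

// Hypothetical helper: today's date in UTC as a "YYYY-MM-DD" string.
function todayIsoUtc(): string {
  if (hasTemporal()) {
    const T = (globalThis as any).Temporal;
    // Temporal.Now.plainDateISO takes an optional time zone identifier.
    return T.Now.plainDateISO("UTC").toString();
  }
  // Fallback: toISOString is always UTC, so the first 10 characters are the date.
  return new Date().toISOString().slice(0, 10);
}

console.log(hasTemporal() ? "using Temporal" : "falling back to Date", todayIsoUtc());
```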

6·6 days ago

> The fact that people even bring JavaScript as the backend is a bit crazy to me.

To clarify, do you mean replacing JavaScript just on the backend? This article is about using JavaScript on the front end.

2·6 days ago

I’m intrigued: what would you replace it with?

What are your thoughts on this 2023 comparison?

So, to confirm: you don’t trust blogs from companies that sell a product or service, even if the article doesn’t mention it? If so, that would rule out a lot of the articles shared on this instance.

For what? I don’t see any products or services being promoted in this article.

> People who care about performance are using loops

Well, that depends: generators are faster than loops when you’re using Bun or Node.
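Claims like this are easy to check on your own runtime. A rough micro-benchmark sketch, assuming Node or Bun with the global `performance.now()`; the function names are illustrative, and results vary with engine version, input size and JIT warm-up, so treat any numbers as indicative only:

```typescript
// Sums 0..n-1 with a plain for loop.
function sumWithLoop(n: number): number {
  let total = 0;
  for (let i = 0; i < n; i++) total += i;
  return total;
}

// Lazily yields 0..n-1.
function* range(n: number): Generator<number> {
  for (let i = 0; i < n; i++) yield i;
}

// Sums the same values by iterating a generator.
function sumWithGenerator(n: number): number {
  let total = 0;
  for (const i of range(n)) total += i;
  return total;
}

// Accumulates results so the timed work is actually used.
let sink = 0;

// Returns the fastest of several timed runs, in milliseconds.
function bestTimeMs(fn: (n: number) => number, n: number, runs = 5): number {
  sink += fn(n); // warm-up run so the JIT can optimise first
  let best = Infinity;
  for (let r = 0; r < runs; r++) {
    const start = performance.now();
    sink += fn(n);
    best = Math.min(best, performance.now() - start);
  }
  return best;
}

const N = 1_000_000;
console.log(`loop:      ${bestTimeMs(sumWithLoop, N).toFixed(2)} ms`);
console.log(`generator: ${bestTimeMs(sumWithGenerator, N).toFixed(2)} ms`);
```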

1·9 days ago

The conclusion aligns with my own belief, which is that it’s better to write a minimal context by hand than to get agents to create it:

> We find that all context files consistently increase the number of steps required to complete tasks. LLM-generated context files have a marginal negative effect on task success rates, while developer-written ones provide a marginal performance gain.

When I’ve had Claude create a context file, it’s been overly verbose, which also costs tokens.

However, in this case the opposite is true, as Chromium doesn’t currently support this feature.

Do you mean features only currently available in Chrome?

Well spotted. The article states:

> The `:heading` pseudo-class is currently available in nightly builds only. You can test it now in:
>
> - Firefox Nightly (behind a flag)
>
> - Safari Technology Preview
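Until `:heading` ships more widely, one option is to gate styles on support. A sketch, assuming a browser environment: `CSS.supports()` with the `selector()` function is a standard way to test selector support, while the `supportsHeadingPseudoClass` helper and the `heading-fallback` class are hypothetical:

```typescript
// Returns true if the browser understands the :heading pseudo-class.
// Uses globalThis so the code also runs (returning false) outside a browser.
function supportsHeadingPseudoClass(): boolean {
  const css = (globalThis as any).CSS;
  return typeof css?.supports === "function" && css.supports("selector(:heading)");
}

// Hypothetical fallback: mark the root element so a stylesheet can
// target h1-h6 explicitly when :heading is not available.
const doc = (globalThis as any).document;
if (doc && !supportsHeadingPseudoClass()) {
  doc.documentElement.classList.add("heading-fallback");
}
```

The same check can be written in pure CSS with `@supports selector(:heading) { ... }`, which avoids JavaScript entirely.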

There are some really good tips on delivery and best practice. In summary:

Speed comes from making the safe thing easy, not from being brave about doing dangerous things.

Fast teams have:

- Feature flags so they can turn things off instantly

- Monitoring that actually tells them when something’s wrong

- Rollback procedures they’ve practiced

- Small changes that are easy to understand when they break

Slow teams are stuck because every deploy feels risky. And it is risky, because they don’t have the safety nets.
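The first safety net, turning things off instantly, can start as a flag lookup that defaults to off. A minimal in-memory sketch; the `FlagStore` class and the flag name are hypothetical, and a real system would read flags from a config service so they can be changed without a deploy:

```typescript
// Minimal in-memory feature flag store with a kill switch.
class FlagStore {
  private flags = new Map<string, boolean>();

  // Unknown flags default to off, so a missing config fails safe.
  isEnabled(name: string): boolean {
    return this.flags.get(name) ?? false;
  }

  enable(name: string): void {
    this.flags.set(name, true);
  }

  // Kill switch: takes effect on the next isEnabled() check.
  disable(name: string): void {
    this.flags.set(name, false);
  }
}

const flags = new FlagStore();
flags.enable("new-checkout");

function checkout(): string {
  // The old path stays in place until the new one has proven itself.
  return flags.isEnabled("new-checkout") ? "new checkout flow" : "old checkout flow";
}

console.log(checkout()); // new checkout flow
flags.disable("new-checkout");
console.log(checkout()); // old checkout flow
```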

It’s working now.

3·11 days ago

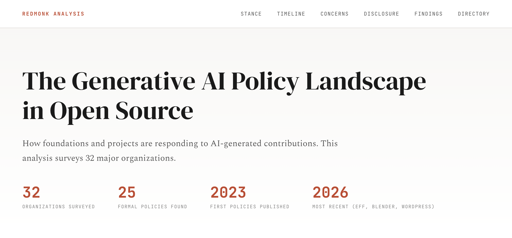

I think there are many solutions to this, including setting a minimum account age for accepting pull requests, or using Vouch.
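A minimum account age check is straightforward to automate. A sketch, assuming you already have the contributor’s account creation timestamp (GitHub’s `GET /users/{username}` REST endpoint returns it as `created_at`); the helper name and the 30-day threshold are hypothetical:

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Returns true if the account is at least minDays old at time `now`.
// createdAt is an ISO 8601 timestamp such as "2024-01-15T12:00:00Z".
function isAccountOldEnough(createdAt: string, minDays: number, now: Date = new Date()): boolean {
  const created = Date.parse(createdAt);
  if (Number.isNaN(created)) {
    // Unparseable dates fail closed: treat the account as too new.
    return false;
  }
  const ageDays = (now.getTime() - created) / MS_PER_DAY;
  return ageDays >= minDays;
}

// An account created 10 days before "now" fails a 30-day minimum.
const now = new Date("2025-03-01T00:00:00Z");
console.log(isAccountOldEnough("2025-02-19T00:00:00Z", 30, now)); // false
console.log(isAccountOldEnough("2024-12-01T00:00:00Z", 30, now)); // true
```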

There’s a great rebuttal to Shumer’s post: “Why I’m not worried about AI job loss”.

2·14 days ago

> Guys, can we add a rule that all posts that deal with using LLM bots to code must be marked? I am sick of this topic.

How would you like them to be marked? AFAIK, Lemmy doesn’t support post tags.

I try to stay well-read on AI, and I regularly use Claude, but I’m not convinced by this article. It makes no mention of the bubble that could burst. As for the models improving, aren’t the improvements slowing down?

More importantly, the long-term effects of using AI are still unknown, and for that reason the adoption trajectory could change.

The other factor to consider is that the author of this article is a big investor in AI, so it’s in his interest to generate more hype around it. I have no doubt that generative AI will change coding forever, but I’m skeptical about other areas, especially considering the costly controversy over Deloitte using AI to write reports for the Australian government.

I find articles like this about agentic engineering both compelling and anxiety-inducing. It’s staggering what people are achieving with agents toiling away in the background. However, it also leaves me feeling insecure: I have many ideas I would love to build, and previously my excuse was a lack of time. Now I worry I have no excuse when an agent could potentially build them whilst I sleep.

I wonder if we’ll end up with open source projects that have closed-source tests. Though I don’t know how that would work: how would you contribute a new feature if the tests are closed? 🤔