Introduction

In the dice game “Biscuits,” a player rolls 12d6+1d8+1d10+1d12, then selects one or more of the rolled dice to set aside, rolling the remainder again, continuing this process until eventually all 15 dice have been set aside. The player’s score is the sum of the final values shown on the dice, with the highest score winning the game.

A rolled six on a d6, an eight on the d8, or in general the maximum value on any die is referred to as a biscuit. As the rules suggest, “you want biscuits and lots of them!”, where “sometimes you’ll roll several biscuits all at once, you can set them all aside.”

You can set them all aside… but should you? That is, suppose that you roll sixes on multiple d6s at once, but relatively small values for the d8, d10, and/or d12. Should you set aside all of those biscuits, banking the highest possible score for those dice, or is it worth it to “give up” some of that guaranteed score in exchange for more future opportunities to roll better scores for the d8, d10, and d12?

Results

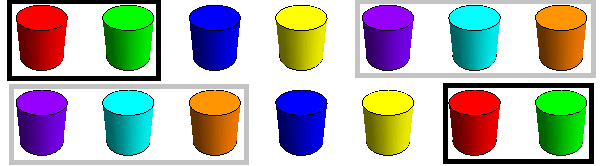

Not to beat around the bush, the latter turns out to be the case. Strategy in this game appears to be pretty interesting; let’s suppose that our objective is to maximize our expected total score (more on this later). Python code is available on GitHub to evaluate all possible moves that we might make from any given roll. A roll is described by one tuple per die type (d6, d8, d10, d12), each giving the number of dice showing each face value from 1 up to the maximum. As an extreme example, suppose that on the initial roll we get biscuits for all 12d6, but we roll 1 for each of the other dice:

    >>> analyze(((0, 0, 0, 0, 0, 12),
                 (1, 0, 0, 0, 0, 0, 0, 0),
                 (1, 0, 0, 0, 0, 0, 0, 0, 0, 0),
                 (1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0)))
    93.836 points by keeping d6 (6 ),
    93.761 points by keeping d6 (6 6 ),
    93.691 points by keeping d6 (6 6 6 ),
    93.625 points by keeping d6 (6 6 6 6 ),
    93.565 points by keeping d6 (6 6 6 6 6 ),
    93.509 points by keeping d6 (6 6 6 6 6 6 ),
    93.462 points by keeping d6 (6 6 6 6 6 6 6 ),
    93.449 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 6 6 ),
    93.427 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 6 ),
    93.426 points by keeping d6 (6 6 6 6 6 6 6 6 ),
    93.407 points by keeping d6 (6 6 6 6 6 6 6 6 6 ),
    93.407 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 ),
    87.292 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 6 6 ), d8 (1 ),
    87.167 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 6 ), d8 (1 ),
    87.064 points by keeping d8 (1 ),
    87.037 points by keeping d6 (6 6 6 6 6 6 6 6 6 6 ), d8 (1 ),
    ...

Optimal strategy is to keep just a single d6, sacrificing all 11 other biscuits in exchange for more future rolls to hopefully improve the contribution from the d8, d10, and d12. Even more interesting is that the expected total score isn’t monotonic in how many biscuits we might choose to set aside: keeping one d6 is best, and keeping all 12 is worse… but not as bad as keeping just 10 of them.

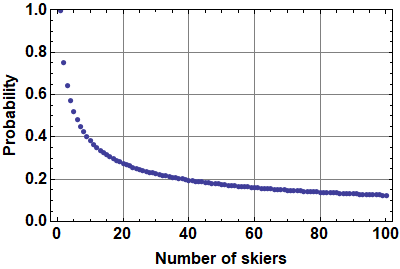

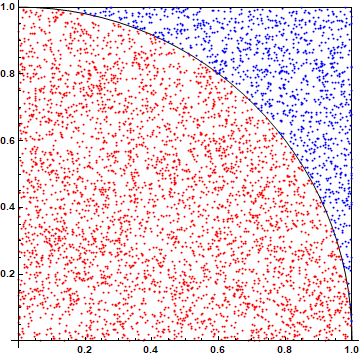

The figure below shows the distribution of possible total scores using the optimal (expectation-maximizing) strategy, sampled from 10,000 simulated games.

I was surprised at how well this strategy performs, with an expected score of about 93.9 (shown in red), and in a few simulated games even reaching the maximum possible score of 102.

Implementation

As discussed previously here, we can efficiently enumerate all possible outcomes from rolling a given subset of the dice as weak compositions of the total number of dice of each type. To compute optimal strategy for a given roll, we consider all possible non-empty subsets of dice to keep, recursively evaluating the expected score of each such move, memoizing results as we go.
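The recursion described above can be sketched in Python roughly as follows. This is a minimal illustration rather than the code from the GitHub repository: the names `weak_compositions`, `multinomial`, and `expected_score` are hypothetical, and the sketch is only practical for very small sets of dice.

```python
from functools import lru_cache
from itertools import product
from math import factorial, prod

def weak_compositions(n, k):
    """Yield all tuples of k non-negative integers summing to n."""
    if k == 1:
        yield (n,)
        return
    for first in range(n + 1):
        for rest in weak_compositions(n - first, k - 1):
            yield (first,) + rest

def multinomial(counts):
    """Number of ordered rolls that produce the given face counts."""
    result = factorial(sum(counts))
    for c in counts:
        result //= factorial(c)
    return result

def expected_score(dice, sides):
    """Expected final score under optimal play, with dice[i] dice of sides[i] faces."""
    @lru_cache(maxsize=None)
    def value(remaining):
        if sum(remaining) == 0:
            return 0.0
        # Each roll is one face-count vector (a weak composition) per die type.
        per_type = [list(weak_compositions(n, s)) for n, s in zip(remaining, sides)]
        total = 0.0
        for roll in product(*per_type):
            weight = prod(multinomial(counts) for counts in roll)
            best = 0.0
            # A move keeps, for each die type, between 0 and c dice of each face.
            moves = product(*(product(*(range(c + 1) for c in counts)) for counts in roll))
            for keep in moves:
                kept = tuple(sum(k) for k in keep)
                if sum(kept) == 0:
                    continue  # at least one die must be set aside
                points = sum(face * c for k in keep for face, c in enumerate(k, start=1))
                rest = tuple(n - m for n, m in zip(remaining, kept))
                best = max(best, points + value(rest))
            total += weight * best
        return total / prod(s ** n for n, s in zip(remaining, sides))
    return value(tuple(dice))

print(expected_score((2,), (2,)))  # two two-sided dice: 3.375
```

Memoizing on the tuple of remaining dice counts is what keeps the recursion tractable, since the value of a position depends only on which dice are still to be rolled and not on the points already banked.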

As an aside, the Python code was not the starting point. Quite often I find it useful to do my “thinking” in Mathematica, then convert the resulting algorithm into a more widely accessible form. It’s always interesting to see how much more concise (but often much less readable) the Mathematica implementation usually is, as shown below.

    (* Face-count vectors for n dice with d sides: weak compositions of n into d parts *)
    rolls[n_, d_] := Map[
      Differences[Append[Prepend[#, 0], n + d]] - 1 &,
      Subsets[Range[n + d - 1], {d - 1}]
    ]

    (* Expected score from the dice still to be rolled, under optimal play (memoized) *)
    score[ns_, ds_] := score[ns, ds] = Total@Map[
      (Times @@ Multinomial @@@ #) Max[0, Map[
        Total[Total /@ (Range[ds] #)] + score[ns - (Total /@ #), ds] &,
        Rest[Tuples[Tuples /@ Range[0, #]]]
      ]] &,
      Tuples[MapThread[rolls, {ns, ds}]]
    ] / Times @@ (ds^ns)

    score[{12, 1, 1, 1}, {6, 8, 10, 12}]

Open questions

I think there are a lot of interesting additional questions to pursue here. For example, is there a concise description of optimal strategy, or even an approximation of it, that a human player can implement with simple mental calculation?
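As a starting point for comparing candidate rules of thumb against the optimal expected score, one could simulate a simple heuristic. The sketch below uses a deliberately naive rule that is my own assumption, not the optimal strategy and not taken from the rules: keep every biscuit, and if there are none, keep the single die that is closest to its maximum. The name `play_greedy` is hypothetical.

```python
import random

DICE = [6] * 12 + [8, 10, 12]  # number of sides on each of the 15 dice

def play_greedy(rng):
    """Play one game with the naive rule: keep all biscuits, else the die nearest its maximum."""
    remaining = list(DICE)
    score = 0
    while remaining:
        roll = [(rng.randint(1, s), s) for s in remaining]
        keep = [i for i, (value, s) in enumerate(roll) if value == s]
        if not keep:
            # No biscuits: keep the one die that gives up the fewest points.
            keep = [min(range(len(roll)), key=lambda i: roll[i][1] - roll[i][0])]
        score += sum(roll[i][0] for i in keep)
        remaining = [s for i, s in enumerate(remaining) if i not in keep]
    return score

rng = random.Random(0)  # fixed seed so that runs are repeatable
scores = [play_greedy(rng) for _ in range(10000)]
print(sum(scores) / len(scores))  # sample mean score under this heuristic
```

Swapping in a different rule only requires changing how `keep` is chosen, which makes it easy to measure how much expected score a given mental shortcut gives up.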

Can the calculation of optimal strategy be made more efficient? Interestingly, both the Mathematica and Python implementations took about a day and a half to execute on my laptop. Add any more dice and things would get out of hand.

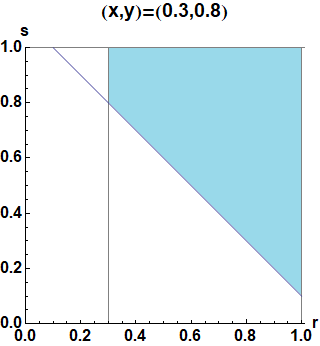

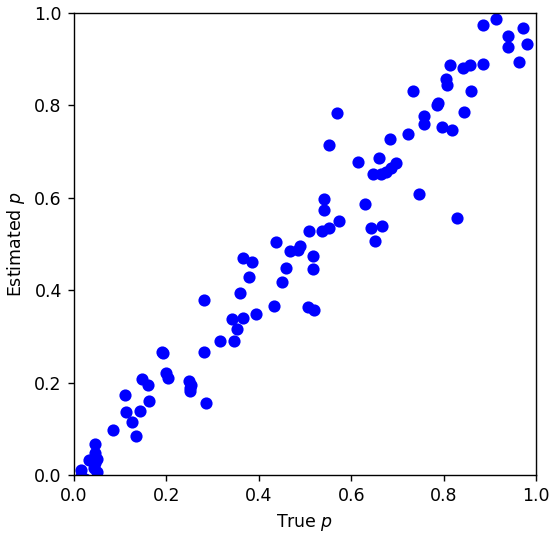

Finally, the strategy analyzed above is only optimal in the sense of maximizing expected score. This is not the same thing as maximizing the probability of beating another player. Similar to the approach utilized here, ideally we would evaluate the second player’s strategy first, with the utility function parameterized by the first player’s hypothetical final score. Then, once we know all of the possible expected values for the second player, we could compute the first player’s optimal strategy and overall value of the game, using the (negated) second player’s expected returns as the utility function. How different do the resulting strategies look, compared with maximizing expectation?
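To make the second player’s half of this idea concrete, here is a minimal sketch for a tiny set of dice. It assumes that the second player wins only by strictly exceeding the first player’s final score (how ties are handled is my assumption), and it enumerates ordered rolls by brute force, so it is nowhere near feasible for the full 15 dice. The name `win_probability` is hypothetical.

```python
from functools import lru_cache
from itertools import product

def win_probability(sides, target):
    """Best achievable probability that the final score strictly exceeds target."""
    @lru_cache(maxsize=None)
    def value(remaining, banked):
        if not remaining:
            return 1.0 if banked > target else 0.0
        n = len(remaining)
        total = 0.0
        outcomes = 0
        # Enumerate every equally likely ordered roll of the remaining dice.
        for roll in product(*(range(1, s + 1) for s in remaining)):
            best = 0.0
            for mask in range(1, 2 ** n):  # every non-empty subset of dice to keep
                kept = sum(roll[i] for i in range(n) if mask >> i & 1)
                rest = tuple(remaining[i] for i in range(n) if not mask >> i & 1)
                best = max(best, value(rest, banked + kept))
            total += best
            outcomes += 1
        return total / outcomes
    return value(tuple(sorted(sides)), 0)

print(win_probability((2, 2), 3))  # two two-sided dice, must beat 3: 0.5
```

Unlike the expected-score recursion, the state here has to include the points already banked, because whether a final total beats the target depends on the whole sum. That larger state space is one reason the win-probability version is more expensive to compute.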