Introduction

Despite my nephews and nieces growing older, it seems like I continue to encounter mathematically interesting children’s games. I recently played Gobblet Gobblers, a deceptively complex variant of Tic Tac Toe marketed for “ages 5 and up.”

The rules are simple:

- Like Tic Tac Toe, the game is played on a 3×3 board, with the winner being the first player to get 3 of their pieces in a row, column, or diagonal.

- Each player starts with 6 pieces: 2 pieces in each of 3 sizes. Each piece has a hollow dome shape, so that a smaller piece may be covered by a larger piece played on top of it.

- At each player’s turn, they may either play a new piece, or move one of their pieces already on the board (possibly uncovering a smaller piece underneath).

- Whether playing a new piece or moving one already played, the piece may be played/moved either to an empty square, or over a smaller piece (of either player’s) already on the board.

The neat wrinkle is being able to move pieces around without having to add a new piece to the board with each move. This not only increases the game’s state space complexity– there are 341,024,631 possible states up to board and player symmetry– but also complicates a straightforward minimax search, which must deal with the possibilities of repeated game states, and game tree paths hundreds of moves deep even without ever repeating a game state.

This post describes my approach to computing optimal strategy using retrograde analysis— I wasn’t familiar with this term from the chess world, I would have just called it dynamic programming with some extra sauce. The C++ implementation is on GitHub, with which you can play the game, show optimal moves for either player at any point in the game, and “rewind” to experiment with alternate playouts.

Playing the game and its variants

When you run the program, you are first asked for three parameters specifying the supported rule variations:

- The number of piece sizes (1, 2, or 3)

- The number of pieces of each size (1 to 9)

- A 0-1 Boolean indicating whether pieces may be moved after initially played.

For example, (3,2,1) corresponds to Gobblet, while (1,5,0) corresponds to plain old Tic Tac Toe, as shown below:

Enter rules (num_sizes, num_per_size, allow_move): 1 5 0

Searching... found 765 states.

Solving... solved 614 win/loss states.

| |

| |

0| 1| 2

------|------|------

| |

| |

3| 4| 5

------|------|------

| |

| |

6| 7| 8

Player 1, enter move (-size | start, end), or (0, 0) for best move, or (-1, -1) to undo move: -1 4

Solving Tic Tac Toe is trivial and quick, and most other rule variants take at most a couple of minutes to “think” before beginning the game. But Gobblet (3,2,1) is by far the most complex variant, taking about 25 minutes on my laptop to compute optimal strategy… but that’s a one-time cost, and includes saving the strategy to disk, so that you can quickly reload it to play again later without having to sit through the calculation again.

A move is specified by a pair of integers, indicating the piece to play-or-move and where to move it, with non-negative values 0 through 8 indicating a square on the board as labeled above. A negative value (-1, -2, or -3) indicates playing a new piece of the corresponding negated size. Thus, the example move (-1, 4) above indicates Player 1 (X) starting the game by playing in the center square.

Hidden pieces

Although technically a game of perfect information, in practice there is a memory component: as the rules warn, “The first player to align 3 pieces in a row wins, so when you wish to move a piece try to remember what is under it!” The displayed board preserves this practical difficulty, only showing the topmost piece in each square, indicating its owner (X or O) and size (1, 2, or 3). In the example below, O responds to X’s opening move by covering the smaller X1 with their “medium” sized piece O2:

Enter rules (num_sizes, num_per_size, allow_move): 3 2 1

Loading from gobblet_3_2_1.dat

| |

| |

0| 1| 2

------|------|------

| |

| |

3| 4| 5

------|------|------

| |

| |

6| 7| 8

Player 1, enter move (-size | start, end), or (0, 0) for best move, or (-1, -1) to undo move: -1 4

| |

| |

0| 1| 2

------|------|------

| |

| X1 |

3| 4| 5

------|------|------

| |

| |

6| 7| 8

Player 2, enter move (-size | start, end), or (0, 0) for best move, or (-1, -1) to undo move: -2 4

| |

| |

0| 1| 2

------|------|------

| |

| O2 |

3| 4| 5

------|------|------

| |

| |

6| 7| 8

Optimal strategy

Gobblet is a win for the first player in 13 moves (plies), meaning that optimal strategy does indeed involve moving some pieces around the board. X’s first move can be anything but a medium piece (“X2”); the only caveat is that X3 to an edge, or X1 to the center or corner, allows the second player O to delay losing for 15 moves instead of 13.

(There is a Racket version of the game, with a much better user interface, that appears to implement optimal first player strategy in its “smart mode,” but seemingly only for its specific choice of principal variation, beginning with X3 to the center. For example, manually playing X1 to the center instead, then using this implementation’s optimal strategy for the second player– enter 0 0 for the move at any point to see a best move– against subsequent Racket auto-play moves for the first player, results in a win for O.)

If we prohibit moving pieces already played– that is, rule variant (3,2,0), with the next largest space complexity of 148,599,441 states– then the game is a draw, where X’s first move must be either X3 to the center or X1 anywhere.

The only other rule variants that are not a draw are (2,3,0) and (2,3,1)– that is, still six pieces per player, but in two sizes of three pieces each instead of the other way around– both of which are also first player wins, in 9 and 11 moves, respectively.

Game state bitboards

This was a really fun programming exercise. If there is any moral to this story, I think it is to remember that the standard library was written for everyone’s problems, not for your problem.

We use a std::uint64_t to represent each game state as a 54-bit bitboard: each of the 3×3=9 squares requires 6 bits, 2 bits for each piece size (332211), indicating whether there is a piece of that size in the corresponding square:

- 00 indicates no piece of that size.

- 01 indicates a piece of the player to move.

- 10 indicates the opponent’s piece.

Gobblet is symmetric, allowing us to reduce the size of the state space by “swapping seats” after each move, so that the state is always interpreted with respect to the player to move next. We can further reduce the number of states by roughly another factor of 8 with the usual trick of rotating and reflecting the board, and using the minimum value among all resulting states.

I think I got nerd-sniped here a bit: rotating the board was awkward using this bitboard encoding, and renumbering the squares to make rotations simpler just made reflections even worse. I finally settled instead on alternating between two reflections– one about the middle row, the other about the off-diagonal– chosen to minimize the number of “shift-and-mask” bitwise operations in each.

As a first ding on the C++ standard library, I found it interesting that the final implementation, shown below, is different at all, let alone noticeably faster, than what I initially started with using the clearer min_s = std::min(min_s, s):

State canonical(State s)

{

State min_s = s;

s = flipud(s); min_s = s < min_s ? s : min_s;

s = antitranspose(s); min_s = s < min_s ? s : min_s;

s = flipud(s); min_s = s < min_s ? s : min_s;

s = antitranspose(s); min_s = s < min_s ? s : min_s;

s = flipud(s); min_s = s < min_s ? s : min_s;

s = antitranspose(s); min_s = s < min_s ? s : min_s;

s = flipud(s); min_s = s < min_s ? s : min_s;

return min_s;

}

Chris Wellons’s MSI hash map

Retrograde analysis involves mapping every possible game state to its corresponding win/loss value (more on this below). To do this, I started out with a std::map (or std::unordered_map if you like), starting development with smaller versions of the game that executed more quickly, such as rule variant (2,2,1), with only 252,238 states, then on to variant (2,3,0), with 1,964,786 states.

But with this approach, available memory was dwindling fast. At the time, before I knew Gobblet’s state space complexity exactly, a reasonable back-of-the-envelope estimate was

or well over 300 million states. I just couldn’t afford the overhead of red-black tree pointers, node colors, or hash bucket linked list pointers in the standard library’s map implementations.

Enter the “mask, step, index” (MSI) hash map described by Chris Wellons [1]. This worked like a charm, being not just lean but fast. Here is the entire implementation, in place of std::map, squeezing all 2.5 GB worth of game states into a 4 GB array:

const int HASH_EXP = 29;

const std::size_t HASH_MASK = (1ull << HASH_EXP) - 1;

const State STATE_EMPTY = 0x3; // 0x0 is the (valid) initial board state

const State STATE_MASK = (1ull << 54) - 1;

std::vector<State> hash_map(HASH_MASK + 1, STATE_EMPTY);

// Return pointer to hash map entry for given game state.

State* lookup(State s)

{

std::uint64_t h = hash(s);

std::size_t step = (h >> (64 - HASH_EXP)) | 1;

for (std::size_t i = h;;)

{

i = (i + step) & HASH_MASK;

if (hash_map[i] == STATE_EMPTY || (hash_map[i] & STATE_MASK) == s)

{

return &hash_map[i];

}

}

}

That’s it. Or almost– we also need the hash(s) function used to compute the starting index and step size when skipping past collisions. I ended up using SplitMix64, which I was expecting to be an overly big hammer, but turned out to result in significantly faster execution than all of the simpler hashes that I tried. I wonder if this is due to the rather heavy loading (we’re using over 63.5% of the allocated space)?

At this point, we really have just a hash table. We need a hash map, from the 54-bit state as the key, to the win-or-lose value of the game played optimally from that point. This is where the STATE_MASK above comes in: we will pack the not-yet-computed value for the 54-bit state key into the remaining upper 10 bits of the std::uint64_t.

Retrograde analysis

Which brings us, finally, to the algorithm for computing the values of all possible game states, implicitly also computing the optimal strategy from any state (by choosing the move that leads to the highest value state). To do this, we map each game state to an ordered pair (value, moves), where value indicates +1 for a win, -1 for a loss, or 0 for a draw for the player to move, assuming optimal play from that point; and moves indicates the “depth to win,” or number of moves (plies) from that point to the end of the game assuming optimal play.

We “solve” for these values using retrograde analysis, starting with the terminal win or loss states that are initialized with their endgame value and moves=0, and working backward from there, identifying immediately previous game states and propagating solved win/loss values, incrementing moves accordingly.

In the process, I ended up using a slightly weird encoding of (value, moves) into the upper 10 bits of the std::uint64_t for each game state. Consider the function to compute the best move from a given game state:

Move best_move(State s)

{

Move best{};

State max_next = 0;

for (auto& m : get_moves(s))

{

State next = *lookup(canonical(swap(move(s, m))));

if (next > max_next)

{

max_next = next;

best = m;

}

}

return best;

}

In other words, optimal strategy is simply to choose the move leading to the state with the largest possible std::uint64_t value, which is in turn dominated by the (value, moves) pair encoded in the upper 10 bits. The value is in the highest 2 bits, and the moves are in the lower 8 bits… but negated (and offset by one) using two’s complement for losses. That is:

- 0100000000 indicates (+1, 0), an immediate win for the player to move.

- 0100000001 indicates (+1, 1), the player to move can win in 1 move.

- 0100000010 indicates (+1, 2), the player to move can win in 2 moves.

- …

- 10######## indicates (0, #), game is a draw.

- …

- 1111111101 indicates (-1, 2), the player to move will lose in 2 moves.

- 1111111110 indicates (-1, 1), the player to move will lose in 1 move.

- 1111111111 indicates (-1, 0), an immediate loss for the player to move.

If we are computing the best move by choosing from among these values as next possible states, the “player to move” is our opponent. Thus, the ideal move would lead to the largest value with high order bits equal to 1111111111, an immediate loss for our opponent– or barring that, a loss for our opponent in the fewest possible moves. If we can’t guarantee a win, then we want to force a draw. And if we can’t force a draw, then our opponent has a winning strategy, but we want to delay the win for as many moves as possible.

Reference:

- Wellons, C., The quick and practical “MSI” hash table [HTML]

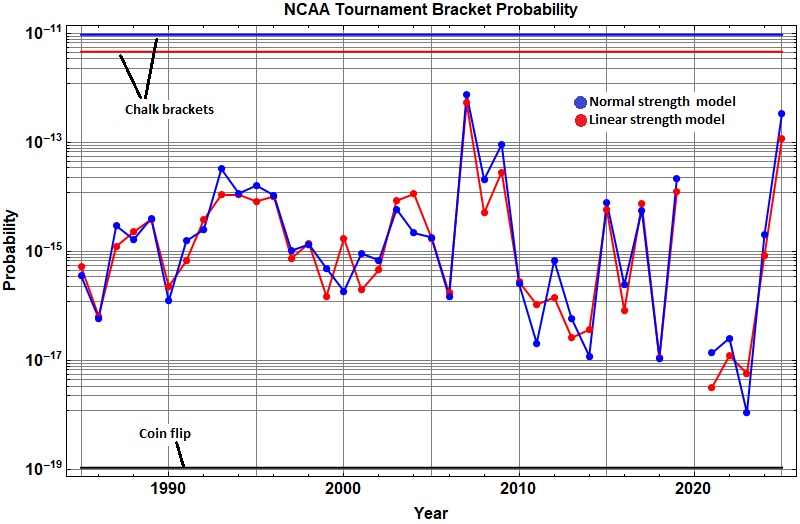

, the often-quoted “one in 9.2 quintillion” probability of correctly guessing all 63 games by simply flipping a coin. The blue and red lines at the top are probabilities of chalk brackets, using two different models of individual game probabilities as a function of difference in “strength” of each team. As discussed in more detail here, blue indicates a strength following a normal distribution density, and red indicates a simpler linear strength function.