Introduction

Let’s play a game: I will repeatedly flip a fair coin, showing you the result of each flip, until you say to stop, at which point you win an amount equal to the fraction of observed flips that were heads. What is your strategy for deciding when to stop?

This weekend 6/28 is “Two-Pi Day” (or Tau Day, if you like). Celebration in the classroom can take many forms: for example, we can write a program to calculate an approximation of the value of using Monte Carlo simulation. Or we can find some mathematical problem where the occurrence of

is surprising or unexpected. I think the game described above is an interesting case where we can do both at the same time.

Throwing darts

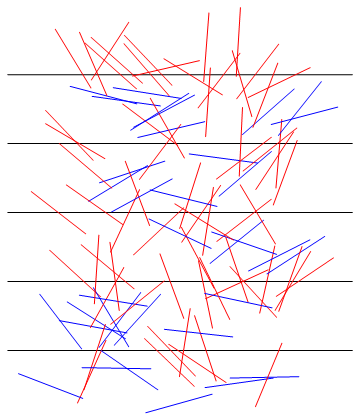

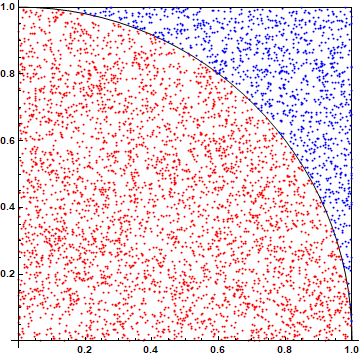

The most common approach to estimating using Monte Carlo simulation involves selecting random points uniformly distributed in a square; imagine throwing darts at a square dartboard, as shown in the figure below.

Let the indicator random variable equal 1 if a dart lands within the inscribed circle– or within the inscribed 90-degree circular sector shown above, which has the same area– otherwise

. Then

is the ratio of areas

, so that we can estimate

by Monte Carlo estimation of

, where

. In Python:

import random

def dart():

x, y = random.random(), random.random()

return 4 * int(x * x + y * y < 1)

n = 1000000

print(sum(dart() for _ in range(n)) / n)

Note how simple the simulation of dart() is, just a couple of random numbers and a few arithmetic operations. Indeed, this might seem perhaps too simple: we don’t really need the randomness of Monte Carlo simulation here, and could instead integrate over an evenly spaced grid of thrown darts. And despite the simple arithmetic implementation of the simulation, the “pi-ness” of the process– there is a circle clearly visible in the diagram– is rather unsurprising.

Throwing noodles

Another common approach to estimating is Buffon’s noodle. Imagine throwing uncooked straight spaghetti noodles, each one foot long, onto a floor marked with parallel lines spaced one foot apart, with the random variable

equal to the number of times a particular noodle crosses a line on the floor.

It turns out that . (Note this is an expected value, not just a probability, because it works even if we cook the noodles, so that

can take on non-negative integer values beyond just 0 and 1.) We can similarly estimate

by Monte Carlo simulation of this noodle-throwing process, appropriately transforming the resulting estimate of

. Again in Python:

import random

import math

def noodle():

y = random.random()

angle = math.pi * random.random()

angle_0 = math.asin(y)

return int(angle_0 < angle < math.pi - angle_0)

n = 1000000

print(2 / (sum(noodle() for _ in range(n)) / n))

As a method of computing an approximation of , this feels a bit circular (!) to me. We are using

math.pi, the very value we are trying to estimate, in the implementation of the simulation! (I’m also skipping over the error analysis, which is more complicated than for the other simulation approaches discussed here, due to the reciprocal in the estimate.)

Flipping coins

Coming back now to the Chow-Robbins coin-flipping game, unfortunately fully specifying an optimal strategy with maximum possible expected return (known to be approximately 0.793) remains a complex open problem. But let’s consider a strategy that, although it is suboptimal, is very simple to describe, still performs reasonably well, and turns out to have interesting behavior relevant to this discussion: let’s suppose that we stop when the number of heads first exceeds the number of tails

, so that we win

.

Then it’s a nice exercise to show that our expected return using this strategy is

So we can estimate in the same way we did with the dartboard. In Python:

import random

def coin_flips():

heads = 0

flips = 0

while heads <= flips - heads:

heads += random.getrandbits(1)

flips += 1

return 4 * heads / flips

total = 0

n = 0

while True:

total += coin_flips()

n += 1

print(f'Estimate after {n} iterations = {total / n}')

This works… sort of. There are a lot of interesting things happening here. First, the variance of our estimate is less than one-third that for a dart throw, so that we should need correspondingly fewer Monte Carlo iterations to achieve comparable accuracy.

Also, each individual coin flip is a lot less computationally expensive than each dart throw. The latter requires 128 random bits to generate two floating-point values for the position of a dart, while a coin flip is a single random bit. The ability and relative speed of extraction of a single random bit varies from language to language and library to library. For example, even sticking to Python, it’s interesting to compare the above

random.getrandbits(1) with the theoretically equivalent but much slower random.randint(0, 1).

But these potential benefits are dwarfed by the overall cost of each Monte Carlo iteration simulating a single playout of the game, which generally involves more than just a single coin flip. You may notice that the output from the above code scrolls by with not-really-blazing speed… but periodically there is a significant pause, sometimes for a long time.

The problem is that the expected number of coin flips– that is, the average duration of a single Monte Carlo iteration– is infinite. In other words, even if we know that we need, say, roughly 12 million iterations of the game to achieve 3 digits of accuracy in our estimate of , we can’t even estimate how long those 12 million iterations will take to execute.

All is not entirely lost, however. Note that although the expected duration of a game is infinite, the program above is never in any danger of being well and truly hung in the inner loop. That is, even during one of those long pauses, we are guaranteed— with probability 1– to observe eventual completion of the current game and subsequent starting of the next new game.

We are right on the razor’s edge of hanging, though. That is, suppose that we play the game with a biased coin, so that the probability of heads is . Then there is a positive probability

that a game will never end, with a meandering deficit of heads that is never overcome. (Which means that the endless loop through games above is guaranteed, with probability 1, to eventually encounter and get stuck on such a never-ending game.)

On the other hand, if , then the situation improves. For example, let’s suppose that we play the game with a biased coin with

. Then the expected return from this game is

, so we can still estimate

by playing this modified game:

import random

import math

def coin_flips():

heads = 0

flips = 0

while heads <= flips - heads:

heads += int(random.getrandbits(2) != 0)

flips += 1

return 2 * math.sqrt(3) * heads / flips

n = 120000

print(sum(coin_flips() for _ in range(n)) / n)

Better yet, not only is the expected number of coin flips (i.e., random bits) per game finite, it’s just 2 flips on average per game, so that each game is relatively fast to simulate. Better still, the variance is now less than 12% of the dartboard’s, so that we don’t even have to play as many games to achieve the same accuracy.