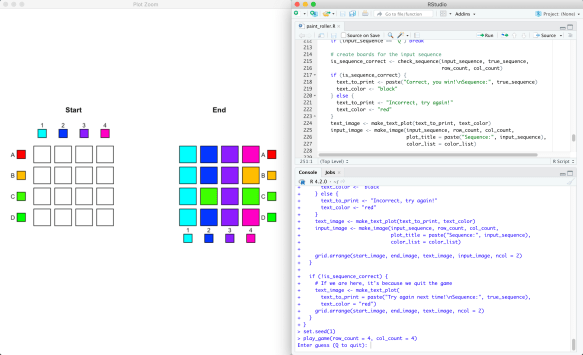

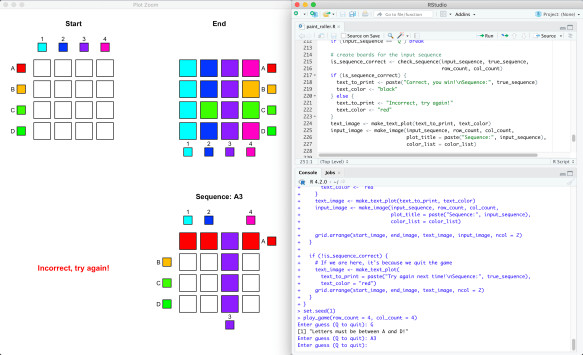

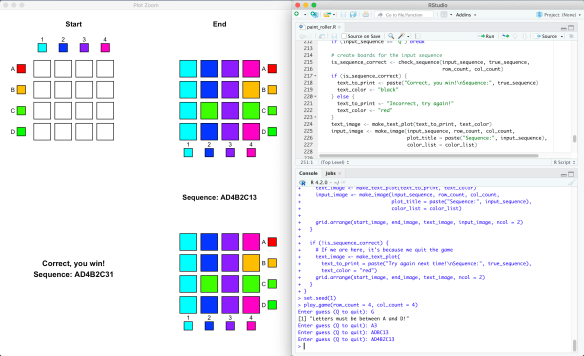

The Ford circles, introduced by L. R. Ford in his 1938 paper (Reference 1), are a family of circles that are all tangent to the $x$-axis at rational points. For each rational number $p/q$ with $\gcd(p, q) = 1$, the Ford circle $C(p/q)$ is defined as the circle centered at $\left(\frac{p}{q}, \frac{1}{2q^2}\right)$ with radius $\frac{1}{2q^2}$.
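As a small illustration of the definition, here is a minimal Python sketch that computes the center and radius of the Ford circle at a rational $p/q$, namely center $\left(\frac{p}{q}, \frac{1}{2q^2}\right)$ and radius $\frac{1}{2q^2}$. The function name `ford_circle` is my own choice, and exact `Fraction` arithmetic is used so nothing is lost to rounding.

```python
from fractions import Fraction

def ford_circle(p: int, q: int):
    """Return (center_x, center_y, radius) of the Ford circle at p/q as exact Fractions."""
    frac = Fraction(p, q)  # Fraction reduces p/q to lowest terms automatically
    radius = Fraction(1, 2 * frac.denominator ** 2)
    # The circle sits on the x-axis, so its center height equals its radius.
    return frac, radius, radius

for p, q in [(0, 1), (1, 2), (2, 3), (1, 1)]:
    x, y, r = ford_circle(p, q)
    print(f"C({p}/{q}): center = ({x}, {y}), radius = {r}")
```

Because the input is reduced first, `ford_circle(2, 4)` and `ford_circle(1, 2)` describe the same circle.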

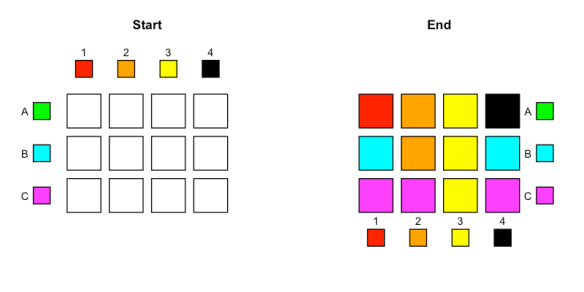

Plotting these circles reveals how special they are:

No two of the circles intersect at two distinct points: any two Ford circles are either disjoint or tangent. More interestingly, we can characterize exactly which circles are tangent to each other!

Theorem (Section 1 of Ford (1938)). Two Ford circles $C(p/q)$ and $C(r/s)$ are tangent to each other if and only if $|ps - qr| = 1$.
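Here is a sketch of the standard computation behind the tangency condition (my own write-up, not quoted from Ford's paper). Take two distinct fractions in lowest terms, $p/q$ and $r/s$, whose Ford circles have radii $\frac{1}{2q^2}$ and $\frac{1}{2s^2}$, and let $d$ be the distance between the two centers. Comparing $d^2$ with the square of the sum of the radii gives

$$
\begin{aligned}
d^2 - \left(\frac{1}{2q^2} + \frac{1}{2s^2}\right)^2
&= \left(\frac{p}{q} - \frac{r}{s}\right)^2 + \left(\frac{1}{2q^2} - \frac{1}{2s^2}\right)^2 - \left(\frac{1}{2q^2} + \frac{1}{2s^2}\right)^2 \\
&= \frac{(ps - qr)^2}{q^2 s^2} - \frac{1}{q^2 s^2} \\
&= \frac{(ps - qr)^2 - 1}{q^2 s^2}.
\end{aligned}
$$

Since $ps - qr$ is a nonzero integer, the right-hand side is never negative. It is zero (the circles touch) exactly when $|ps - qr| = 1$, and positive (the circles are disjoint) otherwise, which also explains why two Ford circles never cross at two points.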

The Ford circles are related to many different ideas in mathematics; I’ll just mention some of the properties I found interesting.

Theorem (Theorem 3 & 4 of Ford (1938)). If $C(P/Q)$ is tangent to $C(p/q)$, then all the circles tangent to $C(p/q)$ are $C(P_n/Q_n)$, where $\frac{P_n}{Q_n} = \frac{P + np}{Q + nq}$ and $n$ takes on all integral values. In particular, across all possible values of $n$, exactly two have denominators numerically smaller than $q$ (for $q > 1$), and these correspond to the two tangent circles that are larger than $C(p/q)$.
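To make the theorem concrete, the sketch below generates the tangent neighbours of one circle from the formula $(P + np)/(Q + nq)$ and picks out the two larger ones. The helper names `are_tangent` and `tangent_neighbours` and the example fractions $2/5$ and $1/2$ are my own illustrative choices; the tangency test used is the criterion $|ps - qr| = 1$.

```python
from fractions import Fraction

def are_tangent(a: Fraction, b: Fraction) -> bool:
    """Tangency test |ps - qr| = 1 for two fractions in lowest terms."""
    return abs(a.numerator * b.denominator - a.denominator * b.numerator) == 1

def tangent_neighbours(p, q, P, Q, n_values):
    """Fractions (P + n*p)/(Q + n*q) for the given n, assuming C(P/Q) touches C(p/q)."""
    neighbours = []
    for n in n_values:
        num, den = P + n * p, Q + n * q
        if den == 0:
            continue  # skipped in this sketch: no fraction has denominator 0
        neighbours.append(Fraction(num, den))
    return neighbours

# Illustrative choice: C(1/2) is tangent to C(2/5) because |1*5 - 2*2| = 1.
p, q, P, Q = 2, 5, 1, 2
neighbours = tangent_neighbours(p, q, P, Q, range(-5, 6))
assert all(are_tangent(Fraction(p, q), x) for x in neighbours)

# Neighbours with a smaller denominator have a larger radius.
larger = sorted(x for x in neighbours if x.denominator < q)
print(larger)  # [Fraction(1, 3), Fraction(1, 2)]
```

In this example the two larger neighbours, $1/3$ and $1/2$, sit on either side of $2/5$.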

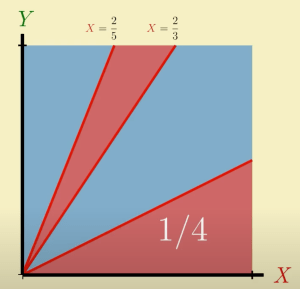

Consider the inequality

$$\left| \omega - \frac{p}{q} \right| < \frac{k}{q^2}.$$

Dirichlet showed that:

- If $\omega$ is irrational, then the inequality is satisfied by infinitely many fractions $p/q$ for $k = 1$, and

- If $\omega$ is rational, then the inequality is satisfied by only finitely many fractions, no matter how large $k$ is.

Ford used Ford circles to improve the result for irrational $\omega$:

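Theorem (Theorem 5 of Ford (1938)). If $k \geq 1/\sqrt{5}$, then for each irrational $\omega$ there are infinitely many fractions $p/q$ that satisfy $\left| \omega - \frac{p}{q} \right| < \frac{k}{q^2}$. However, if $k < 1/\sqrt{5}$, then there are irrationals $\omega$ such that $\left| \omega - \frac{p}{q} \right| < \frac{k}{q^2}$ holds for only finitely many fractions.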
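The threshold $1/\sqrt{5}$ can be explored numerically. The sketch below assumes the inequality has the form $|\omega - p/q| < k/q^2$ and uses the golden ratio $\omega = (1 + \sqrt{5})/2$, the classical extremal example for this bound (my choice of example, not taken from the post). It counts the reduced fractions with $q \le$ `max_q` that satisfy the inequality, using 50-digit `Decimal` arithmetic. The name `count_good_fractions` is hypothetical.

```python
from decimal import Decimal, getcontext
from math import gcd

getcontext().prec = 50               # 50 significant digits, ample for q <= 2000
SQRT5 = Decimal(5).sqrt()
PHI = (1 + SQRT5) / 2                # golden ratio

def count_good_fractions(k: Decimal, max_q: int) -> int:
    """Count reduced p/q with q <= max_q and |PHI - p/q| < k / q^2.

    Only the numerator nearest to q*PHI is tried, which is enough when k < 1/2.
    """
    count = 0
    for q in range(1, max_q + 1):
        p = int((q * PHI).to_integral_value())   # nearest integer to q*PHI
        if gcd(p, q) != 1:
            continue
        if abs(PHI - Decimal(p) / q) * q * q < k:
            count += 1
    return count

for max_q in (200, 2000):
    at_threshold = count_good_fractions(1 / SQRT5, max_q)
    below_threshold = count_good_fractions(Decimal("0.44"), max_q)
    print(max_q, at_threshold, below_threshold)
```

At $k = 1/\sqrt{5}$ the count keeps growing as `max_q` increases, while for the slightly smaller $k = 0.44$ it stops growing. A finite search like this only illustrates the theorem and does not prove it.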

Ford circles are intimately connected with the Farey sequence: see References 1 & 3 for example.
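One concrete form of this connection is that consecutive terms $a/b < c/d$ of a Farey sequence satisfy $bc - ad = 1$, so their Ford circles are tangent. The sketch below builds the Farey sequence $F_n$ with the standard next-term recurrence and checks that property. The function names are my own.

```python
from fractions import Fraction

def farey_sequence(n: int):
    """Farey sequence F_n: reduced fractions in [0, 1] with denominator <= n, ascending."""
    a, b, c, d = 0, 1, 1, n
    seq = [Fraction(a, b)]
    while c <= n:
        k = (n + b) // d
        # Standard recurrence: the next term follows from the previous two.
        a, b, c, d = c, d, k * c - a, k * d - b
        seq.append(Fraction(a, b))
    return seq

def circles_tangent(x: Fraction, y: Fraction) -> bool:
    """Ford circles at x and y are tangent iff |ps - qr| = 1."""
    return abs(x.numerator * y.denominator - x.denominator * y.numerator) == 1

f5 = farey_sequence(5)
print(", ".join(str(x) for x in f5))
print(all(circles_tangent(x, y) for x, y in zip(f5, f5[1:])))
```

Each neighbouring pair in $F_5$ corresponds to a pair of touching Ford circles, so walking along the sequence traces a chain of tangent circles from $0/1$ to $1/1$.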

Finally, the sum of the areas of the Ford circles involves the Riemann zeta function! The result, with a short proof, is available on Wikipedia (Reference 2).

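Theorem (Wikipedia). Let $A$ be the total area of the Ford circles $\{C(p/q) : 0 < p/q \leq 1\}$. Then $A = \frac{\pi}{4} \frac{\zeta(3)}{\zeta(4)} = \frac{45}{2} \frac{\zeta(3)}{\pi^3} \approx 0.872284$.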
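As a numerical sanity check, the sketch below sums the areas of the Ford circles with $0 < p/q \le 1$ and denominator at most `max_q`, then compares the result with the closed form $\frac{45}{2} \frac{\zeta(3)}{\pi^3}$. It rests on a few assumptions: each circle has radius $\frac{1}{2q^2}$, there are $\varphi(q)$ circles for each denominator $q$ (Euler's totient), and $\zeta(3)$ is approximated by a partial sum. All function names are hypothetical.

```python
from math import gcd, pi

def totient(q: int) -> int:
    """Euler's totient by direct counting (fine for small q)."""
    return sum(1 for p in range(1, q + 1) if gcd(p, q) == 1)

def ford_area_partial(max_q: int) -> float:
    """Total area of the Ford circles C(p/q), 0 < p/q <= 1, with q <= max_q."""
    return sum(totient(q) * pi * (1 / (2 * q * q)) ** 2 for q in range(1, max_q + 1))

def ford_area_closed_form(terms: int = 100_000) -> float:
    """45 * zeta(3) / (2 * pi^3), with zeta(3) approximated by a partial sum."""
    zeta3 = sum(1 / n ** 3 for n in range(1, terms + 1))
    return 45 * zeta3 / (2 * pi ** 3)

print(ford_area_partial(300))
print(ford_area_closed_form())
```

The partial sum approaches the closed form from below, because every omitted circle adds a small positive area.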

References:

1. L. R. Ford (1938). Fractions.

2. Wikipedia. Ford circle.

3. ThatsMaths. Ford Circles & Farey Series.