Conversation

Krovatkin

left a comment

Krovatkin

left a comment

There was a problem hiding this comment.

a few qqs to understand this PR a bit better.

| lr = 0.02 | ||

| momentum = 0.9 | ||

| weight_decay = 1e-4 | ||

| params = [p for p in self.model.parameters() if p.requires_grad] |

There was a problem hiding this comment.

out of curiosity, is this some kind of an optimization? wouldn't autograd ignore parameters don't require gradients?

There was a problem hiding this comment.

@Krovatkin I borrowed these default values from torchvision reference code: https://github.com/pytorch/vision/blob/main/references/detection/train.py#L77. These parameters seems to be default to torchvision maskrcnn training.

There was a problem hiding this comment.

I think we don't need this anymore, including in torchvision.

There was a problem hiding this comment.

Test failed when removing these parameters(https://app.circleci.com/pipelines/github/pytorch/benchmark/2710/workflows/26c92ded-69b3-4cdc-8c35-3cc4ab5f8e13/jobs/2793), ValueError: parameter group didn't specify a value of required optimization parameter lr so they are still needed.

|

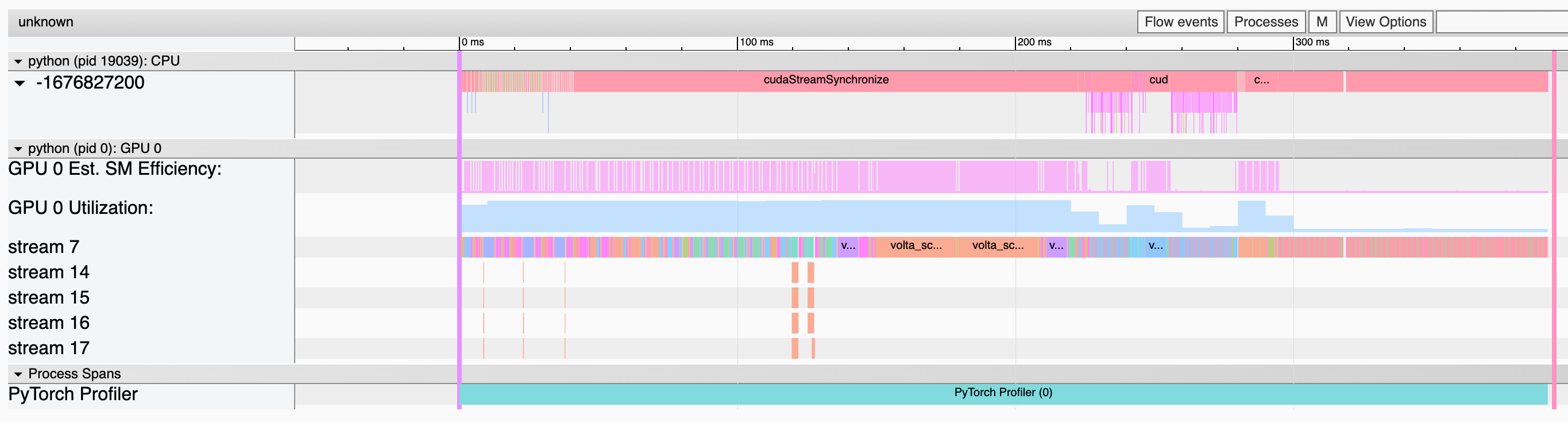

Hi @xuzhao9 I believe the slowdown during inference of mask r-cnn is due to this function Indeed, we perform many small operations in a for loop, which is not ideal for maximizing GPU utilization. I think we can further optimize this function at the Python-level by leveraging a single call to Also, ccing @datumbox as this could be a good task to optimize the torchvision function |

Question for model authors: This still doesnt' look great. Is this how the model is used in nature? What can be done here to improve utilization? Is this from a lack of prefetch from the second model? Its no good to have that idle section at the end. [edit: the whole inference looks right on the edge of underutilized, do we need a different way to run this model?] |

Right, sending off all these tiny shaders against independent data. Thats a perf error, causes dispatch to dominate. Can maybe be patched up by using cuda graphs, but really not good. Is this really how the model runs in nature? |

|

@eircfb I believe I've answered your questions in my answer just before, let me know if you have further questions. It's the post-processing steps that are the culprit in here, and they can definitely be optimized (without having to resort to custom CUDA kernels) This is normally how we use the model, yes. |

Right, we posted at same time. Isn't that loop of tiny dispatches just for the first section? What causes the low utilization towards the end of the trace? |

|

@eircfb I believe the section I pointed out should correspond to the last part of the trace (although to be 100% sure I would need to check the full trace with further info, @xuzhao9 can you send it to me?) For the first section with slowdown, I would need to check more carefully but my first guess would be that it comes from this section https://github.com/pytorch/vision/blob/0fa747eea4569f349fb0dacab44604b7b11c1a74/torchvision/models/detection/rpn.py#L253-L274 Maybe the slowdown could be attributed to this function being called instead of this one, but again, I would need to see the full trace to be sure |

Here is the full trace of eval (bs==4, with prefetch): pytorch-benchmark-dev-0_1709.1636590532626.pt.trace.json.zip |

407ab50 to

deadddc

Compare

|

@xuzhao9 has imported this pull request. If you are a Facebook employee, you can view this diff on Phabricator. |

|

@xuzhao9 after looking at the full trace, my comments from before seems to be accurate. The first slow-down is due to this part of the implementation. I have a gut-feeling that this might be due to bad hyperparameters in here, so if you could try always dispatching to this codepath it would give hints if we can easily fix this. For the second (and biggest slowdown), the problem is what I mentioned before on the mask pasting in images, and would require re-writing the function in a better way |

Thank you @fmassa! I think your explanations make sense (though I am not an expert). I think the code is in decent shape now and we are confident it represents common use cases. We can use it as a baseline to help further improve the model quality. Please help review and stamp it so that we can proceed on developing dynamic shapes. Thanks! |

fmassa

left a comment

fmassa

left a comment

There was a problem hiding this comment.

Thanks!

I've made a few comments that could simplify the implementation (by removing unused functions), and also to mention that both torchscript as well as CPU are supported.

Otherwise the rest LGTM so I'm approving this as it can be merged as is

9d43a62 to

69d3dcb

Compare

|

@xuzhao9 has imported this pull request. If you are a Facebook employee, you can view this diff on Phabricator. |

| if not self.device == "cuda": | ||

| return NotImplementedError("CPU is not supported by this model") |

There was a problem hiding this comment.

For my understanding, did you face any errors when running the code on the CPU?

There was a problem hiding this comment.

The CPU test works fine. But the JIT run seems to be failed: https://app.circleci.com/pipelines/github/pytorch/benchmark/2729/workflows/474cf2b6-f2d5-4a13-b08d-7f634886d475/jobs/2812.

There was a problem hiding this comment.

Note that during scripting, the model always returns (Losses, Detections) (as pointed out in the error message).

So you would need to change the code in the training to always return losses, detection = model(inputs), and go from there.

89ef243 to

15fb801

Compare

|

@xuzhao9 has imported this pull request. If you are a Facebook employee, you can view this diff on Phabricator. |

I re-ran the profiling with the code changes from this PR and it indeed it improves the overall figure (visible in the middle part where we are running nms). |

Add maskrcnn model from torchvision.

This is the first step towards adding dynamic shapes support for maskrcnn.