API: Optimize np.unique for integer arrays; add kind parameter#21843

API: Optimize np.unique for integer arrays; add kind parameter#21843MilesCranmer wants to merge 15 commits intonumpy:mainfrom

kind parameter#21843Conversation

adeak

left a comment

adeak

left a comment

There was a problem hiding this comment.

Thanks for the ping.

(Though I am not sure if this is the best way to do it. Comments appreciated!)

np.add.at is definitely how I'd approach this too.

Some early minor remarks below.

|

You could do normal slicing, except on a 2D array, then sum along one axis. Of course this would be N^2 memory usage... |

|

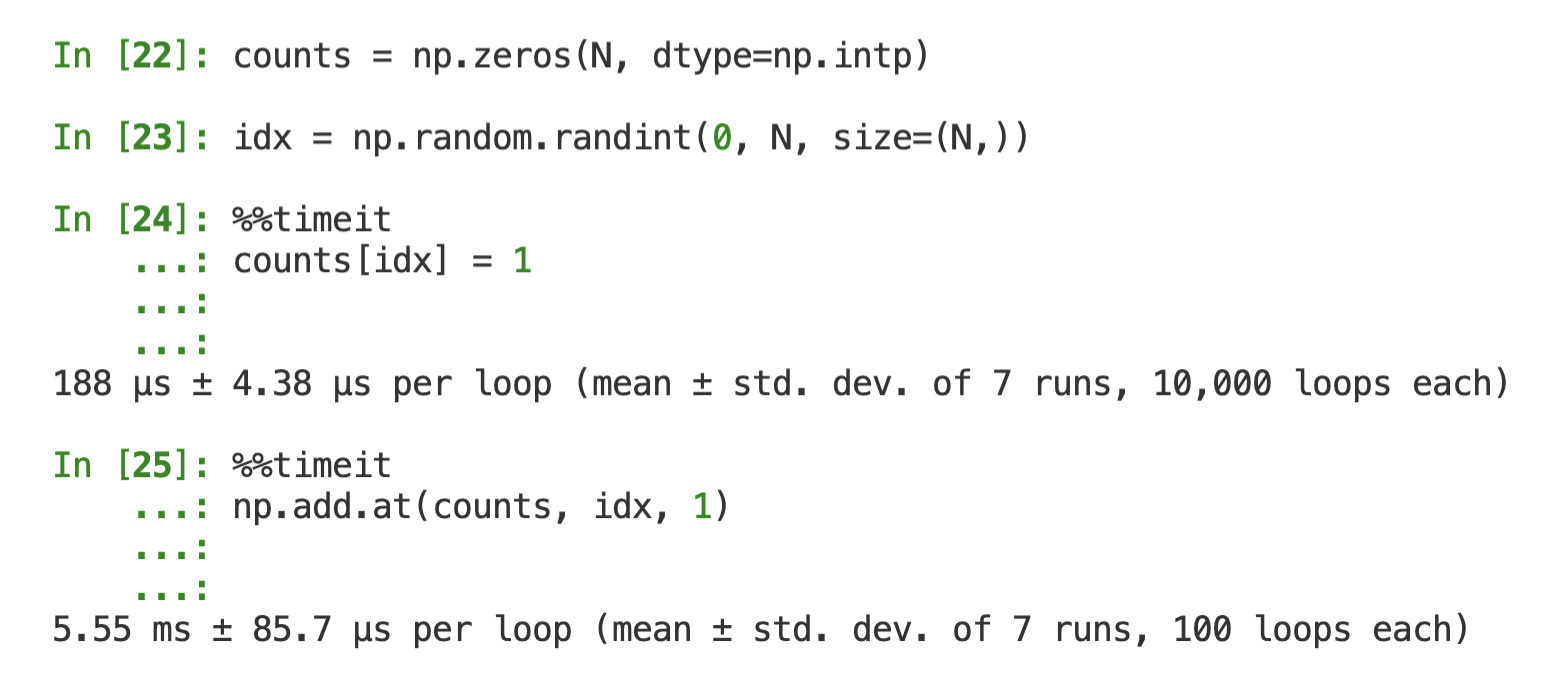

I don't know about the implementations, but I assume the "unbuffered" in "Performs unbuffered in place operation" might be relevant. Although you already lose a factor of 2 if you use Side note: it's better to post code as text rather than images, because people will be able to copy-paste them, and people using screen readers will also be able to see your code and results. Here's more or less your code with N = 100_000

counts = np.zeros(N, dtype=np.intp)

idx = np.random.randint(0, N, size=(N,))

>>> %timeit counts[idx] = 1

... %timeit counts[idx] += 1

... %timeit np.add.at(counts, idx, 1)

257 µs ± 1.89 µs per loop (mean ± std. dev. of 7 runs, 1,000 loops each)

486 µs ± 9.57 µs per loop (mean ± std. dev. of 7 runs, 1,000 loops each)

6.36 ms ± 594 µs per loop (mean ± std. dev. of 7 runs, 100 loops each) |

|

Right, will do that! Regarding the In [7]: N = 100_000

...: counts = np.zeros(N, dtype=np.intp)

...: idx = np.random.randint(0, 2, size=(N,))

In [8]: counts[idx] += 1

In [9]: np.max(counts)

Out[9]: 1So I don't think it could be used here unfortunately. |

Indeed. My point was just that when you compare the performance of |

|

Ah I see, sorry. I guess my point is that I think even 12x or 25x seems extremely large. Why is it that slow? I tested this. Here's a barebones implementation in C: https://gist.github.com/MilesCranmer/d262f468d570df4b90c8769ebdcce213 If I call this with About 64 ms. With counting turned off, i.e., About 38 ms. So there is only <2x difference... However, here is the python attempt: In [2]: x = np.random.randint(0, 10_000_000, size=(10_000_000,))

In [3]: counts = np.zeros(10_000_000, dtype=np.intp)

In [10]: %%timeit

...: counts[x] = 1

38.3 ms ± 83.8 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)

In [11]: counts[:] = 0

In [12]: %%timeit

...: np.add.at(counts, x, 1)

1.14 s ± 7.21 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)So the counting one is much much slower. Maybe np.add.at is written in pure Python or something? Edit: Incorrectly passed a bool so times were the same if statement. Now fixed - about a 2x difference overall. Edit 2: Moved C example to a gist |

|

Good news: I sped up the new method for Here are the updated speeds, showing In [2]: x = np.random.randint(0, 10_000_000, size=(10_000_000,))

In [3]: %%timeit

...: np.unique(x, kind='sort')

828 ms ± 5.02 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)

In [4]: %%timeit

...: np.unique(x, kind='table')

150 ms ± 6.99 ms per loop (mean ± std. dev. of 7 runs, 10 loops each)

In [5]: %%timeit

...: np.unique(x, kind='sort', return_counts=True)

876 ms ± 5.7 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)

In [6]: %%timeit

...: np.unique(x, kind='table', return_counts=True)

329 ms ± 13.3 ms per loop (mean ± std. dev. of 7 runs, 1 loop each) |

|

Okay I think the core API should be ready for a review. I checked through the unit-tests and many of them are for non-integral types, so it won't be as easy to |

|

Here are the results of some newly added benchmarks. This shows that one gets >12x speedups for large integer arrays, including with The only regressions look to be with very tiny arrays which are on the order of μs in difference. I think that is entirely due to the check for what method to use. If those regressions are not acceptable I could switch off the new method for small arrays, before the computation is done using ranges. before after ratio bench_lib.Unique.<test>(<array_size>, <array_range>)

[54c52f13] [158f25b3]

<v1.23.0^0> <unique-speed>

+ 7.69±0.03s 489±20ms 0.06 bench_lib.Unique.time_unique(100000000, 1778279)

+ 5.81±0.03s 447±10ms 0.08 bench_lib.Unique.time_unique(100000000, 31622)

+ 101±0.7ms 8.09±0.7ms 0.08 bench_lib.Unique.time_unique(1778279, 31622)

+ 101±1ms 9.18±1ms 0.09 bench_lib.Unique.time_unique_with_counts(1778279, 31622)

+ 1.22±0.01ms 111±40μs 0.09 bench_lib.Unique.time_unique(31622, 562)

+ 70.6±1ms 7.84±0.9ms 0.11 bench_lib.Unique.time_unique(1778279, 562)

+ 3.98±0.04s 442±10ms 0.11 bench_lib.Unique.time_unique(100000000, 562)

+ 7.74±0.06s 870±200ms 0.11 bench_lib.Unique.time_unique_with_counts(100000000, 1778279)

+ 69.6±0.4ms 8.89±0.7ms 0.13 bench_lib.Unique.time_unique_with_counts(1778279, 562)

+ 131±0.9ms 19.0±1ms 0.14 bench_lib.Unique.time_unique(1778279, 1778279)

+ 9.20±0s 1.49±0.09s 0.16 bench_lib.Unique.time_unique(100000000, 100000000)

+ 607±2μs 111±40μs 0.18 bench_lib.Unique.time_unique(31622, 10)

+ 2.37±0.04s 444±10ms 0.19 bench_lib.Unique.time_unique(100000000, 10)

+ 38.4±0.9ms 7.35±1ms 0.19 bench_lib.Unique.time_unique(1778279, 10)

+ 2.33±0.06s 462±200ms 0.20 bench_lib.Unique.time_unique_with_counts(100000000, 10)

+ 1.72±0.01ms 357±20μs 0.21 bench_lib.Unique.time_unique(31622, 31622)

+ 1.24±0.01ms 260±50μs 0.21 bench_lib.Unique.time_unique_with_counts(31622, 562)

+ 38.8±1ms 8.92±0.9ms 0.23 bench_lib.Unique.time_unique_with_counts(1778279, 10)

+ 139±3ms 38.2±3ms 0.28 bench_lib.Unique.time_unique_with_counts(1778279, 1778279)

+ 1.91±0.02ms 717±100μs 0.38 bench_lib.Unique.time_unique_with_counts(31622, 31622)

+ 630±6μs 253±40μs 0.40 bench_lib.Unique.time_unique_with_counts(31622, 10)Slow downs: - 11.1±0.1μs 20.2±0.9μs 1.82 bench_lib.Unique.time_unique(562, 562)

- 19.0±0.7μs 34.4±7μs 1.82 bench_lib.Unique.time_unique_with_counts(562, 562)

- 10.1±0.2μs 22.3±0.9μs 2.20 bench_lib.Unique.time_unique(562, 1778279)

- 14.4±0.08μs 32.2±8μs 2.24 bench_lib.Unique.time_unique_with_counts(562, 10)

- 9.92±0.2μs 22.3±0.7μs 2.25 bench_lib.Unique.time_unique(562, 31622)

- 10.7±0.2μs 24.3±7μs 2.27 bench_lib.Unique.time_unique_with_counts(10, 10)

- 9.75±0.07μs 22.3±0.6μs 2.29 bench_lib.Unique.time_unique(562, 100000000)

- 8.22±0.1μs 19.1±1μs 2.32 bench_lib.Unique.time_unique(562, 10)

- 18.2±0.6μs 49.0±20μs 2.69 bench_lib.Unique.time_unique_with_counts(562, 1778279)

- 10.5±0.1μs 29.7±10μs 2.83 bench_lib.Unique.time_unique_with_counts(10, 31622)

- 16.9±0.1μs 49.1±10μs 2.90 bench_lib.Unique.time_unique_with_counts(562, 31622)

- 17.4±0.1μs 52.1±20μs 2.99 bench_lib.Unique.time_unique_with_counts(562, 100000000)

- 10.7±0.1μs 32.4±8μs 3.04 bench_lib.Unique.time_unique_with_counts(10, 100000000)

- 10.4±0.09μs 33.0±10μs 3.18 bench_lib.Unique.time_unique_with_counts(10, 562)

- 10.6±0.1μs 37.5±10μs 3.55 bench_lib.Unique.time_unique_with_counts(10, 1778279)

- 4.43±0.04μs 16.3±1μs 3.67 bench_lib.Unique.time_unique(10, 31622)

- 4.46±0.1μs 16.7±2μs 3.74 bench_lib.Unique.time_unique(10, 100000000)

- 4.33±0.08μs 17.1±1μs 3.95 bench_lib.Unique.time_unique(10, 1778279)

- 4.41±0.03μs 17.5±0.6μs 3.98 bench_lib.Unique.time_unique(10, 562)

- 4.50±0.06μs 18.1±5μs 4.02 bench_lib.Unique.time_unique(10, 10) |

|

Posted to the mailing list. |

I have not seen any email, maybe you got the wrong thing? This is the right one: https://mail.python.org/mailman3/lists/numpy-discussion.python.org/ |

|

Ah, I see, sorry. It said "Your message to the NumPy-Discussion mailing-list was rejected for the following Will do it correctly this time... |

This PR fixes the issue discussed on #12065 and #21843 where 'timedelta64' was noted to be a subtype of numpy.integer. This in principle should detect any cases where int(np.min(ar2)) fails. This PR also adds unittests for these. * TST: Create in1d test for timedelta input * MAINT: fix in1d for timedelta input * TST: in1d raise ValueError for timedelta input * MAINT: Clean up type checking for isin kind="table" * TST: Add test for mixed boolean/integer in1d * MAINT: Increase readability of in1d type checking * STY: Apply small code style tweaks This is probably really mainly my personal opinion... Co-authored-by: Sebastian Berg <[email protected]>

67da234 to

837101a

Compare

|

Hey @seberg! It's been a while but I realized we never merged this yet. I have a bit of time so I was wondering if we could push on this PR and try to get it merged? I just updated my branch to Recent update: there was an issue with Cheers, |

This commit implements the same lookup table method from #12065 for

np.unique.np.uniqueis, likenp.isin, based on sorting and array comparison. These methods, though more generic, can be greatly sped up for integer dtypes with a lookup table. For unique, this works as follows:calloca lookup table with each element corresponding to an integer:x = np.zeros(ar_range)1where a number exists in the array:x[ar - ar_min] = 11:return np.nonzero(x) + ar_minA similar strategy can be used for

return_counts=True. Instead of filling the lookup table with1, you can use the table to count, with, for example,which will count the occurrences of each integer. (Though I am not sure if this is the best way to do it. Comments appreciated!)

I can see speedups similar to found with

np.isinfor large integral arrays:Comments very much appreciated!

cc'ing everybody recently active in the

isinPR: @seberg @WarrenWeckesser @hameerabbasi @shoyer @adeakMailing list discussion: https://mail.python.org/archives/list/[email protected]/thread/3SJTDDF7D4VLZIUPQKHJMSYU2RVY3MHH/

Edit 1: Improved speed for

return_counts=True.Edit 2: No longer a work in progress.

Edit 3: Added mailing list link