Real-time monitoring and performance troubleshooting for Apache Spark

Spark Dashboard is a lightweight monitoring solution for Apache Spark clusters. It visualizes Spark and system metrics in Grafana, helping users track trends, detect anomalies, and troubleshoot performance issues faster.

- Real-time monitoring: Visualize Spark and system metrics in Grafana, including CPU, memory, active tasks, and I/O, to quickly spot trends and anomalies.

- Easy deployment: Run locally with a container or deploy on Kubernetes with Helm.

- Broad compatibility: Supports Apache Spark 3.x and 4.x across Hadoop, Kubernetes, and Spark Standalone environments.

- Architecture

- Deploying Spark Dashboard v2

- Notes on Spark Connect

- Examples and getting started

- Legacy implementation (v1)

- Advanced configuration and notes

- Watch the Spark Dashboard demo and tutorial

- Notes on Spark Dashboard

- Building an Apache Spark Performance Lab

- Blog post on Spark Dashboard

- Talk at Data + AI Summit 2021, slides

- sparkMeasure, a tool for troubleshooting Apache Spark workloads

- TPCDS_PySpark, a TPC-DS workload generator for Apache Spark

Main author and contact: [email protected]

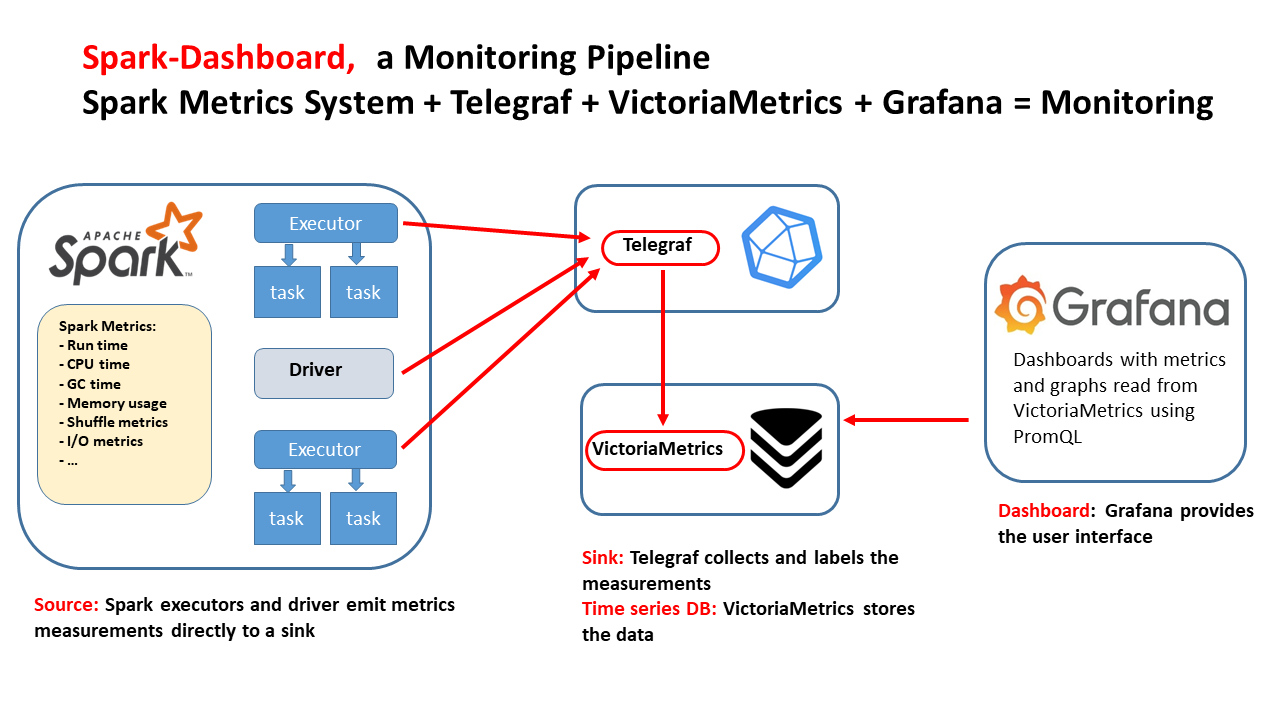

Spark Dashboard provides an end-to-end monitoring pipeline for Apache Spark using open-source components. It is designed to deliver real-time visibility into Spark cluster health and performance, from metric generation to visualization.

- Apache Spark metrics: Apache Spark generates detailed performance metrics through its metrics system. Both the driver and executors emit metrics such as runtime, CPU usage, garbage collection time, memory consumption, shuffle activity, and I/O statistics in Graphite format.

- Telegraf: Telegraf acts as the collection agent. It ingests Spark metrics, enriches them with labels and tags, and forwards them to the storage backend.

- VictoriaMetrics: VictoriaMetrics stores the collected metrics efficiently as time-series data, making it well suited for both real-time monitoring and historical analysis.

- Grafana: Grafana provides the visualization layer. It queries VictoriaMetrics using PromQL or MetricsQL and displays interactive dashboards for observing trends and identifying bottlenecks.

Together, these components provide a scalable monitoring solution for Apache Spark.
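To make the first hop of this pipeline concrete, the sketch below formats a metric in the Graphite plaintext protocol (`path value timestamp`, newline-terminated) and sends it over TCP to port 2003. The metric path `lucatest.manual_test.heartbeat` and the helper names are hypothetical and only serve as an illustration; whether such a hand-made metric shows up in the bundled dashboards depends on how Telegraf is configured to parse incoming paths, which is not covered here.

```python
import socket
import time


def graphite_line(path: str, value, timestamp: int) -> str:
    """Format one metric in the Graphite plaintext protocol."""
    return f"{path} {value} {timestamp}\n"


def send_test_metric(host: str = "localhost", port: int = 2003) -> None:
    """Send a single hand-made metric to the Graphite endpoint.

    The metric path below is a hypothetical example, not a name Spark emits.
    """
    line = graphite_line("lucatest.manual_test.heartbeat", 1, int(time.time()))
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(line.encode("ascii"))
```

Calling `send_test_metric()` while the container is running can be a quick way to confirm that the ingestion port accepts data before involving Spark at all.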

The recommended deployment method is Spark Dashboard v2.

- dockerfiles_v2/ and charts_v2/ contain the current v2 implementation.

- Legacy v1 assets are preserved under legacy/dockerfiles_v1/ and legacy/charts_v1/.

Follow these steps to deploy Spark Dashboard v2 with Docker or Podman.

The container image includes VictoriaMetrics for metrics storage and Grafana for visualization.

Using Docker:

docker run -p 3000:3000 -p 2003:2003 -d lucacanali/spark-dashboard

Using Podman:

podman run -p 3000:3000 -p 2003:2003 -d lucacanali/spark-dashboard

To send metrics to Spark Dashboard, configure Spark to export metrics to the Graphite endpoint exposed by the container.

Edit metrics.properties in $SPARK_CONF_DIR and add:

# Configure Graphite sink for Spark metrics

*.sink.graphite.class=org.apache.spark.metrics.sink.GraphiteSink

*.sink.graphite.host=localhost

*.sink.graphite.port=2003

*.sink.graphite.period=10

*.sink.graphite.unit=seconds

*.sink.graphite.prefix=lucatest

# Enable JVM metrics collection

*.source.jvm.class=org.apache.spark.metrics.source.JvmSource

Optionally, enable additional Spark metric sources in spark-defaults.conf or your launch command:

--conf spark.metrics.staticSources.enabled=true

--conf spark.metrics.appStatusSource.enabled=true

You can also pass the configuration directly when starting Spark:

VICTORIAMETRICS_ENDPOINT=$(hostname)

bin/spark-shell \

--conf "spark.metrics.conf.*.sink.graphite.class=org.apache.spark.metrics.sink.GraphiteSink" \

--conf "spark.metrics.conf.*.sink.graphite.host=${VICTORIAMETRICS_ENDPOINT}" \

--conf "spark.metrics.conf.*.sink.graphite.port=2003" \

--conf "spark.metrics.conf.*.sink.graphite.period=10" \

--conf "spark.metrics.conf.*.sink.graphite.unit=seconds" \

--conf "spark.metrics.conf.*.sink.graphite.prefix=lucatest" \

--conf "spark.metrics.conf.*.source.jvm.class=org.apache.spark.metrics.source.JvmSource" \

--conf "spark.metrics.staticSources.enabled=true" \

--conf "spark.metrics.appStatusSource.enabled=true"

To collect and display process tree memory details, also add:

--conf spark.executor.processTreeMetrics.enabled=true

Once the container is running and Spark is configured to export metrics:

- Open Grafana at http://localhost:3000

- Default credentials:

  - User: admin

  - Password: admin

The bundled dashboard, Spark_Perf_Dashboard_v04_promQL, displays key Spark metrics such as runtime, CPU, I/O, shuffle activity, and task counts, along with detailed time-series graphs.

Ensure that a Spark application is running and configured to send metrics, otherwise no data will appear in Grafana.
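For PySpark applications, the same Graphite sink settings shown earlier can also be set programmatically before the session starts. The following is a minimal sketch, not part of the project: the helper names `graphite_sink_conf` and `build_session` are hypothetical, and the host, port, and prefix defaults simply mirror the values used in the examples above. The settings must be in place before the SparkContext is created, since the metrics system reads them at startup.

```python
def graphite_sink_conf(host: str = "localhost", port: int = 2003,
                       prefix: str = "lucatest") -> dict:
    """Return the Spark settings that route metrics to a Graphite endpoint."""
    p = "spark.metrics.conf.*."
    return {
        p + "sink.graphite.class": "org.apache.spark.metrics.sink.GraphiteSink",
        p + "sink.graphite.host": host,
        p + "sink.graphite.port": str(port),
        p + "sink.graphite.period": "10",
        p + "sink.graphite.unit": "seconds",
        p + "sink.graphite.prefix": prefix,
        p + "source.jvm.class": "org.apache.spark.metrics.source.JvmSource",
        "spark.metrics.staticSources.enabled": "true",
        "spark.metrics.appStatusSource.enabled": "true",
    }


def build_session(app_name: str = "dashboard-test"):
    """Create a SparkSession with the Graphite sink configured (requires PySpark)."""
    # Imported lazily so graphite_sink_conf() can be used without PySpark installed.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name)
    for key, value in graphite_sink_conf().items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

Keeping the settings in a plain dictionary makes it easy to reuse them for spark-submit flags or to adjust the endpoint when the dashboard runs on another host.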

For test workloads, you can use TPCDS_PySpark.

By default, VictoriaMetrics data is not preserved across container restarts. To keep historical metrics, mount a persistent volume.

Example using a local directory:

mkdir metrics_data

docker run --network=host \

-v ./metrics_data:/victoria-metrics-data \

-d lucacanali/spark-dashboard:v02

The charts_v2/ directory contains the Helm chart for Spark Dashboard v2.

If your cluster does not provide a default storage class:

helm install spark-dashboard ./charts_v2 --set persistence.enabled=false

If your cluster provides a suitable storage class and you want persistence:

helm install spark-dashboard ./charts_v2 --set persistence.storageClass=<your-storage-class>

Check deployment status:

kubectl get pods -l app.kubernetes.io/name=spark-dashboard-v2

kubectl get svc spark-dashboard-v2

To expose Grafana and Graphite externally using a LoadBalancer service:

helm install spark-dashboard ./charts_v2 \

--set persistence.enabled=false \

--set service.type=LoadBalancer

This exposes:

- Grafana on port 3000

- Graphite on port 2003

VictoriaMetrics port 8428 is not exposed on the load balancer by default.

To expose VictoriaMetrics as well:

helm install spark-dashboard ./charts_v2 \

--set persistence.enabled=false \

--set service.type=LoadBalancer \

--set service.victoriametrics.exposeOnLoadBalancer=true

If Spark runs inside the cluster, use the service DNS name as the Graphite endpoint:

spark-dashboard-v2:2003

If Spark runs outside the cluster, wait for an external IP:

kubectl get svc spark-dashboard-v2 -w

Then use:

<external-ip>:2003

Grafana will be available at:

http://<external-ip>:3000

For testing, you can also use port-forwarding:

kubectl port-forward svc/spark-dashboard-v2 3000:3000 2003:2003

Then open:

http://localhost:3000

If Spark runs on the same machine as the port-forward, use localhost:2003 as the Graphite sink endpoint.

If pods remain in Pending, check for storage issues:

kubectl get pvc

kubectl describe pod -l app.kubernetes.io/name=spark-dashboard-v2

kubectl get storageclass

If needed, reinstall without persistence:

helm uninstall spark-dashboard

helm install spark-dashboard ./charts_v2 --set persistence.enabled=false

If the service exists but external access fails, verify the in-cluster path first:

kubectl get endpoints spark-dashboard-v2

kubectl run netcheck --rm -it --image=busybox:1.36 --restart=Never -- sh

From the debug shell:

nc -vz spark-dashboard-v2 3000

nc -vz spark-dashboard-v2 2003

If those checks succeed, the chart is working and the remaining issue is external networking, firewall rules, or service exposure.

If EXTERNAL-IP stays pending for a LoadBalancer service, your cluster likely does not have load balancer integration configured. In that case, use NodePort, deploy a solution such as MetalLB, or use the external exposure mechanism supported by your Kubernetes environment.
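When the check has to run from a machine outside the cluster, for example the host where the Spark driver runs, a small Python equivalent of the nc checks can be handy. This is only a sketch: the function names are hypothetical, and the ports are the Grafana (3000) and Graphite (2003) ports used throughout this document.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_dashboard(host: str) -> dict:
    """Check the Grafana and Graphite ports of a Spark Dashboard endpoint."""
    return {
        name: port_open(host, port)
        for name, port in (("grafana", 3000), ("graphite", 2003))
    }
```

For example, `check_dashboard("<external-ip>")` returns a dictionary with one boolean per service, which makes it straightforward to see which of the two ports is unreachable.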

The Extended Spark Dashboard adds OS- and storage-level observability on top of standard Spark metrics. It uses Spark Plugins to collect additional metrics and stores them in the same VictoriaMetrics backend.

The extended dashboard adds three groups of graphs:

- CGroup metrics: Useful for Spark running on Kubernetes.

- Cloud storage metrics: Covers storage backends such as S3A, GCS, WASB, and similar systems.

- Advanced HDFS statistics: Provides deeper visibility into HDFS activity and performance.

Add the following to your Spark configuration:

--conf spark.jars.packages=ch.cern.sparkmeasure:spark-plugins_2.13:0.4

--conf spark.plugins=ch.cern.HDFSMetrics,ch.cern.CgroupMetrics,ch.cern.CloudFSMetrics

In Grafana, select:

Spark_Perf_Dashboard_v04_PromQL_with_SparkPlugins

This dashboard includes additional graphs for OS and storage metrics.

Spark Connect allows a lightweight Spark client to connect remotely to a Spark cluster.

When using Spark Connect, Spark Dashboard must run on the Spark Connect server, not on the client.

- Start the Spark Dashboard container.

- Edit the metrics.properties file in the Spark Connect conf directory as described above.

- Start Spark Connect:

sbin/start-connect-server.sh

Metrics from Spark Connect will then be sent to Spark Dashboard and visualized in Grafana.

See example graphs here:

You can use TPCDS_PySpark to generate a TPC-DS workload and test the dashboard.

You can run this locally or in the cloud, for example with GitHub Codespaces:

# Install dependencies

pip install pyspark

pip install sparkmeasure

pip install tpcds_pyspark

# Download test data

wget https://sparkdltrigger.web.cern.ch/sparkdltrigger/TPCDS/tpcds_10.zip

unzip -q tpcds_10.zip

# 1. Run a minimal test

tpcds_pyspark_run.py -d tpcds_10 -n 1 -r 1 --queries q1,q2

# 2. Start the dashboard

docker run -p 2003:2003 -p 3000:3000 -d lucacanali/spark-dashboard

# 3. Run the workload and send metrics to the dashboard

TPCDS_PYSPARK=$(which tpcds_pyspark_run.py)

spark-submit --master local[*] \

--conf "spark.metrics.conf.*.sink.graphite.class=org.apache.spark.metrics.sink.GraphiteSink" \

--conf "spark.metrics.conf.*.sink.graphite.host=localhost" \

--conf "spark.metrics.conf.*.sink.graphite.port=2003" \

--conf "spark.metrics.conf.*.sink.graphite.period=10" \

--conf "spark.metrics.conf.*.sink.graphite.unit=seconds" \

--conf "spark.metrics.conf.*.sink.graphite.prefix=lucatest" \

--conf "spark.metrics.conf.*.source.jvm.class=org.apache.spark.metrics.source.JvmSource" \

--conf "spark.metrics.staticSources.enabled=true" \

--conf "spark.metrics.appStatusSource.enabled=true" \

--conf spark.driver.memory=4g \

--conf spark.log.level=error \

--packages ch.cern.sparkmeasure:spark-measure_2.13:0.25 \

$TPCDS_PYSPARK -d tpcds_10

Then:

- open http://localhost:3000

- log in with admin/admin

- optionally open the Spark UI at http://localhost:4040

The dashboard is more informative when Spark runs on cluster resources rather than only in local mode.

Example on a YARN cluster:

TPCDS_PYSPARK=$(which tpcds_pyspark_run.py)

spark-submit --master yarn \

--conf spark.log.level=error \

--conf spark.executor.cores=8 \

--conf spark.executor.memory=64g \

--conf spark.driver.memory=16g \

--conf spark.driver.extraClassPath=tpcds_pyspark/spark-measure_2.13-0.25.jar \

--conf spark.dynamicAllocation.enabled=false \

--conf spark.executor.instances=32 \

--conf spark.sql.shuffle.partitions=512 \

$TPCDS_PYSPARK -d hdfs://<PATH>/tpcds_10000_parquet_1.13.1

Example on Kubernetes with S3 storage and Spark plugins:

TPCDS_PYSPARK=$(which tpcds_pyspark_run.py)

spark-submit --master k8s://https://xxx.xxx.xxx.xxx:6443 \

--conf spark.kubernetes.container.image=<URL>/spark:v3.5.1 \

--conf spark.kubernetes.namespace=xxx \

--conf spark.eventLog.enabled=false \

--conf spark.task.maxDirectResultSize=2000000000 \

--conf spark.shuffle.service.enabled=false \

--conf spark.executor.cores=8 \

--conf spark.executor.memory=32g \

--conf spark.driver.memory=4g \

--packages org.apache.hadoop:hadoop-aws:3.3.4,ch.cern.sparkmeasure:spark-measure_2.13:0.25,ch.cern.sparkmeasure:spark-plugins_2.13:0.4 \

--conf spark.plugins=ch.cern.HDFSMetrics,ch.cern.CgroupMetrics,ch.cern.CloudFSMetrics \

--conf spark.cernSparkPlugin.cloudFsName=s3a \

--conf spark.dynamicAllocation.enabled=false \

--conf spark.executor.instances=4 \

--conf spark.hadoop.fs.s3a.secret.key=$SECRET_KEY \

--conf spark.hadoop.fs.s3a.access.key=$ACCESS_KEY \

--conf spark.hadoop.fs.s3a.endpoint="https://s3.cern.ch" \

--conf spark.hadoop.fs.s3a.impl="org.apache.hadoop.fs.s3a.S3AFileSystem" \

--conf spark.executor.metrics.fileSystemSchemes="file,hdfs,s3a" \

--conf spark.hadoop.fs.s3a.fast.upload=true \

--conf spark.hadoop.fs.s3a.path.style.access=true \

--conf spark.hadoop.fs.s3a.list.version=1 \

$TPCDS_PYSPARK -d s3a://luca/tpcds_100

Spark Dashboard v1 is the original implementation and uses InfluxDB as the time-series backend.

Architecture reference:

Legacy assets are stored under:

- legacy/dockerfiles_v1/

- legacy/charts_v1/

See also:

docker run -p 3000:3000 -p 2003:2003 -d lucacanali/spark-dashboard:v01

- Port 2003 is the Graphite ingestion endpoint

- Port 3000 is Grafana

More options, including persistence across restarts:

helm install spark-dashboard https://github.com/cerndb/spark-dashboard/raw/master/charts/spark-dashboard-0.3.0.tgz

More details:

Optionally, you can add annotations for query, job, and stage start and end times in the v1 dashboard.

INFLUXDB_HTTP_ENDPOINT="http://$(hostname):8086"

<spark-submit config>

--packages ch.cern.sparkmeasure:spark-measure_2.13:0.25 \

--conf spark.sparkmeasure.influxdbURL=$INFLUXDB_HTTP_ENDPOINT \

--conf spark.extraListeners=ch.cern.sparkmeasure.InfluxDBSink

- More details and alternative configurations: Spark Dashboard notes

- The dashboard can be used with Spark on Kubernetes, YARN, Standalone, or local mode

- For Spark local mode, use Spark 3.1 or newer; see SPARK-31711

- In v2, Telegraf listens on port 2003 for Graphite ingestion, and VictoriaMetrics uses port 8428

- In v1, InfluxDB uses port 2003 for Graphite ingestion and port 8086 for HTTP access when using --network=host

- Ensure these endpoints are reachable from the Spark driver and executors

Find the InfluxDB service IP with:

kubectl get service spark-dashboard-influx

Example service DNS name:

spark-dashboard-influx.default.svc.cluster.local

- The project includes example dashboards, but only a subset of available metrics is visualized by default

- For the full list of Spark metrics, see the Spark metrics documentation

- To add new dashboards, place them in the appropriate grafana_dashboards folder and rebuild the container image or repackage the Helm chart

- With Helm, updating the chart is enough to load dashboards through ConfigMaps

- Automatic persistence of manual Grafana edits is not currently supported
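When building new dashboards, it can help to see which metric names are actually stored. VictoriaMetrics exposes a Prometheus-compatible HTTP API, so one possible approach is to list the values of the `__name__` label. The sketch below assumes VictoriaMetrics is reachable on port 8428 from where the script runs (for example with `--network=host`, or with the port exposed explicitly); the function names are hypothetical.

```python
import json
import urllib.request


def metric_names_url(host: str = "localhost", port: int = 8428) -> str:
    """Build the URL of the Prometheus-compatible endpoint listing metric names."""
    return f"http://{host}:{port}/api/v1/label/__name__/values"


def parse_metric_names(body: str) -> list:
    """Extract the sorted list of metric names from the JSON response body."""
    payload = json.loads(body)
    if payload.get("status") != "success":
        raise ValueError(f"unexpected response status: {payload.get('status')}")
    return sorted(payload.get("data", []))


def list_metric_names(host: str = "localhost", port: int = 8428) -> list:
    """Query VictoriaMetrics and return the stored metric names."""
    with urllib.request.urlopen(metric_names_url(host, port), timeout=10) as resp:
        return parse_metric_names(resp.read().decode("utf-8"))
```

The returned names can then be used as a starting point for PromQL or MetricsQL queries in a new Grafana panel.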