[Issue 84] fix cpu and mem in docker for Linux #81

Conversation

caigy

left a comment

Also, link the PR to the related issue and rename the title so that it starts with '[ISSUE xx]'.

images/namesrv/alpine/Dockerfile

Outdated

| curl https://archive.apache.org/dist/rocketmq/${ROCKETMQ_VERSION}/rocketmq-all-${ROCKETMQ_VERSION}-bin-release.zip -o rocketmq.zip; \ | ||

| curl https://archive.apache.org/dist/rocketmq/${ROCKETMQ_VERSION}/rocketmq-all-${ROCKETMQ_VERSION}-bin-release.zip.asc -o rocketmq.zip.asc; \ | ||

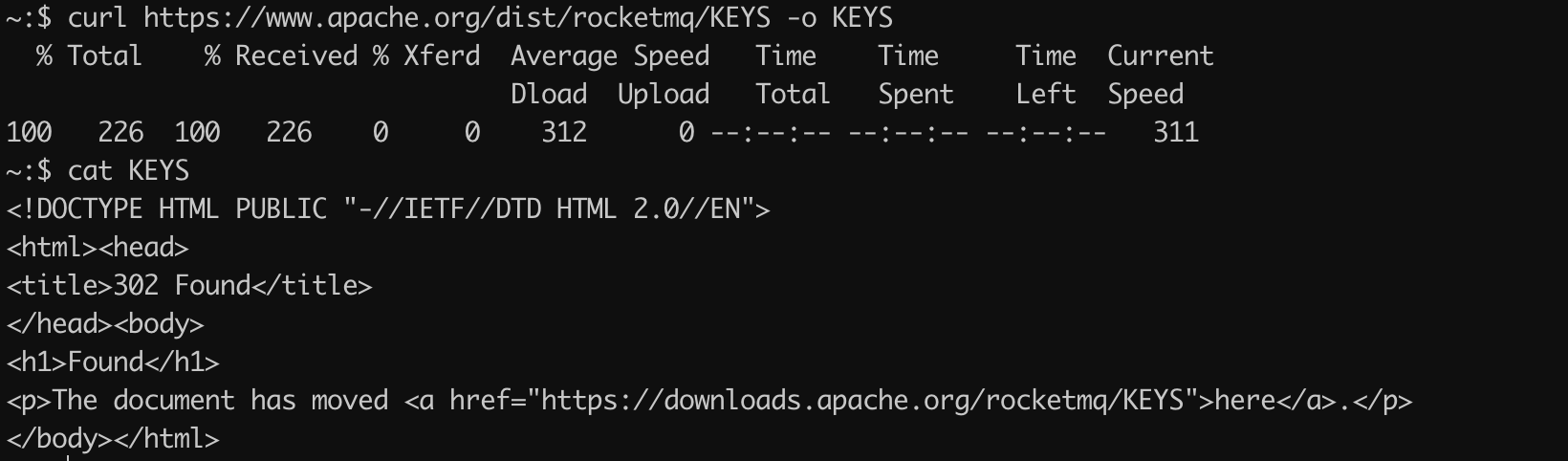

| curl https://www.apache.org/dist/rocketmq/KEYS -o KEYS; \ | ||

| curl https://downloads.apache.org/rocketmq/KEYS -o KEYS; \ |

Could you explain the reason for this change? It does not seem to be related to the title.

Ok, this is indeed a problem. I think you can use curl -L to solve the redirect problem, which is the same as that in rocketmq-docker repo.
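
A minimal sketch of the curl -L suggestion, assuming the same archive.apache.org URL pattern shown in the Dockerfile lines above. The helper names `release_url` and `download` are hypothetical and exist only for illustration. `-L` tells curl to follow HTTP redirects, and `-f` makes it fail on HTTP errors instead of saving an error page.

```shell
#!/bin/sh
# Hypothetical helper: build the release archive URL for a given version,
# following the URL pattern used in the Dockerfile.
release_url() {
    echo "https://archive.apache.org/dist/rocketmq/$1/rocketmq-all-$1-bin-release.zip"
}

# Hypothetical helper: download a URL ($1) to a local file ($2).
# -L follows redirects, -f fails on HTTP errors,
# -sS hides the progress meter but still prints errors.
download() {
    curl -fsSL "$1" -o "$2"
}
```

With this, something like `download https://www.apache.org/dist/rocketmq/KEYS KEYS` could keep the original URL and let curl follow whatever redirect the server issues.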

| podAntiAffinity = &corev1.PodAntiAffinity{ | ||

| RequiredDuringSchedulingIgnoredDuringExecution: []corev1.PodAffinityTerm{corev1.PodAffinityTerm{ | ||

| TopologyKey: "kubernetes.io/hostname", | ||

| LabelSelector: &metav1.LabelSelector{ | ||

| MatchLabels: ls, | ||

| }, | ||

| }}, | ||

| PreferredDuringSchedulingIgnoredDuringExecution: []corev1.WeightedPodAffinityTerm{corev1.WeightedPodAffinityTerm{ | ||

| Weight: 100, | ||

| PodAffinityTerm: corev1.PodAffinityTerm{ | ||

| TopologyKey: "kubernetes.io/hostname", | ||

| LabelSelector: &metav1.LabelSelector{ | ||

| MatchLabels: labelsForBroker(broker.Name), | ||

| }, | ||

| }, | ||

| }}, | ||

| } |

I have no idea why the nodeAffinity is needed, but the podAntiAffinity definitely is. I don't want the whole cluster (possibly with multiple master brokers) to become unwritable because all the master brokers were scheduled onto the same node and that node went down. The situation gets worse if Kubernetes can't create a new master pod in time.

You'd better open another PR for that, because it is clearly unrelated to this issue.

| system_memory_in_mb=$(($(cat /sys/fs/cgroup/memory/memory.limit_in_bytes)/1024/1024)) | ||

| system_cpu_cores=$(($(cat /sys/fs/cgroup/cpu/cpu.cfs_quota_us)/100000)) |

It would be better to check that the memory and CPU values read from cgroup are smaller than the system memory and CPU, because resource limits may not be set.

This is better, and I fixed it. Although I think it's still not correct if anyone uses it in Kubernetes without a memory limit.
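
The check discussed in this thread could be sketched roughly as follows, assuming the cgroup v1 path used in the diff. The helper names `pick_limit`, `host_memory_in_mb`, and `cgroup_memory_in_mb` are hypothetical. When no memory limit is set, memory.limit_in_bytes typically holds a very large number, so taking the cgroup value only when it is smaller than the host value falls back to the host memory in that case.

```shell
#!/bin/sh
# Hypothetical helper: print the cgroup value ($2) only when it is a positive
# number smaller than the host value ($1); otherwise print the host value.
pick_limit() {
    if [ "$2" -gt 0 ] && [ "$2" -lt "$1" ]; then
        echo "$2"
    else
        echo "$1"
    fi
}

# Host memory in MB, read from /proc/meminfo (MemTotal is reported in kB).
host_memory_in_mb() {
    awk '/^MemTotal:/ { print int($2 / 1024) }' /proc/meminfo
}

# cgroup v1 memory limit in MB; prints 0 when the file is not readable,
# which makes pick_limit fall back to the host value.
cgroup_memory_in_mb() {
    limit_file=/sys/fs/cgroup/memory/memory.limit_in_bytes
    if [ -r "$limit_file" ]; then
        echo $(( $(cat "$limit_file") / 1024 / 1024 ))
    else
        echo 0
    fi
}
```

A caller might then write `system_memory_in_mb=$(pick_limit "$(host_memory_in_mb)" "$(cgroup_memory_in_mb)")`. Whether the host value is the right fallback for a pod without a memory limit is exactly the open question raised above.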

This reverts commit 5415810.

| system_memory_in_mb=$system_memory_in_mb_in_docker | ||

| fi | ||

| system_cpu_cores=`egrep -c 'processor([[:space:]]+):.*' /proc/cpuinfo` | ||

| system_cpu_cores_in_docker=$(($(cat /sys/fs/cgroup/cpu/cpu.cfs_quota_us)/100000)) |

Is 100000 the value read from /sys/fs/cgroup/cpu/cpu.cfs_period_us?
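
On this question: in cgroup v1 the CFS period is exposed in cpu.cfs_period_us, with 100000 being the usual default, and cpu.cfs_quota_us is -1 when no CPU limit is set. A sketch that divides by the period instead of a hard-coded constant might look like the following. The function name `cores_from_quota` is hypothetical, and rounding up is an assumption of this sketch (the diff uses plain integer division).

```shell
#!/bin/sh
# Hypothetical helper: convert a CFS quota ($1) and period ($2), both in
# microseconds, into a whole number of cores, rounding up.
# Prints 0 when no quota is set (quota <= 0) or the period is invalid,
# so the caller can fall back to the host core count.
cores_from_quota() {
    if [ "$1" -le 0 ] || [ "$2" -le 0 ]; then
        echo 0
    else
        echo $(( ($1 + $2 - 1) / $2 ))
    fi
}
```

It could be called as `cores_from_quota "$(cat /sys/fs/cgroup/cpu/cpu.cfs_quota_us)" "$(cat /sys/fs/cgroup/cpu/cpu.cfs_period_us)"`, keeping the /proc/cpuinfo count whenever the result is 0.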

@overstep123 Please link the PR to the related issue and rename the title so that it starts with '[ISSUE xx]'.
