Workaround OOM issue in classification 2D integration tests #3949

wyli merged 8 commits into Project-MONAI:dev from …


merge master

merge master

merge master

merge master

merge master

/black

/build

/integration-test

Docker 21.02 comes with CUDA 11.2; which build of PyTorch 1.11 did you install? (The CI still doesn't pass: https://github.com/Project-MONAI/MONAI/actions/runs/1990631879)

Hi @wyli, I deleted the torch in the 21.02 docker and used … The current CI error is not OOM anymore; it's because the expected answers changed. I will update them soon. Thanks for pointing this out.


I am testing slightly older dockers; maybe we can temporarily roll back to an older docker to avoid changing the test cases. Thanks.


I tested the PyTorch dockers below with the MONAI dev code and their built-in PyTorch:

I plan to temporarily roll back our MONAI docker to PyTorch 21.10. Do you have any concerns? Thanks in advance.

Signed-off-by: Nic Ma <[email protected]>

e50ac48 to d061868


Hi @wyli, I think we can still run regular GPU tests with the latest dockers, so I only changed … Thanks.

/black

/build

/integration-test

/build

/integration-test

/build

…roject-MONAI#3949)"
This reverts commit 6ea9742.
Signed-off-by: Wenqi Li <[email protected]>

* fixes multiprocessing memory issue
  Signed-off-by: Wenqi Li <[email protected]>
* Revert "Workaround OOM issue in classification 2D integration tests (#3949)"
  This reverts commit 6ea9742.
  Signed-off-by: Wenqi Li <[email protected]>
* update tests
  Signed-off-by: Wenqi Li <[email protected]>
* [pre-commit.ci] auto fixes from pre-commit.com hooks
  for more information, see https://pre-commit.ci
* fixes typo
  Signed-off-by: Wenqi Li <[email protected]>

Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>

Description

During debugging #3934 , we found that the

test_integration_classification_2dintegration test uses more than 14GB memory which may cause OOM in CI server sometimes.Then I tried to do lots experiments to identify the root cause with this command:

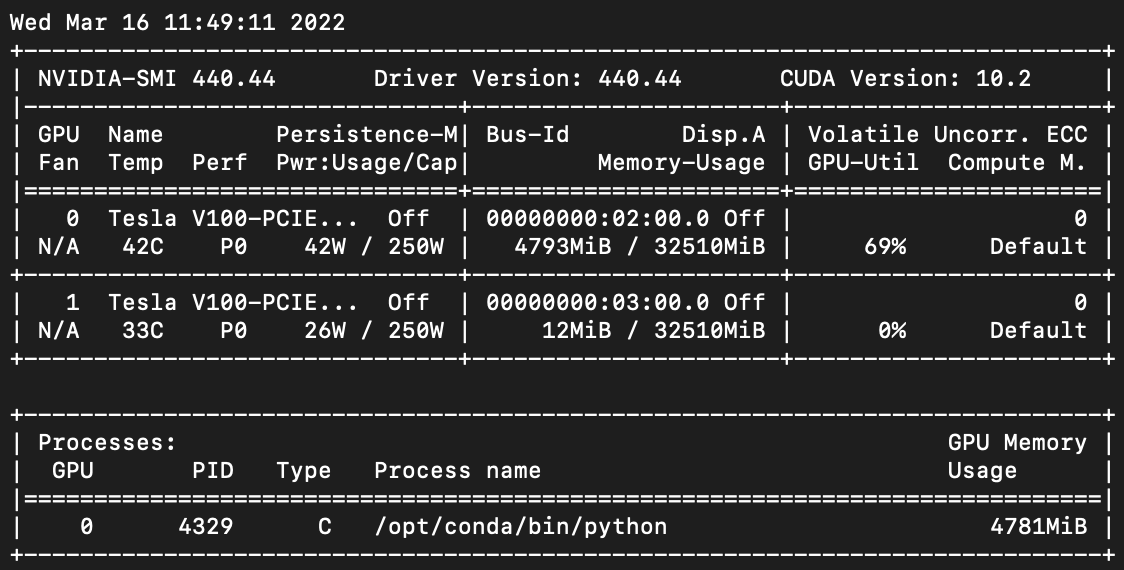

export CUDA_VISIBLE_DEVICES=$(python -m tests.utils); python -m tests.test_integration_classification_2dSo I think it seems the issue is not related to MONAI or PyTorch code, maybe some CUDA caching logic issue?
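One way to reproduce per-process measurements like these is to ask `nvidia-smi` for every process that holds a CUDA context, together with its memory usage. The sketch below assumes `nvidia-smi` is on the `PATH`; `parse_compute_apps` and `gpu_memory_by_pid` are hypothetical helpers for illustration, not part of MONAI or its test utilities.

```python
import subprocess
from typing import Dict


def parse_compute_apps(csv_text: str) -> Dict[int, int]:
    """Parse `pid, used_memory` rows (MiB, no header, no units) into {pid: MiB}."""
    usage: Dict[int, int] = {}
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue
        pid_field, mem_field = (field.strip() for field in line.split(","))
        pid = int(pid_field)
        # A PID can appear once per GPU it uses; accumulate across devices.
        usage[pid] = usage.get(pid, 0) + int(mem_field)
    return usage


def gpu_memory_by_pid() -> Dict[int, int]:
    """Query nvidia-smi for all compute processes (requires an NVIDIA driver)."""
    out = subprocess.run(
        [
            "nvidia-smi",
            "--query-compute-apps=pid,used_memory",
            "--format=csv,noheader,nounits",
        ],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    return parse_compute_apps(out)
```

Calling `gpu_memory_by_pid()` while the training loop runs lists one entry per process that created a CUDA context; if the DataLoader workers initialize CUDA, they appear as extra PIDs next to the main process, and `sum(...values())` gives the total.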

I then analyzed the integration test and found that in Case 1, during training, every DataLoader worker process occupies 839MB of GPU memory; since the test sets `num_workers=10`, the total GPU memory is 14GB. In Case 2 (21.02 docker), only the main process occupies GPU memory, so the total is only 4GB.
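As a rough check on how the worker footprint scales, here is a back-of-the-envelope sketch. It assumes every worker holds the same ~839MB reported for Case 1 and deliberately leaves out the main process, whose Case 1 footprint is not stated here; `worker_gpu_memory_mb` is a hypothetical helper.

```python
PER_WORKER_MB = 839  # per-worker GPU footprint reported for Case 1


def worker_gpu_memory_mb(num_workers: int, per_worker_mb: int = PER_WORKER_MB) -> int:
    """Estimate GPU memory held by DataLoader workers.

    Assumes linear scaling: each worker process holds a fixed footprint.
    The main process is not included.
    """
    if num_workers < 0:
        raise ValueError("num_workers must be non-negative")
    return num_workers * per_worker_mb


before = worker_gpu_memory_mb(10)  # the test's original setting
after = worker_gpu_memory_mb(1)
print(f"worker memory: {before} MB -> {after} MB, saving {before - after} MB")
```

With `num_workers=0`, PyTorch's DataLoader loads data in the main process, so no separate worker processes are created and the worker term drops to zero.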

I also tried changing other DataLoader arguments, such as `pin_memory` and `persistent_workers`, but that didn't change anything. So this PR changes `num_workers` from 10 to 1, which uses much less memory and will fix the OOM issue on the CI server.

Status

Ready

Types of changes

* `./runtests.sh -f -u --net --coverage`
* `./runtests.sh --quick --unittests --disttests`
* `make html` command in the `docs/` folder.