Improve the observability of INSERT on distributed table#41034

Improve the observability of INSERT on distributed table#41034rschu1ze merged 18 commits intoClickHouse:masterfrom

Conversation

This comment was marked as outdated.

This comment was marked as outdated.

This comment was marked as outdated.

This comment was marked as outdated.

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

This comment was marked as outdated.

This comment was marked as outdated.

Signed-off-by: Frank Chen <[email protected]>

This comment was marked as outdated.

This comment was marked as outdated.

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

This comment was marked as outdated.

This comment was marked as outdated.

Co-authored-by: Azat Khuzhin <[email protected]>

Thanks for the explanation. I have not dived into the details of test. |

This is the cluster that is used - https://github.com/ClickHouse/ClickHouse/blob/master/programs/server/config.xml#L827-L840

You can simply use

That said that there will be one local node and one remote, just what you test needs. |

This comment was marked as outdated.

This comment was marked as outdated.

Signed-off-by: Frank Chen <[email protected]>

Signed-off-by: Frank Chen <[email protected]>

tests/queries/0_stateless/02417_opentelemetry_insert_on_distributed_table.sh

Outdated

Show resolved

Hide resolved

| # | ||

| ${CLICKHOUSE_CLIENT} -nq " | ||

| DROP TABLE IF EXISTS ${CLICKHOUSE_DATABASE}.dist_opentelemetry; | ||

| DROP TABLE IF EXISTS ${CLICKHOUSE_DATABASE}.local_opentelemetry; |

There was a problem hiding this comment.

You can omit CLICKHOUSE_DATABASE in CREATE/DROP, though it is minor.

There was a problem hiding this comment.

Actually, when I remove the database from the the CREATE/DROP, the tables are not correctly created under the ${CLICKHOUSE_DATABASE} database but under default database. We should add --database to the command so that correct database will be applied, so I didn't make change to these queries.

There was a problem hiding this comment.

This is done automatically by shell_config.sh -

ClickHouse/tests/queries/shell_config.sh

Line 21 in 9c5f9f1

So this should work, but like I said this is minor, and if you don't have any other changes you can ignore this. But if you have something else, then please apply this suggestion.

Signed-off-by: Frank Chen <[email protected]>

Signed-off-by: Frank Chen <[email protected]>

Hi @azat Last question, how does the CI setup the ClickHouse instance to listen on both 127.0.0.1 and 127.0.0.2? I created a loopback 127.0.0.2 by using |

So listening on

You don't need to create anything this should out of the box.

Yes, this is because it finds 127.2 on some interface locally and treat it as localhost because of this - ClickHouse/src/Common/isLocalAddress.cpp Lines 114 to 115 in 9c5f9f1 If you have macos setup, then I would use |

Ah, I almost forget we have this setting |

Signed-off-by: Frank Chen <[email protected]>

Signed-off-by: Frank Chen <[email protected]>

Signed-off-by: Frank Chen <[email protected]>

Signed-off-by: Frank Chen <[email protected]>

|

Looks like the building failure is not related to changes in this PR. |

|

Agree. Also the stress test failure looks unrelated to me. Should be good to merge once the remaining tests finished. |

| thread_trace_context->root_span.addAttribute("clickhouse.distributed", distributed_header.distributed_table); | ||

| thread_trace_context->root_span.addAttribute("clickhouse.remote", distributed_header.remote_table); | ||

| thread_trace_context->root_span.addAttribute("clickhouse.rows", distributed_header.rows); | ||

| thread_trace_context->root_span.addAttribute("clickhouse.bytes", distributed_header.bytes); |

There was a problem hiding this comment.

You forgot to adjust the code path for distributed_directory_monitor_batch_inserts - processFilesWithBatching, see 00e3c21

There was a problem hiding this comment.

Also I was wondering, maybe it worth to use clickhouse.distributed_send. namespace for the metrics?

Changelog category (leave one):

Changelog entry (a user-readable short description of the changes that goes to CHANGELOG.md):

Improve the observability of INSERT on distributed table

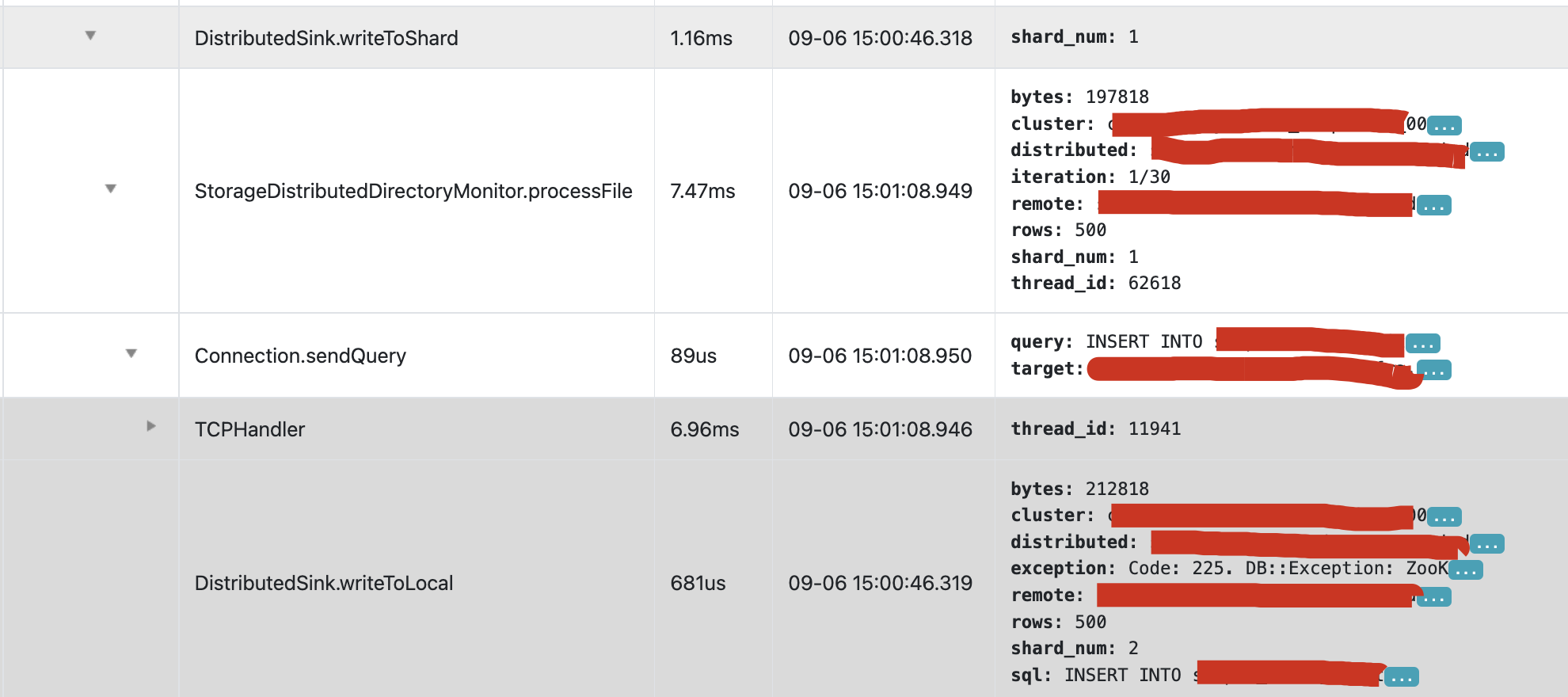

Current INSERT on distributed is executed asynchronously. If the ASYNC insert from distributed table to local table fails, we don't know about that unless we check the text logs on the server. For a managed database service, it's not a good way for users to check such errors.

This PR first add more dimensions/metrics to the header of temp file that are going to be sent to remote tables, including:

And then above information is written into opentelemetry span logs if opentelemetry tracing is enabled.

From the log, it's very clear that how the raw INSERT is splitted into different INSERTs on different shards.

The reason that we write these info to span logs is that we can easily extract these dimension/metrics from span logs with some existing tools, and then visualize these metrics for users.

Following is a demonstration that how we do this based on above information in the span logs.