Why does Clickhouse's memory usage jitter when using Disk S3? #38839

Closed as not planned

Labels

question, st-need-info (We need extra data to continue (waiting for response). Either some details or a repro of the issue.)

Description

Version 22.4.4.7

My storage_configuration is:

    <storage_configuration>
        <disks>
            <s3>
                <type>s3</type>
                <endpoint>http://s3.endpoint/clickhouse-bucket1/data/</endpoint>
                <access_key_id>access_key_id</access_key_id>
                <secret_access_key>secret_access_key</secret_access_key>
                <connect_timeout_ms>120000</connect_timeout_ms>
                <request_timeout_ms>120000</request_timeout_ms>
                <skip_access_check>true</skip_access_check>
            </s3>
        </disks>
        <policies>
            <s3>
                <volumes>
                    <s3>
                        <disk>s3</disk>
                    </s3>
                </volumes>
            </s3>
            <ssd_s3>
                <volumes>
                    <ssd>
                        <disk>default</disk>
                    </ssd>
                    <s3>
                        <disk>s3</disk>
                    </s3>
                </volumes>
                <move_factor>0.2</move_factor>
            </ssd_s3>
        </policies>
    </storage_configuration>
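
For context, my understanding of `move_factor` is that background moves from the `ssd` volume to the `s3` volume start once free space on the local disk drops below that fraction of its total capacity. Below is a minimal Python sketch of that rule as I understand it. The function name `needs_move` and the example sizes are hypothetical and only illustrate the arithmetic; this is not ClickHouse code.

```python
def needs_move(total_bytes: int, free_bytes: int, move_factor: float = 0.2) -> bool:
    """Return True when free space is below move_factor * total capacity.

    Assumption: the check is a plain comparison of free space against
    move_factor times the disk's total size (0.2 in my ssd_s3 policy).
    """
    return free_bytes < total_bytes * move_factor


GIB = 1024 ** 3

# Hypothetical 1000 GiB local disk: the threshold is about 200 GiB free.
print(needs_move(1000 * GIB, 150 * GIB))  # below the threshold, moves expected
print(needs_move(1000 * GIB, 250 * GIB))  # above the threshold, no moves expected
```

If this reading is right, moves to S3 would only happen while the local disk is more than 80% full, which may help correlate the memory spikes with disk usage.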

And my table definition is:

    CREATE TABLE database.table_local
    (
        `upstream_status` Nullable(Int32),
        `upstream_addr` Nullable(String),
        `request_time` Nullable(Float32),
        `remote_user` Nullable(String),
        `upstream_response_time` Nullable(Float32),
        `local_time` DateTime64(3, 'America/Danmarkshavn')
    )
    ENGINE = MergeTree
    PRIMARY KEY local_time
    ORDER BY local_time
    TTL toDateTime(local_time) + toIntervalWeek(2)
    SETTINGS index_granularity = 8192, storage_policy = 'ssd_s3'

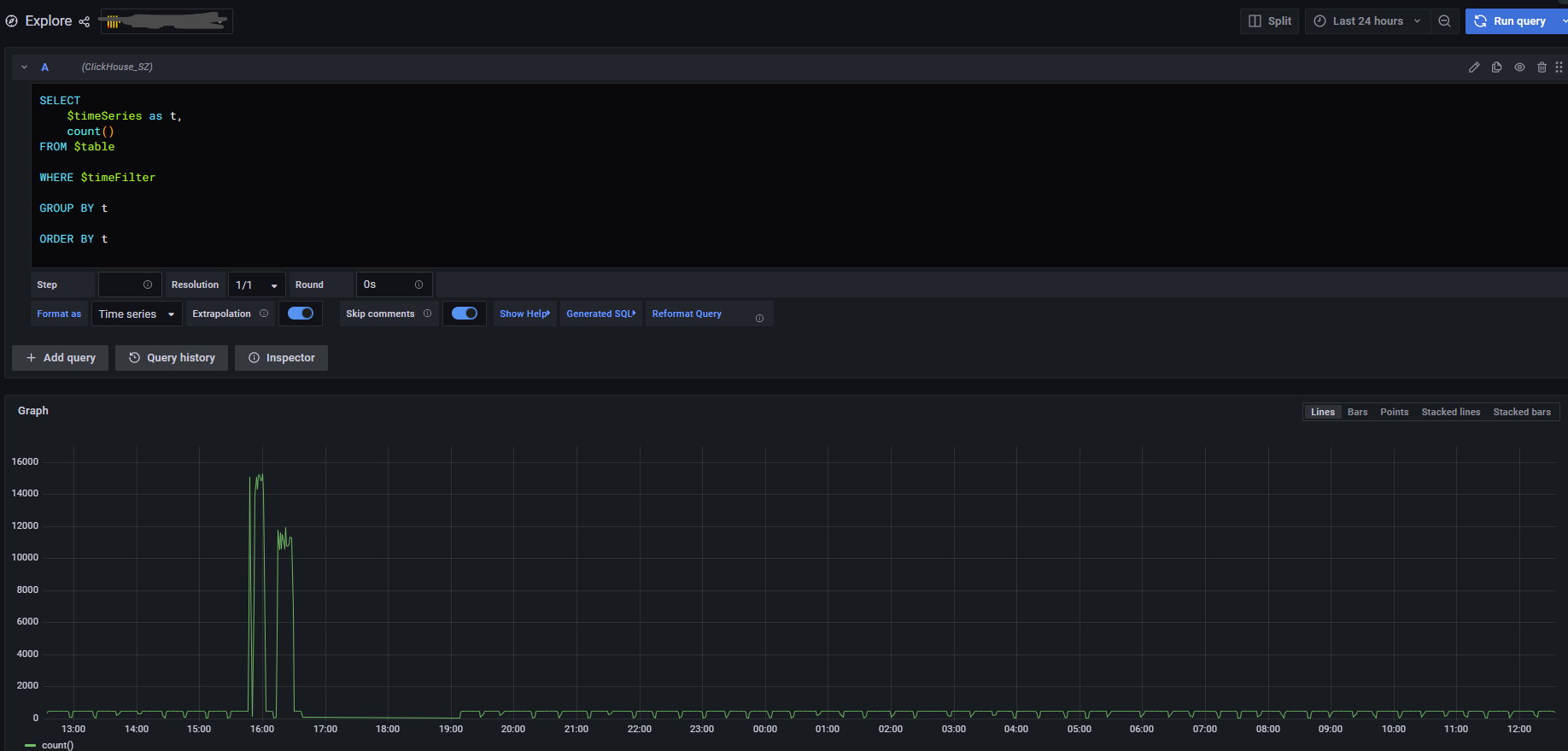

But why does ClickHouse's memory usage jitter when using the S3 disk?

Is it because, when data is moved from the default disk to the S3 disk, a large amount of it is read into memory before being uploaded to S3?

Or is the high memory usage caused by a large number of inserts? That seems unlikely, because throughput has been low over the past day.

So what causes the memory jitter? My program often runs out of memory (OOM) because of it. Can you help and point me in the right direction? Thank you very much.
