Hi community,

Until now, we have seen the following satellite data access mechanisms on the blog:

GEONETCast-Americas:

HRIT/EMWIN:

Amazon / Big Data Project:

Web Interfaces:

Imagery on the web:

Let’s see another mechanism today, the Unidata THREDDS Data Server.

Advantage: The main advantage of downloading data from THREDDS (GOES-16 and GOES-17 data, for example) is that when NOAA’s PDA (Product Distribution and Access) is down for some reason, the data will still be available on the THREDDS Data Server. PDA is the source for NOAA’s GNC-A channel and for the Big Data Project, so when it is down, everything that depends on it is down too. The GOES-16 / GOES-17 data available from TDS is an exception, because its source is a GRB station.

The Unidata THREDDS Data Server (TDS)

THREDDS stands for: Thematic Real-time Environmental Distributed Data Services

According to the official webpage:

The THREDDS Data Server (TDS) is a web server that provides metadata and data access for scientific datasets, using OPeNDAP, OGC WMS and WCS, HTTP, and other remote data access protocols. The TDS is developed and supported by Unidata, a division of the University Corporation for Atmospheric Research (UCAR), and is sponsored by the National Science Foundation.

About the goal of this service:

The goal of Unidata’s Thematic Real-time Environmental Distributed Data Services (THREDDS) is to provide students, educators and researchers with coherent access to a large collection of real-time and archived datasets from a variety of environmental data sources at a number of distributed server sites. The THREDDS Data Server (TDS) is a web server that provides metadata and data access for scientific datasets, using a variety of remote data access protocols.

Please access the TDS Fact Sheet at this link.

The THREDDS Data Server Content

The TDS catalog lists multiple datasets, including Forecast Model Data (GEFS, GFS, etc.), Forecast Products and Analysis, Radar Data (NEXRAD, etc.) and Satellite Data (GOES-16, GOES-17, S-NPP, etc.), among others.

GOES-R / GOES-S content on the THREDDS Data Server

Consider the following tweet:

After taking a look at the mentioned Python Notebook, let’s try to download GOES-R / GOES-S data from the GRB folders on the TDS using Python and Siphon (the folders hold a two-week archive from a GRB station).

Let’s take a look at the GOES-16 GRB directory from THREDDS:

There is a directory for each GOES-16 instrument:

- Advanced Baseline Imager (ABI)

- Extreme Ultraviolet and X-ray Irradiance Sensors (EXIS)

- Geostationary Lightning Mapper (GLM)

- Magnetometer (MAG)

- Space Environment In-Situ Suite (SEISS)

- Solar Ultraviolet Imager (SUVI)

And a directory for the Derived Products.

Inside each dataset directory, the following structure is found:

- Dataset: ABI
  - Sector: CONUS, FullDisk, Mesoscale-1, Mesoscale-2
  - Channel: Channel01 ~ Channel16
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: EXIS
  - Product: SFEU, SFXR
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: GLM
  - Product: LCFA
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: MAG
  - Product: GEOF
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: SEIS
  - Product: EHIS, MPSH, MPSL, SGPS
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: SUVI
  - Product: Fe093, Fe131, Fe171, Fe195, Fe284, He303
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)

- Dataset: Products
  - Sector: CONUS, FullDisk, Mesoscale-1, Mesoscale-2
  - Product: AerosolDetection, AerosolOpticalDepth, CloudAndMoistureImagery, CloudMask, CloudOpticalDepth, CloudParticleSize, CloudTopHeight, CloudTopPhase, CloudTopPressure, CloudTopTemperature, DerivedMotionWinds, DerivedStabilityIndices, FireHotSpot, GeostationaryLightningMapper, LandSurfaceTemperature, LegacyVerticalMoistureProfile, LegacyVerticalTemperatureProfile, RainRateQPE, SeaSurfaceTemperature, TotalPrecipitableWater, VolcanicAshDetection
  - Date: YYYYMMDD (last 14 days) or “Current” (last 24 hours)
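Since the date folders use the YYYYMMDD pattern and cover the last 14 days, the folder names can be generated rather than typed by hand. The snippet below is only a minimal sketch: the helper name `recent_date_folders` is hypothetical, and it assumes the folders are named after the UTC calendar day, which is not confirmed here.

```python
from datetime import datetime, timedelta, timezone

def recent_date_folders(days=14, today=None):
    """Return YYYYMMDD folder names, newest first, for the last `days` days."""
    # Assumption: the date folders are named after the UTC calendar day.
    if today is None:
        today = datetime.now(timezone.utc).date()
    return [(today - timedelta(days=offset)).strftime('%Y%m%d')
            for offset in range(days)]

# Print the candidate folder names for the two-week archive
for folder in recent_date_folders():
    print(folder)
```

Any of these strings could then be used as the date part of a catalog address, provided the folder actually exists on the server.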

Using Siphon to access data from the THREDDS Data Server

Let’s see how we may access this data using Siphon, a collection of Python utilities for downloading data from the THREDDS Data Server.

First, download and install Miniconda: https://conda.io/miniconda.html

To install Siphon, let’s create a conda environment called siphon and install the Siphon utilities into it:

conda create --name siphon

conda activate siphon

conda install -c conda-forge siphon

Downloading ABI L1b Data

These are the required imports:

# Required Modules

from siphon.catalog import TDSCatalog # Code to support reading and parsing catalog files from a THREDDS Data Server (TDS)

import urllib.request # Defines functions and classes which help in opening URLs

Let’s start downloading L1b data from ABI. First of all, let’s understand the TDS URL structure for the ABI L1b data:

https://thredds-test.unidata.ucar.edu/thredds/catalog/satellite/goes16/GRB16/ABI/FullDisk/Channel/Date/catalog.html

Considering this, let’s create the catalog url:

# Unidata THREDDS Data Server Catalog URL

base_cat_url = 'https://thredds-test.unidata.ucar.edu/thredds/catalog/satellite/{satellite}/{platform}/{dataset}/{sector}/{channel}/{date}/catalog.xml'

…and create the variables:

# Desired data

satellite = 'goes16'

platform = 'GRB16'

dataset = 'ABI'

channel = ['Channel13','Channel07']

sector = 'FullDisk'

date = 'current'

# Output directory

outdir = "C:\\GRB\\"
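Before contacting the server, it may help to preview the catalog addresses that the template produces. The sketch below only does string formatting (no network access) with the same template and values as above; the helper name `build_catalog_urls` is hypothetical.

```python
# Same catalog template as in the post
base_cat_url = ('https://thredds-test.unidata.ucar.edu/thredds/catalog/satellite/'
                '{satellite}/{platform}/{dataset}/{sector}/{channel}/{date}/catalog.xml')

def build_catalog_urls(satellite, platform, dataset, sector, channels, date):
    """Return one catalog URL per channel, without touching the network."""
    return [base_cat_url.format(satellite=satellite, platform=platform,
                                dataset=dataset, sector=sector,
                                channel=ch, date=date)
            for ch in channels]

# Preview the URLs for the two channels selected in the post
for url in build_catalog_urls('goes16', 'GRB16', 'ABI', 'FullDisk',
                              ['Channel13', 'Channel07'], 'current'):
    print(url)
```

Opening one of the printed addresses in a browser (with catalog.html instead of catalog.xml) is a quick way to confirm the folder exists.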

To download the most recent data for the selected satellite, sector and channels, use the following code:

# For each channel
for ch in channel:

    # Build the catalog URL for this channel
    cat_url = base_cat_url.format(satellite=satellite, platform=platform, dataset=dataset, sector=sector, date=date, channel=ch)

    # Access the catalog
    cat = TDSCatalog(cat_url)

    # Get the latest dataset available
    ds = cat.datasets[-1]

    # Get the URL
    url = ds.access_urls['HTTPServer']

    # Download the file
    urllib.request.urlretrieve(url, outdir + ds.name)
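Taking the last entry of the catalog assumes that the server lists the files in time order. If that is a concern, the newest file can be chosen from the file names instead. The sketch below assumes the names carry a start-time field of the form `_sYYYYJJJHHMMSSs` (year, day of year, hour, minute, second, tenth of a second); the helper names and the sample file names are made up for illustration.

```python
import re
from datetime import datetime, timedelta

# Assumption: names contain a start-time field such as "_s20192781200123"
START_RE = re.compile(r'_s(\d{4})(\d{3})(\d{2})(\d{2})(\d{2})\d')

def scan_start(name):
    """Return the scan start time parsed from a file name, or None."""
    match = START_RE.search(name)
    if match is None:
        return None
    year, doy, hour, minute, second = (int(part) for part in match.groups())
    # Day of year 1 is January 1st
    return datetime(year, 1, 1) + timedelta(days=doy - 1, hours=hour,
                                            minutes=minute, seconds=second)

def latest_by_start(names):
    """Return the name with the most recent start time."""
    dated = [(scan_start(name), name) for name in names]
    dated = [(start, name) for start, name in dated if start is not None]
    if not dated:
        raise ValueError('no file name with a start-time field')
    return max(dated)[1]

# Made-up sample names, only to show the behaviour
sample = ['OR_ABI-L1b-RadF-M6C13_G16_s20192781210123_e20192781219431_c20192781219480.nc',
          'OR_ABI-L1b-RadF-M6C13_G16_s20192781200123_e20192781209431_c20192781209480.nc']
print(latest_by_start(sample))
```

With Siphon, something like `ds = cat.datasets[latest_by_start(list(cat.datasets))]` could then stand in for `cat.datasets[-1]`, assuming the dataset collection is keyed by file name.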

This is the full script:

# Required Modules

from siphon.catalog import TDSCatalog # Code to support reading and parsing catalog files from a THREDDS Data Server (TDS)

import urllib.request # Defines functions and classes which help in opening URLs

# Unidata THREDDS Data Server Catalog URL

base_cat_url = 'https://thredds-test.unidata.ucar.edu/thredds/catalog/satellite/{satellite}/{platform}/{dataset}/{sector}/{channel}/{date}/catalog.xml'

# Desired data

satellite = 'goes16'

platform = 'GRB16'

dataset = 'ABI'

channel = ['Channel13','Channel07']

sector = 'FullDisk'

date = 'current'

# Output directory

outdir = "C:\\GRB\\"

# For each selected channel
for ch in channel:

    # Build the catalog URL for this channel
    cat_url = base_cat_url.format(satellite=satellite, platform=platform, dataset=dataset, sector=sector, date=date, channel=ch)

    # Access the catalog
    cat = TDSCatalog(cat_url)

    # Get the latest dataset available
    ds = cat.datasets[-1]

    # Get the URL
    url = ds.access_urls['HTTPServer']

    # Download the file
    urllib.request.urlretrieve(url, outdir + ds.name)

'''
OPTIONS:

satellite: goes16, goes17

platform: GRB16, GRB17

dataset: ABI, EXIS, GLM, MAG, Products, SEIS, SUVI

product (by dataset):
    EXIS: SFEU, SFXR
    GLM: LCFA
    MAG: GEOF
    SEIS: EHIS, MPSH, MPSL, SGPS
    SUVI: Fe093, Fe131, Fe171, Fe195, Fe284, He303
    Products: AerosolDetection, AerosolOpticalDepth, CloudAndMoistureImagery,
        CloudMask, CloudOpticalDepth, CloudParticleSize, CloudTopHeight,
        CloudTopPhase, CloudTopPressure, CloudTopTemperature, DerivedMotionWinds,
        DerivedStabilityIndices, FireHotSpot, GeostationaryLightningMapper,
        LandSurfaceTemperature, LegacyVerticalMoistureProfile,
        LegacyVerticalTemperatureProfile, RainRateQPE, SeaSurfaceTemperature,
        TotalPrecipitableWater, VolcanicAshDetection

sector: CONUS, FullDisk, Mesoscale-1, Mesoscale-2

channel: Channel01 - Channel16

date: current (last 24 hours) or YYYYMMDD
'''
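The script above targets ABI, whose folders are split by sector and channel. For the instrument datasets that are split by product only (EXIS, GLM, MAG, SEIS, SUVI), the catalog address would need a different shape. The sketch below is a guess based on the directory listing in this post, not something checked against the server: it assumes the layout satellite/platform/dataset/product/date, and the helper name `instrument_catalog_url` is hypothetical.

```python
# Root of the satellite catalog, as used in the post
base = 'https://thredds-test.unidata.ucar.edu/thredds/catalog/satellite/'

def instrument_catalog_url(satellite, platform, dataset, product, date):
    """Build a catalog URL for a dataset organised by product (no network access)."""
    # Assumed layout: {satellite}/{platform}/{dataset}/{product}/{date}/catalog.xml
    return base + '/'.join([satellite, platform, dataset, product, date, 'catalog.xml'])

# Example: GLM LCFA files from the last 24 hours
print(instrument_catalog_url('goes16', 'GRB16', 'GLM', 'LCFA', 'current'))
```

If the resulting address does not open, browsing the catalog from the dataset folder downwards should reveal the real layout.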

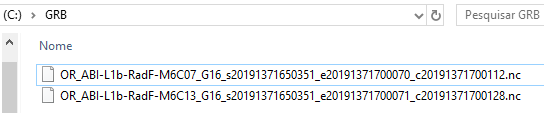

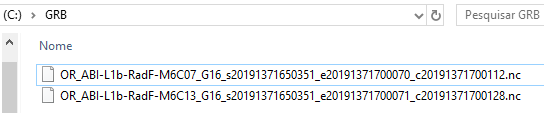

In the selected “outdir” (in our case, “C:\GRB\”), you’ll see the files downloaded from TDS.

Stay tuned for news.
