The eMOTIONAL Cities project has set out to understand how the natural and built environment can shape the feelings and emotions of those who experience it. At its core lies a Spatial Data Infrastructure (SDI) which combines a variety of datasets from the Urban Health domain. These datasets are meant to be available to urban planners, neuroscientists and other stakeholders for analysis, for creating data products and, eventually, for making decisions based on them.

Although most datasets are small (with few exceptions), scientists often want to combine several of them in the same analysis, and that is where an efficient format pays off. For this reason, we recently decided to offer GeoParquet as an alternative encoding for the 100+ vector datasets published in the SDI.

What is GeoParquet

For those who have been distracted, GeoParquet is a format for encoding vector data in Apache Parquet. There is no reinventing the wheel here: Apache Parquet is a free and open-source, column-oriented data storage format that provides efficient compression and encoding schemes for handling complex data in bulk. GeoParquet simply adds spatial support on top of Parquet, leveraging the fact that most cloud data warehouses already understand it to achieve interoperability. Although GeoParquet started as a community effort, it is now on the path to becoming an OGC Standard, and you can follow (or even contribute to) the spec at: https://github.com/opengeospatial/geoparquet

GeoParquet extends Parquet by adding metadata about the file and about each geometry column; the number of mandatory metadata fields is kept to a minimum, with some nice-to-have optional features.
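To make this concrete: in the GeoParquet 1.x specification, the metadata is a JSON document stored under the "geo" key of the Parquet file's key-value metadata. It has three required file-level fields (`version`, `primary_column`, `columns`) and two required fields per geometry column (`encoding`, `geometry_types`); fields such as `crs` or `bbox` are optional. The Python sketch below is our own illustration, not part of the project's tooling: it only checks that the required keys are present and is no substitute for a full validator. The example metadata is hypothetical.

```python
import json

# Required keys in GeoParquet 1.x "geo" metadata (file level and per geometry column).
REQUIRED_FILE_KEYS = {"version", "primary_column", "columns"}
REQUIRED_COLUMN_KEYS = {"encoding", "geometry_types"}


def check_geo_metadata(raw: str) -> list:
    """Return a list of problems found in a GeoParquet "geo" metadata string."""
    problems = []
    meta = json.loads(raw)
    missing = REQUIRED_FILE_KEYS - meta.keys()
    if missing:
        problems.append(f"missing file-level keys: {sorted(missing)}")
        return problems
    columns = meta["columns"]
    if meta["primary_column"] not in columns:
        problems.append("primary_column is not described in 'columns'")
    for name, col in columns.items():
        missing_col = REQUIRED_COLUMN_KEYS - col.keys()
        if missing_col:
            problems.append(f"column {name!r} missing keys: {sorted(missing_col)}")
    return problems


# Hypothetical metadata for a polygon layer; optional fields are omitted.
example = {
    "version": "1.0.0",
    "primary_column": "geometry",
    "columns": {"geometry": {"encoding": "WKB", "geometry_types": ["Polygon"]}},
}
print(check_geo_metadata(json.dumps(example)))
```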

Converting & Publishing the Data

Although it is a relatively new format, GeoParquet already sports a vibrant ecosystem of implementations to choose from. After a few experiments, we decided to use the GDAL library to convert the datasets, as it integrates better with our existing pipeline.

Our source datasets are hosted in an S3 bucket in GeoJSON format, and we wanted to store the GeoParquet files in S3 as well, so the idea was to read and write the files directly from and to S3.

Our pipeline wraps GDAL in a bash script, which reads all the GeoJSON files in a folder of an S3 bucket and writes the resulting GeoParquet files to a different folder in the same bucket.

Note that GeoParquet support requires GDAL > 3.8.4; to make things easier, we run GDAL from a Docker container that ships the required version. The script is available in the etl-tools repository of the eMOTIONAL Cities project under an MIT license.
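The actual conversion script lives in the etl-tools repository. Purely as an illustration, the Python sketch below builds the kind of `ogr2ogr` call such a script issues for each file: GDAL addresses S3 objects through its `/vsis3/` virtual file system, and `-f Parquet` selects its Parquet driver. The bucket name, folder names and object keys are hypothetical, and we assume AWS credentials are supplied to GDAL through the environment (for example `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY`).

```python
import posixpath
import shlex

# Hypothetical names, for illustration only; substitute your own bucket and folders.
BUCKET = "example-bucket"
SRC_PREFIX = "geojson"
DST_PREFIX = "geoparquet"


def ogr2ogr_command(key: str) -> list:
    """Build an ogr2ogr call converting one GeoJSON object in S3 to GeoParquet.

    Both paths use GDAL's /vsis3/ virtual file system, so data is read from and
    written to the bucket without an explicit local copy.
    """
    stem = posixpath.splitext(posixpath.basename(key))[0]
    src = f"/vsis3/{BUCKET}/{key}"
    dst = f"/vsis3/{BUCKET}/{DST_PREFIX}/{stem}.parquet"
    # -f Parquet selects GDAL's Parquet driver; the destination precedes the source.
    return ["ogr2ogr", "-f", "Parquet", dst, src]


def plan(keys):
    """Return one ogr2ogr command per GeoJSON object under SRC_PREFIX."""
    return [
        ogr2ogr_command(k)
        for k in keys
        if k.startswith(SRC_PREFIX + "/") and k.endswith(".geojson")
    ]


# Hypothetical object keys; a real script would list the bucket folder instead.
keys = ["geojson/hex350_grid_obesity_1920.geojson", "geojson/README.txt"]
for cmd in plan(keys):
    # Each command could be run with subprocess.run(cmd, check=True)
    # inside a container that provides a suitable GDAL version.
    print(shlex.join(cmd))
```

Keeping the command as an argument list rather than a shell string avoids quoting problems with unusual file names; `shlex.join` is only used to print it.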

The GeoParquet files were validated directly from the S3 bucket using gpq, a lightweight tool written in Go that can both create and validate GeoParquet files. The gpq CLI was wrapped in another bash script, available here. No validation errors were found in the generated GeoParquet files.
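gpq performs the real GeoParquet validation. As a much cruder complementary pre-check (our own addition, not part of the project's scripts), the sketch below inspects only the Parquet framing: a Parquet file starts with the magic bytes `PAR1` and ends with a 4-byte little-endian footer length followed by `PAR1` again. Because it needs only the first 4 and last 8 bytes, the same check could be applied to S3 objects with HTTP range requests; this is an assumption about how one might use it, and it does not look at the "geo" metadata at all.

```python
import os
import struct

MAGIC = b"PAR1"


def looks_like_parquet(head: bytes, tail: bytes, size: int) -> bool:
    """Check Parquet framing from the first 4 and last 8 bytes of a file.

    Layout: PAR1 | data | footer | 4-byte little-endian footer length | PAR1.
    This does NOT validate GeoParquet metadata; use gpq for that.
    """
    if size < 12 or len(head) < 4 or len(tail) < 8:
        return False
    if head[:4] != MAGIC or tail[-4:] != MAGIC:
        return False
    (footer_len,) = struct.unpack("<I", tail[-8:-4])
    # The footer must fit between the leading magic and the trailing 8 bytes.
    return 0 < footer_len <= size - 12


def check_file(path: str) -> bool:
    """Apply looks_like_parquet to a local file, reading only its two ends."""
    size = os.path.getsize(path)
    if size < 12:
        return False
    with open(path, "rb") as f:
        head = f.read(4)
        f.seek(-8, os.SEEK_END)
        tail = f.read(8)
    return looks_like_parquet(head, tail, size)
```

A check like this catches truncated or mislabeled uploads cheaply, leaving gpq to report on the spatial metadata itself.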

To make the GeoParquet datasets discoverable, we added a link to each collection record in the eMOTIONAL Cities catalogue, with the relation type “item” and the media type “application/vnd.apache.parquet”, which clients can negotiate. See the example below for the hex350_grid_obesity_1920 collection.

```json
{
  "rel": "item",
  "type": "application/vnd.apache.parquet",
  "title": "GeoParquet download link for hex350_grid_obesity_1920"
}
```
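On the client side, picking out the GeoParquet encoding amounts to filtering a record's links by relation and media type. The Python sketch below shows one way to do that with the standard library; the record, including its `id` and `href` values, is a hypothetical excerpt with placeholder URLs, not a real catalogue response.

```python
import json

PARQUET_MEDIA_TYPE = "application/vnd.apache.parquet"


def geoparquet_links(record: dict) -> list:
    """Return the links of a catalogue record that advertise a Parquet item."""
    return [
        link
        for link in record.get("links", [])
        if link.get("rel") == "item" and link.get("type") == PARQUET_MEDIA_TYPE
    ]


# Hypothetical record excerpt; the hrefs are placeholders, not real catalogue URLs.
record = json.loads("""
{
  "id": "hex350_grid_obesity_1920",
  "links": [
    {"rel": "self", "type": "application/geo+json",
     "href": "https://example.org/records/hex350_grid_obesity_1920"},
    {"rel": "item", "type": "application/vnd.apache.parquet",
     "title": "GeoParquet download link for hex350_grid_obesity_1920",
     "href": "https://example.org/data/hex350_grid_obesity_1920.parquet"}
  ]
}
""")

for link in geoparquet_links(record):
    print(link["title"], "->", link["href"])
```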

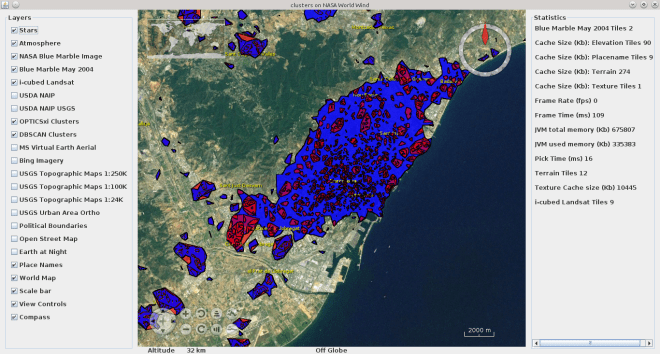

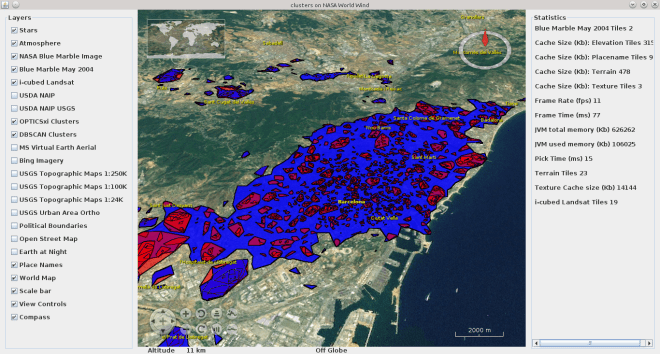

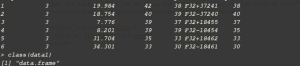

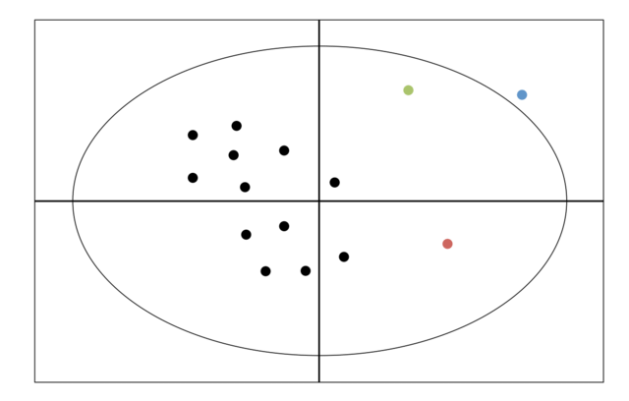

The chart above shows the size of one of our largest datasets (activity_level_ldn) in different formats. GeoParquet produces a smaller file, even when compared with binary formats such as Shapefile or GeoPackage. Multiplied across a large number of datasets, these smaller sizes translate into cost savings for hosting data; they also provide a better experience for users who stream these datasets over the web for analysis.

The chart above shows the total size of eMOTIONAL Cities datasets in various formats.
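To reproduce this kind of comparison on your own exports, a sketch like the one below sums file sizes per format. It assumes a hypothetical local folder (`exports`) holding the same datasets exported to each format, and it counts a Shapefile's sidecar files (.shp, .shx, .dbf, .prj, .cpg) together, since a Shapefile is never a single file; this matters when comparing it with single-file formats.

```python
import os
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple

# Map file extensions to formats; a Shapefile spans several sidecar files.
FORMAT_BY_EXT = {
    ".geojson": "GeoJSON",
    ".gpkg": "GeoPackage",
    ".parquet": "GeoParquet",
    ".shp": "Shapefile",
    ".shx": "Shapefile",
    ".dbf": "Shapefile",
    ".prj": "Shapefile",
    ".cpg": "Shapefile",
}


def walk_sizes(root: str) -> Iterator[Tuple[str, int]]:
    """Yield (file name, size in bytes) for every file under root."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            yield name, os.path.getsize(os.path.join(dirpath, name))


def sizes_by_format(entries: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Sum sizes per vector format; files with unknown extensions are skipped."""
    totals: Dict[str, int] = defaultdict(int)
    for name, size in entries:
        fmt = FORMAT_BY_EXT.get(os.path.splitext(name)[1].lower())
        if fmt is not None:
            totals[fmt] += size
    return dict(totals)


if __name__ == "__main__":
    # "exports" is a hypothetical folder; a missing folder simply prints nothing.
    for fmt, size in sorted(sizes_by_format(walk_sizes("exports")).items()):
        print(f"{fmt:<12} {size / 1e6:8.2f} MB")
```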

Socializing the Results

Although the GeoParquet files are discoverable by machines through the OGC API – Records catalogue, more work is needed to make sure that humans are aware of them too. Here are a few initiatives we have undertaken, or plan to undertake, to socialize these results and encourage users to take advantage of the GeoParquet datasets exposed in the SDI:

- May 2024: GeomobLX – Lightning talk about “GeoParquet”.

- October 2024: eMOTIONAL Cities webinar about GeoParquet (TBD)

- December 2024: FOSS4G World – “Adding GeoParquet to a Spatial Data Infrastructure: What, Why and How” (submitted talk)

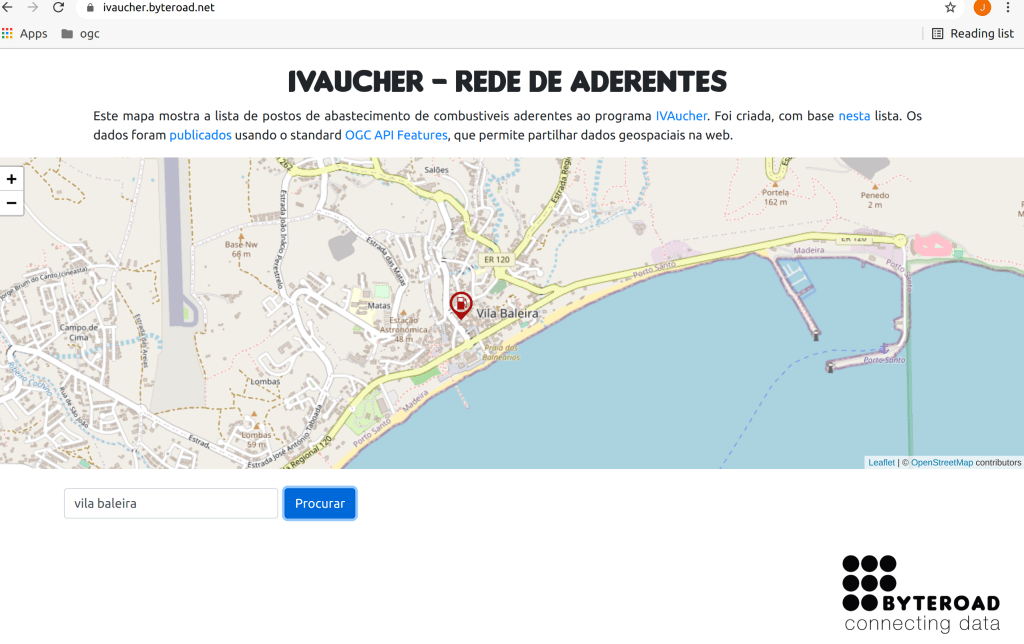

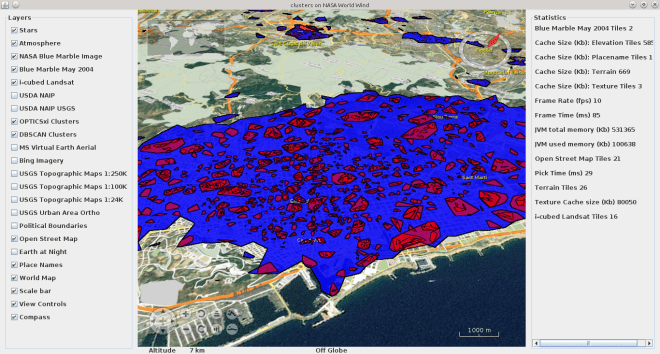

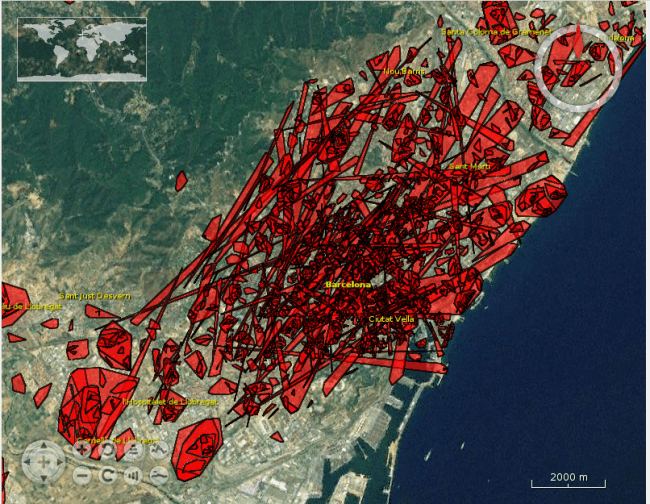

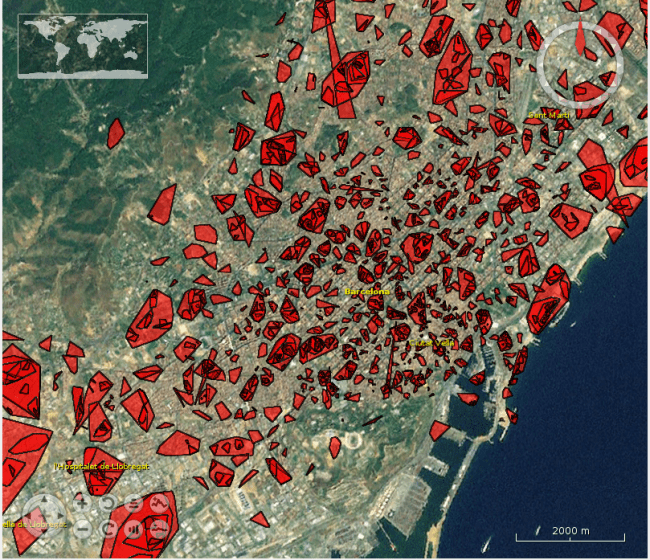

The image above shows an eMOTIONAL Cities GeoParquet dataset in QGIS. Out-of-the-box support in widely used tools like QGIS is one of the most exciting things about GeoParquet, but we need to make sure that users know about it.

If you are curious to get your hands on some real examples, the entire eMOTIONAL Cities catalogue is available to you. Each metadata record contains a link to the corresponding GeoParquet file, which you can download locally or stream into your Jupyter notebook.
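For the "download locally" route, a minimal standard-library sketch is shown below: it streams the file in fixed-size chunks so memory use stays flat even for the larger datasets. The URL in the usage comment is a placeholder; take the real one from the record's `application/vnd.apache.parquet` link. Once the file is on disk, `geopandas.read_parquet` (which requires pyarrow) can load it into a GeoDataFrame, or you can simply open it in QGIS.

```python
import urllib.request


def download(url: str, dest: str, chunk_size: int = 1 << 20) -> int:
    """Stream url to dest in chunks of chunk_size bytes; return bytes written."""
    written = 0
    with urllib.request.urlopen(url) as response, open(dest, "wb") as out:
        while True:
            chunk = response.read(chunk_size)
            if not chunk:  # an empty read signals end of stream
                break
            out.write(chunk)
            written += len(chunk)
    return written


# Usage (placeholder URL, for illustration only):
# download("https://example.org/data/hex350_grid_obesity_1920.parquet",
#          "hex350_grid_obesity_1920.parquet")
```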

This blog post, and the work leading to it, were made possible by the collaboration of my colleague Pascallike. A big thanks to him!