Ramblings of a retired data architect

Let me start by saying that I have been working with data for over thirty years. I think that just means I am old. Anyway, I have written blog posts, delivered presentations, and authored books on these languages through the years. Understanding and using these languages have grown and shaped my career through the years. I thought it would be fun to discuss my thoughts on each one. This is my take and some of my thoughts are definitely “tongue in cheek.” So, enjoy the ride and feel free to share your take in the comments.

- SQL – ubiquitous and relational

- MDX – complex and dimensional

- DAX – formulaic and columnar

- Thoughts and musings

SQL, structured query language

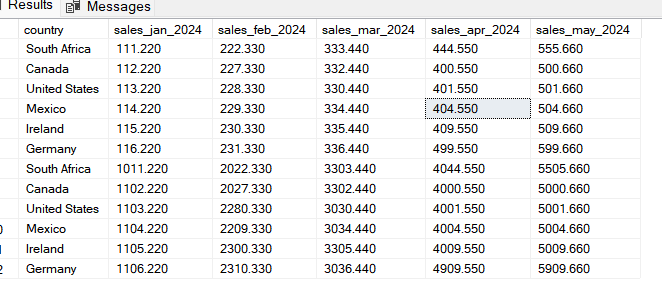

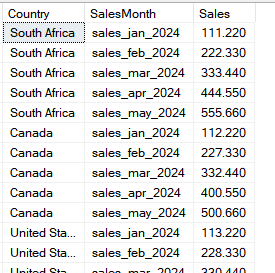

SQL is the oldest of the languages. It was designed to support relational databases (RDBMS). It is built on math principles to improve performance and optimize storage. Normalization rules were established to guide developers on the preferred approaches to building databases.

Why is SQL ubiquitous?

SQL is everywhere. SQL is the query language of choice for enterprise data platforms such as Microsoft SQL Server and Oracle. Open-source data platforms like MySQL and PostgreSQL are also built to use SQL.

How is this possible? SQL is an ANSI standard. This means that the core of the language is managed by a governing body. If you learn SQL, you should be able to write queries in all these databases, right? Sort of. You should be able to write a query like this in all the databases: SELECT field1, field2 FROM table WHERE field3 = 50.

However, vendors often implement their own variations of SQL to meet needs in their platform design or to provide nonstandard functionality to their users. (Engineering outpaces standards development.) For example, Microsoft created TSQL and Oracle created PL/SQL. One of my first experiences with this was returning a single row in query. I used TOP 1 in SQL Server, but there was no TOP keyword in Oracle.

Code examples

SQL Server

SELECT TOP 1 column1, column2

FROM table_name

WHERE condition;Oracle

SELECT column1, column2

FROM table_name

WHERE condition

AND ROWNUM = 1;PostgreSQL / MySQL

SELECT column1, column2

FROM table_name

WHERE condition

LIMIT 1;What does it all mean?

You can learn SQL and be efficient querying data from multiple data platforms. Whether you are a data engineer or a data analyst, you must know SQL if you are to be taken seriously as a data professional.

MDX, multidimensional expressions

I was introduced to MDX by SQL Server Analysis Services (SSAS). For me, it just clicked. More about that in a bit. MDX, like SQL, is heavily based on math. Whereas SQL is two dimensional (column and rows), MDX can theoretically use an unlimited number of dimensions. The number is limited in practice by the capability of the data platform. MDX was primarily used by two vendors, Microsoft and Hyperion.

One key difference between the two platforms is their purpose. Relational databases are optimized for transactions and small result sets. Multidimensional databases are built for analysis across huge datasets.

Why is MDX considered complex?

The toughest part for most data professionals is visualizing multidimensional datasets in their minds. Relational data is easy to visualize. It looks like a spreadsheet. Multidimensional data is not that simple. We call it a cube, but that is a simplistic representation with only three dimensions. It is a cool name though.

Earlier in my career I coached data consultants on their transition to BI consultants. As I helped a consultant with MDX, I told him at some point he would “get it.” I told him to call me when he did. Six months later he called me, told me that he got it, and hung up on me. Many consultants didn’t get it and either just forced their way through it or went back to relational.

MDX was designed to traverse dimensions, build sets, and aggregate values across those sets. I mentioned earlier that MDX made sense to me right away. The first time I was exposed to MDX, I learned about the various functions and methods to work with dimensions including child, parent, descendants, and ancestors. You could think of dimensions like family trees. I took a class in college about familial relationships which used similar concepts. My degree is in cultural anthropology.

- Another difficult concept to master is context. You must understand the set or slice of data you are working with in a query.

- Once you understand context, you need to realize that every measure is an aggregate of every dimension whether a part of the query or not.

- Results can be shaped in multiple dimensions. Three or more dimensions cannot be visualized. If you want to visualize the data in a report, it needs to be formed into columns and rows.

Multidimensional databases and MDX are extremely powerful but complex. I enjoyed working with them and became one of the few experts in the technology. However, multidimensional databases and MDX are rarely used today. Microsoft is not advancing the technology, instead promoting columnar data structures.

DAX, data analysis expressions

My first experience with DAX was when PowerPivot was released with Excel. It was then that I saw the writing on the wall for MDX. DAX is simpler and more approachable than MDX. Microsoft then added tabular models built on the same data engine, Vertipaq. Eventually culminating in the Power BI model. The underlying data engine is a highly optimized columnar data structure.

Admittedly, I have the least amount of hands-on experience with DAX. However, I disliked it early on. Unlike SQL and MDX, DAX is not built around math principles and is not a query language. It is built with expressions. Instead of SELECT, it starts with an equal sign (=). This is more intuitive for Excel users. Early on, it was very frustrating for me.

DAX is continually being improved. Microsoft is also continuing to improve the underlying data engine and storage subsystem. Power BI models are one of the foundational building blocks of Microsoft Fabric.

Should you learn DAX?

If your business uses Power BI, then yes. DAX is used to aggregate, shape, and format data for usage by end users. It is not necessary for data engineers who don’t present data to end users.

Thoughts and musings

My first recommendation is to learn SQL if you want to be taken seriously as a data professional. It has been around since the beginning and will be around for a while to come. Relational data platforms are integrating columnar data storage technology which gives SQL users access to the performance available in Power BI models.

While I contend that MDX is more powerful, I concur that DAX is more approachable. As MDX goes the way of COBOL, SQL remains the powerhouse. Learn DAX if you intend to use Power BI, otherwise don’t bother.

That is my 2 cents. Have a different opinion? Sound off in the comments below.