I have been active in the data community throughout my career. I have met people and made friends in the process. As I look back on it, I am thankful I was involved and participated. I firmly believe you should as well.

Contents

- The value of data communities

- Why you should be a contributor

- Write about it

- Talk about it

- Are you ready to ramble?

The value of data communities

I want to kick off this section with my experience with community, then delve into the value of being involved in the data community.

A little history

When I started my consulting career in SQL Server nearly 25 years ago, I was a newbie. I came from a background in Microsoft Access. At some point, I got connected with some other SQL Server professionals in Minnesota. We decided to create a user group – Minnesota SQL Server User Group. Eventually, we joined PASS.

This was my first experience in the data community. I made many friends in this community. We supported each other’s skills and career growth. We helped each other with technical issues and shared technical wins.

The value of community is community

You should definitely participate in communities. The best option is to take the time, usually once a month, to engage in community. Meeting in person is preferred because you can focus on the people and the topic. Talk to each other. If you join a virtual group, participate! Don’t do something else during the meeting. Engage in the comments, Q&A, and any banter. While virtual group meetings are more convenient, the onus is on you to interact. If there is no option to interact with each other, it is a webinar, not a user group. Find a user group.

The value of the data community is the community. Yes, we can and will learn from each other. Knowing and networking with peers leads to more growth and maturity as a person and a professional.

Why you should be a contributor

Simply put, if everyone is a consumer, the community dies. The community is not intended to be a school with a couple of teachers and a bunch of students. In a true community, we are all contributors. Every user group has consumers and contributors. To be clear, not everyone who contributes leads the group or gives talks. Some ask questions, others stick around for discussions, and some extend a hand to welcome others. Consumers come, listen, and leave. Introversion is not a good excuse. Some of the best contributors I know are introverts.

Storytelling

We all have a story to tell, from “it’s all new to me” to “I have been doing this for 20 years.” Everyone can give back to the group through questions, advice, inclusion, and insights. The key is proactively engaging and including. In this way, we build friendships, grow careers, and expand horizons. You miss out on all this if you only consume.

I am going to expand on two specific types of contributions in the remainder of this post – writing and talking. These are two ways to tangibly contribute to the community.

Write about it

When you write it down, you will remember it better. Writing for others forces clarity, accuracy, and defensibility. I call this “writing with CAD.” When we write to share with the community, we are compelled to write this way.

Clarity

Writing for others forces us to be clear about the topic. We need to organize our thoughts and write with purpose. We have to ask what we are writing about and whether it makes sense. I think this includes good editing. I highly recommend using tools that check grammar and spelling, like Microsoft Editor in Office and Edge. Be careful using tools like Grammarly and Copilot. Use AI to clarify thoughts and not generate them.

Accuracy

Accuracy is especially important in technical writing. We should never assume our readers can fill in the blanks. If you’re writing a step-by-step blog, make sure you have all the steps. Be sure to include any context or assumptions. While we cannot guarantee that we didn’t miss anything, we should do our best to be precise so our readers can reproduce, practice, or implement what we are writing about.

Defensibility

Are you prepared to defend what you are writing about? I don’t mean this negatively. You should be able to explain why you did it that way or why you think you are correct. Sometimes this is part of the comment or post. Other times you just need to be prepared to answer questions. Defensibility is about being prepared.

To be clear, defensibility does not mean you are always right. Be prepared to hear new ideas and accept corrections. You can’t and won’t know everything. But it is important to know your “why.”

My motivation to write

When I started to blog, I made the mistake of writing for others. I made a decision early on to change the goal of my content. No longer would I try to write about what I think people would read. I decided to write for me. My technical writing became my personal knowledge base. If no one reads it, oh well. It was the best decision I made, and I recommend it for new bloggers all the time.

One great example from my writing is my series on Excel. I was embedding Excel workbooks into SharePoint. The workbooks were backed by SQL Server Analysis Services cubes. The goal was to make elegant dashboards without looking like Excel. This tips and tricks series has many of my most-read posts, and some are still being viewed today. I wrote them as a reference for me, and others still find them helpful.

Next, I want to look at some ways you can contribute to the community through writing. It’s not just about blogging.

Where to write

If you’re interested in starting to write, here are some good options. If you’re already writing, maybe try something new.

Blogging

This is where I started in 2010. However, it’s not necessarily the easiest option. There are many decisions to be made to launch a blog.

- Where to host it? I use and like WordPress. We are still using the free version. I have seen blogs on Medium lately. Check out this article on the best options for free blogging platforms.

- What to call it? You can be creative here.

- Deciding on your first article.

- Where to promote it? LinkedIn, Facebook, X?

One reason I like blogging is that I own and control my content. I can point people to my blog, and it is clearly my work.

LinkedIn articles

LinkedIn articles can be a nice way to start writing. You can also promote a newsletter for people to subscribe to. It comes with a built-in promotional platform. You are on LinkedIn after all.

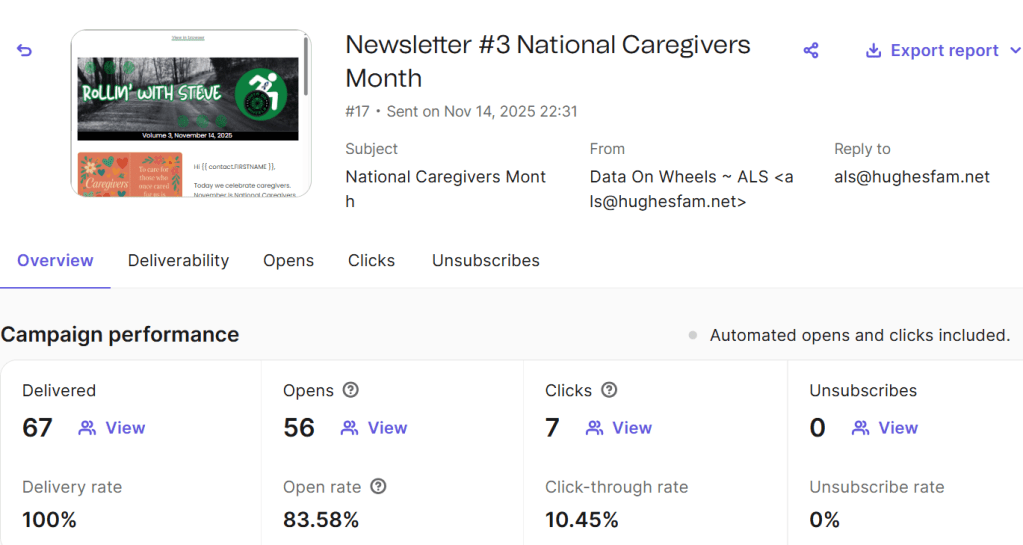

Data on Rails

Not sure where to start? We have a shared blog site where you can write a couple of posts to see if you like blog writing. We will promote your work as well.

If you like it and want to start your own blog, great! We encourage you to take your content to help kick it off. You are always welcome to keep writing here if you prefer to.

Commenting

The last area I want to cover is commenting. This is a great way to piggyback onto topics, content, or questions posted by others. You can share your thoughts, insights, and stories with others easily. Some of these can serve as prompts for your own content. Here are a few options for joining the conversation.

- Microsoft forums

Talk about it

Most technologists are afraid of public speaking. Even now some of you are getting queasy just thinking about it. But talking about what you know and what you are learning is a great way to give back to the community. Speaking on a topic requires you to succinctly describe what you are talking about.

I have used speaking opportunities to share my experience with a product, pattern, and code. What I have found is that I always have gaps to fill in about the topic, so I end up learning more than I knew before I started.

I know getting started can be hard. Here is a pattern that may be helpful.

- Choose

- Prepare

- Practice

- Present

- Improve

Let’s break these down.

Choose

When you start out, choose something you are familiar with and think is cool. You should be excited and comfortable with your topic. Also, try to be concise. A narrow topic is easier to prepare for.

Prepare

This is where most people get stuck. They start out with the wrong questions. What do I need? A presentation? A demo? Wrong questions. What you really need is the outline. An outline will help you stay focused. Start out by identifying three points, no more, no less, to make about your topic. I would recommend writing them down.

Once you have them ready, fill in the blanks. What do you want to say about each point? Do you have sample code? A picture of a whiteboard? Lessons learned? Compile these into a document. Now you can expand your outline. It could look something like this:

- Topic: working with window functions in SQL Server

- What are window functions

- What they do

- Why I needed them

- How to build one

- Sample code

- Define key functions

- Partition

- Over

- Order by

- Aggregation

- Sample code of my use case

The presentation

Now you can build a presentation. You should start a slide deck with the following slides.

- Title. Includes topic and your name.

- Who you are. Name, role, something interesting about you.

- Introduction. Topic, why the topic interests you.

- First point.

- Second point.

- Third point.

- Lessons learned. How did it help you? Or a quick summary about how to use it.

- Thank you. Q & A, references.

You may need extra slides for some of your points, especially if you have sample code or diagrams. Don’t add too many extra slides. Remember that the slides support what you are talking about. If you want to read something, use a printed document. DO NOT JUST READ YOUR SLIDES!

Practice

People practice in different ways. You should try a couple to find what works best for you. Whatever works for you is the right way for you to prepare. Here are some examples that you could use:

- Practice in front of a mirror

- Use PowerPoint’s timing feature

- Run through it with a friend

- Do a dry run with an experienced speaker

- Rehearse in your head

Present

You get to do your presentation. Exciting!

Improve

After your presentation, be critical of it, in a good way. We can always improve. If the event has reviews, use them to make improvements. Don’t try to change everything; focus on one or two things.

Demos are risky. If something goes wrong, have a backup ready. You don’t want to troubleshoot your demo live. I had slides with screenshots ready to go. Everyone has demos fail. I don’t recommend demos for your first presentation.

Where to speak

Next, we will look at some good opportunities to speak.

User groups

User groups are a great opportunity to speak to a friendly audience.

Lunch and learn

Lunch and learns are an informal way to get comfortable talking about your topic. Usually, you do these with your peers at work or with a client.

Small conferences

SQL Saturdays, Days of Data, and Data Saturdays are examples of small conferences. These are the next step after user groups.

Calls for presenters

When you are ready to stretch your wings, you can find many opportunities to present here.

Career growth

While community involvement benefits the community, it also benefits your career. Not only can you build up your resume, but you can also build up your professional network.

Are you ready to ramble?

Well, if you made it this far, I hope I have inspired you to get involved. Many people have started out small and grown their professional careers using these activities. Everyone can contribute, even you. Let us know what you do in the comments. We would love to hear from you.

Ramblings of a retired data architect

Let me start by saying that I have been working with data for over thirty years. I think that just means I am old. I thought it would be fun to discuss my thoughts on various topics. This is my take and some of my thoughts are definitely “tongue in cheek.” So, enjoy the ride and feel free to share your take in the comments.