I’ve been experimenting on and off with astrophotography using a DIY Raspberry Pi setup. This article is another attempt at getting a better working setup, and it brings together much of my previous experimentation. Let’s quickly walk through those experiments again:

- In astrophotography from a beginners perspective (part-3: achievements) I took my first steps in building a Raspberry Pi 1 based remote capture device using an Innomaker CAM-MIPI462RAW camera sensor. The sensor is low resolution and not of the best build quality, but it’s really cheap and therefore a reasonable starting point for my experiments. It served that purpose well, but manually running commands on a remote device and manually moving pictures over to my host PC is far from the best experience. I also noticed that image quality suffered when the gain was increased. Plus, wireless networking tends to be really tricky on the RPI.

- In the next article I tried to tackle the lack of decent remote control software. In using a raspberry pi and indi for astrophotography I explored the option of running everything through Indi. Indi is a software bundle that supports various astrophotography devices such as cameras, sensors, telescope mounts, filters, etc., and allows the controlling host to automate and control them over a computer network. I was pleased with this upgrade, although stability wasn’t quite there yet back then.

- At this point I had a networked camera sensor that is controllable using Indi. In the next article, exploring imx462 sensor settings in dark scenes, I looked at the image quality I had at that point and how I could improve it (because it did lack in some areas). I found out that the sensor has an HCG (high conversion gain) mode, and together with some other folks in the RPI community this functionality was finally added to the kernel driver. Furthermore, the IMX462 also got its own libcamera tuning, and all of these changes have in the meantime found their way into the default Raspberry Pi OS distro. For now I’m not using the HCG mode for my astro shots, as I prefer longer exposure times over bumping up the gain, but at some point in the future it may become useful again.

- By the end of 2024 I started looking into solving the imx462 banding issue. It appears the Innomaker IMX462 lacks proper analog power supply filtering when combined with a Raspberry Pi, but things can be improved by adding a CJMCU-3042 based LDO in between. I guess this is where it really pays off to buy a quality camera sensor from the beginning.

- A bit later I had a quick look at what an IR filter would do for the IMX462, see sony imx462 ir sensitivity. It doesn’t play a major role in astrophotography, but it’s good to know that the IMX462 has some sensitivity in that range that could be used.

Connectivity issues

That’s where everything was put on hold for a while. I had the tools in place, but never actually got to run the setup outside due to lack of time, the lack of a more waterproof housing, and not having a decent network outside of my house. Whenever I made my setup battery powered for some outdoor tests, I always ran into Wifi connectivity issues. I tried both the RPI’s internal Wifi and an external USB Wifi adapter, but found no decent solution. I even played around with a Bluetooth based solution where an Android app connected to the RPI using BLE. That however came with roughly the same drawbacks, and on top of that BLE was way too slow to copy large RAW image files over to the Android phone. My next idea was to bring the network to my backyard garage using a Wifi client router, and from there run an ethernet cable to the RPI. I also have electricity back there, so I wouldn’t need any batteries. And that actually worked quite well! But now the camera sits at the back of my garden and I’m no longer running it close to where I sit with my laptop…

Housing ideas: the all sky camera

Next step was thinking about an actual housing for my remote camera. I’ve been looking at allsky cameras (and indi-allsky alternatives) for a while to see if they would fit my use case. I would lose the ability to mount a telescope lens adapter, but on the other hand I’d improve the quality of non-zoomed night sky pictures. Allsky cameras come with dedicated software, and the indi-allsky software has a lot of features to offer that EKOS does not have. For example, it can automatically generate keograms, star-trails (using stacking) and videos, and it integrates various sensors that can be used in the software. It’s pretty straightforward to install (example tutorial: https://astroisk.nl/install-indi-allsky-on-a-raspberry-pi-5/). By default it runs on the remote camera device (the RPI), but it seems you can also separate the control software from the actual camera server (similar to how EKOS controls my Indi based camera). Interested? Look here: https://github.com/aaronwmorris/indi-allsky/discussions/1259.

But while an allsky camera has several things to offer, I’m not really interested in any of its features, except maybe the image stacking. So far I haven’t found a decent description of what that does, other than producing star-trails, so I decided to skip setting up the software and for now stick to a plain Indi camera combined with the EKOS control software. But many people have already built a similar camera themselves, so you can easily find building plans or ideas on how to successfully build one yourself.

The basic materials:

- RPI 3
  - the more recent, the better, as more computational power decreases latency significantly

- Camera sensor with lens
  - Allsky cameras typically use a wide angle lens, as you want to capture the entire sky at once. I went for a narrower field of view (55°) so that I could get some extra detail in the areas of interest. The narrow FOV also helps in the stacking process, as half of my house doesn’t get dragged into the image. Furthermore, I removed the IR filter from the lens since the IMX462 has some IR sensitivity, which could be a benefit.

- Some sort of power supply or batteries

- Network connectivity (Wifi, ethernet)

- A plastic dome

- A housing

Extras:

- temperature/humidity/dew reporting : to get an idea of what’s happening temperature and humidity wise inside your remote device, various sensors can be used. I added an HTU21D temperature/humidity sensor and grabbed open-source example software to read out the sensor, but also assembled a script to calculate the dew point and log the CPU temperature. For now it still has to be executed manually, but it’s a starting point that gives me some rough insight, as until now I was totally blind. The source code can be found here: https://github.com/geoffrey-vl/linux-htu21d.

- dew heater : many people try to run their setup unattended throughout the whole year, even in freezing conditions. Dew (and moisture) are among the things you’ll definitely have to deal with in such conditions. The thing with adding a heater is that you need to produce a very specific amount of heat. It’s not just tossing in the biggest resistor you have and running it flat out. You have to calculate the dew point, see how much heat the CPU already dissipates into the housing, and then work out the additional heat needed to keep moisture and dew from building up. Moisture builds up inside the camera housing, so you should try to keep the camera as air-tight as possible. Dew forms on the outside of the housing, on the acrylic dome the camera looks through. The reason you don’t want to add a random amount of heat into the camera housing is that you still want your sensor to be as cold as possible. You also need some controlling software that makes sure the heater isn’t working when there is no need for it, for example during summer nights or throughout most of the day. For now it’s summer here and I’ll try to avoid running the camera in bad weather, so I’m not installing any heater. But it may become a challenge later on.

- overheating : for now EKOS/Indi does not seem to have any kind of generic heat protection mechanism, at least not when you only have a simple camera sensor connected. The CPU should throttle itself through the kernel driver though, and I’ll keep an eye on it using my custom script. What I avoid for now is running the camera outside during the day, as the image sensor is not protected against direct sunlight, nor do I have any active cooling installed. The drawback is that I’ll have to take the setup outside manually for each capturing session, basically like setting up a telescope. But luckily this one is a lot more compact.

- lens control : I have one of those dirt cheap M12 Arducam lenses mounted that you have to turn into focus manually. It’s cheap and compact, but lacks automation features. You can also get lenses with focus control, and you can even toss iris control into the mix. Why is that useful? Well, the indi-allsky software, for example, can automatically generate a darks library. That however requires covering up the camera sensor, which a motor-controlled iris could more or less take care of.
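The dew point, CPU temperature and throttling checks from the list above can be sketched in a few lines of Python. This is not the code from the linked linux-htu21d repository: it's a minimal sketch that assumes you already have the raw 16-bit HTU21D words (the I2C readout itself is left out), uses the conversion formulas from the HTU21D datasheet and a common Magnus approximation for the dew point, and decodes the text printed by the Raspberry Pi’s `vcgencmd get_throttled` command. The `DewHeaterController` at the end is entirely hypothetical: its margins are illustrative, and it assumes a temperature reading of the dome surface, which the HTU21D (measuring the air inside the housing) does not provide.

```python
import math
from dataclasses import dataclass


# --- HTU21D raw-word conversions (formulas from the sensor datasheet) ---
def htu21d_temperature(raw: int) -> float:
    """Convert a raw 16-bit temperature word to degrees Celsius."""
    raw &= 0xFFFC  # the two least significant bits are status bits, not data
    return -46.85 + 175.72 * raw / 65536.0


def htu21d_humidity(raw: int) -> float:
    """Convert a raw 16-bit humidity word to %RH (no temperature compensation)."""
    raw &= 0xFFFC
    return -6.0 + 125.0 * raw / 65536.0


def dew_point(temp_c: float, rh_percent: float) -> float:
    """Dew point in degrees Celsius via the Magnus approximation."""
    if rh_percent <= 0:
        raise ValueError("relative humidity must be positive")
    a, b = 17.62, 243.12  # Magnus coefficients over water
    gamma = math.log(rh_percent / 100.0) + a * temp_c / (b + temp_c)
    return b * gamma / (a - gamma)


def parse_millidegrees(text: str) -> float:
    """Parse the content of /sys/class/thermal/thermal_zone0/temp (millidegrees C)."""
    return int(text.strip()) / 1000.0


# --- Decoding `vcgencmd get_throttled` output, e.g. "throttled=0x50000" ---
THROTTLE_FLAGS = {
    0: "under-voltage detected",
    1: "ARM frequency capped",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred",
    17: "ARM frequency capping has occurred",
    18: "throttling has occurred",
    19: "soft temperature limit has occurred",
}


def decode_throttled(output: str) -> list[str]:
    """Return the descriptions of all flags set in the get_throttled output."""
    value = int(output.strip().split("=", 1)[1], 16)
    return [text for bit, text in sorted(THROTTLE_FLAGS.items()) if value & (1 << bit)]


# --- Hypothetical dew heater logic with hysteresis ---
@dataclass
class DewHeaterController:
    on_margin: float = 3.0   # switch on when the surface is within 3 degC of the dew point
    off_margin: float = 5.0  # switch off again once it is at least 5 degC above it
    heating: bool = False

    def update(self, surface_temp_c: float, dew_point_c: float) -> bool:
        """Feed one measurement and return whether the heater should be on."""
        spread = surface_temp_c - dew_point_c
        if not self.heating and spread <= self.on_margin:
            self.heating = True
        elif self.heating and spread >= self.off_margin:
            self.heating = False
        return self.heating
```

With the housing readings reported later in this article (22.21 °C and 44.32 %), `dew_point` gives roughly 9.48 °C. The gap between the two heater margins is the hysteresis: it keeps the heater from rapidly toggling when the temperature hovers around a single threshold.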

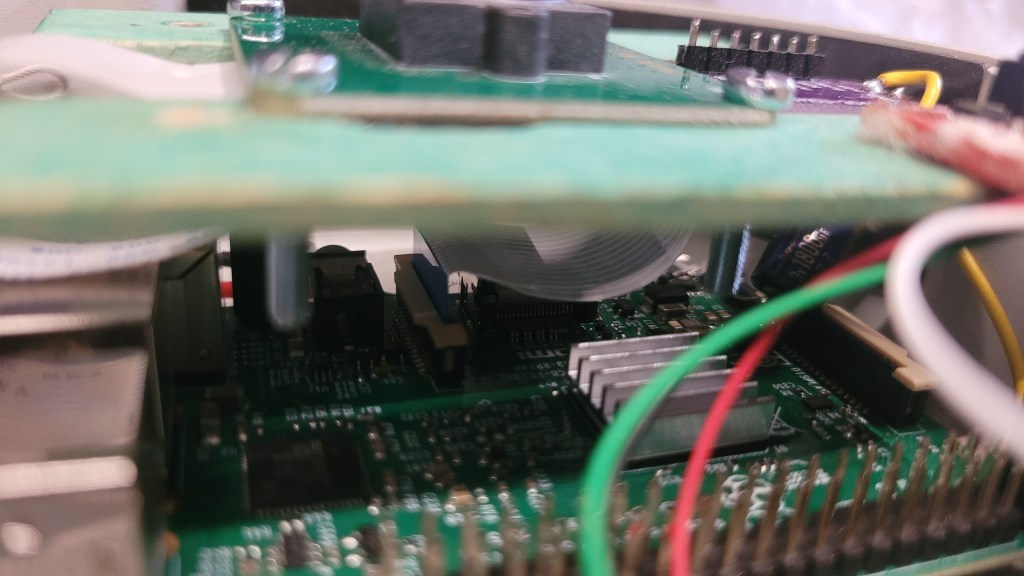

Housing ideas: repurposing an old IP security camera

I recently got to dismantle an old IP camera and found that the Raspberry Pi based camera could actually fit inside. I had a quick look at what makes up the IP camera to learn a thing or two, but found that I could not repurpose much of this decade-old device except for the housing. So I tore everything out and started fitting my parts into it.

So there you have it: the RPI3, an IMX462 sensor, the additional analog power supply based on the CJMCU-3042, and an HTU21D temp/hum sensor. Everything is wired over ethernet to the rest of my home network.

I added a small cooling plate to the Pi’s SOC, just in case. I also placed the HTU21D a bit closer to the actual image sensor.

After some quick tests in the evening I noticed that the images captured through the dome had areas lit in green. It looked as if you were seeing the northern lights, but that clearly isn’t possible in the area where I live (at least not to that extent). It turns out that some of the Pi’s LEDs lit up strongly enough to be reflected by the acrylic dome back into the image sensor. My fix was to add a piece of white painted cardboard that blocks the LEDs’ light from reaching the outer end of the dome.

This already sorted out some of the green light issues, but more tweaking needed to be done. I added some extra cardboard, and the picture quality was finally heading in the right direction. Now only the image sensor and the HTU21D environmental sensor are exposed to the dome.

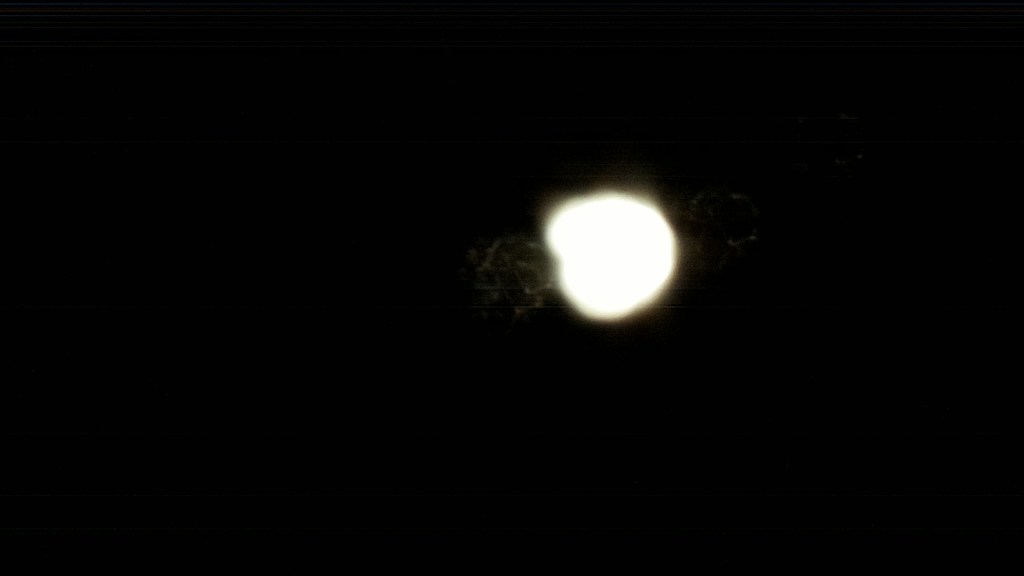

The acrylic dome is not scratch free, but let’s try it anyway and see what comes out. My first shot:

Darkness was still settling, but luckily the moon hadn’t risen yet, so even with an exposure time of 1s we can already spot some stars. Next step is to wait an hour or so for the sky to become darker. Then I’ll try to capture multiple shots and see if we can produce a higher quality picture using stacking. So let’s set up EKOS for this purpose:

I’m aiming for 30 shots at an exposure time of 10s. Here is one of those captures, slightly tweaked in Gimp:

Sweet, and that’s just a single frame! I’m pretty pleased with this output already! And let’s toss in some kudos for the automated process in EKOS. Software stability wasn’t entirely perfect over the last few days, but during this session it was working quite well. Capturing all 30 shots unattended went butter smooth, and I had time to do some other stuff (like editing the above picture) while EKOS was running in the background. Very cool! The entire capture process took about 42 minutes, which is not exactly close to the 30 x 10s exposure time (thus 5 minutes in total) that we configured. The Raspberry Pi 3 isn’t the fastest device around to perform camera captures (we already learned that from previous experiments), but fetching the raw files also takes longer than expected due to the wireless network link somewhere along the way between the RPI and the host PC. During the session I checked the temperatures of my camera, but everything was well within limits:

CPU Temperature: 42.39 °C

Housing Temperature: 22.21 °C

Housing Humidity: 44.32 %

Housing Dew Point: 9.48 °C
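The gap between roughly 42 minutes of wall-clock time and 5 minutes of actual exposure for those 30 frames is simple arithmetic. A small sketch (the function name is my own, and the 42 minutes is only approximate) that spreads the non-exposure time over the frames:

```python
def per_frame_overhead(frames: int, exposure_s: float, total_s: float) -> float:
    """Average seconds per frame spent outside the exposure itself
    (readout, saving, transferring the file to the host, ...)."""
    if frames <= 0:
        raise ValueError("frames must be positive")
    return (total_s - frames * exposure_s) / frames


# First session: 30 frames of 10 s, about 42 minutes in total.
overhead = per_frame_overhead(30, 10, 42 * 60)
print(f"~{overhead:.0f} s of overhead per 10 s frame")
```

In other words, each 10s frame costs about 74s of extra time on top of the exposure, split in some unknown ratio between the RPI capture itself and the network transfer.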

And here is the result of stacking those images using the Sigma Clipping pixel rejection method, and the Image Pattern Alignment registration method… not exactly an improvement…

Again, but this time using Global Star Alignment for registration:

That’s already way better, but it doesn’t really improve on the quality of a single 10s frame. Let’s play around a bit more with Siril (for alignment and stacking) and Gimp (for saturation and level control):

This is actually starting to look real nice! In the 10s exposures I had already noticed some brighter and darker areas, but I doubted that this would be the Milky Way, as it’s generally impossible to see it with the naked eye. So clouds maybe… But now, when I examine all the collected 10s frames, that band seems to rotate together with the rest of the stars, while clouds tend to slide across in random directions. So that’s indeed the Milky Way right there!
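The Sigma Clipping rejection used for these stacks works roughly like this: for every pixel, values that lie too far from the stack’s center (measured in standard deviations) are thrown out before averaging, so a satellite, plane or hot pixel in one frame doesn’t leak into the result. The sketch below is a generic, median-centered illustration in plain Python, not Siril’s actual implementation. It assumes the frames are already registered and flattened into equal-length lists of pixel values; the function names and the 3-sigma defaults are my own choices.

```python
import statistics


def sigma_clip_mean(values, sigma_low=3.0, sigma_high=3.0, max_iter=5):
    """Average one pixel's values across frames after iterative sigma clipping."""
    kept = list(values)
    for _ in range(max_iter):
        if len(kept) < 3:
            break  # too few samples left to estimate a meaningful spread
        center = statistics.median(kept)
        spread = statistics.pstdev(kept)
        if spread == 0:
            break  # all values identical, nothing to reject
        low = center - sigma_low * spread
        high = center + sigma_high * spread
        filtered = [v for v in kept if low <= v <= high]
        if len(filtered) == len(kept):
            break  # converged: no more outliers
        kept = filtered
    return statistics.fmean(kept)


def stack_sigma_clip(frames, **kwargs):
    """Stack registered frames (equal-length flat pixel lists) pixel by pixel."""
    return [sigma_clip_mean(pixel_values, **kwargs) for pixel_values in zip(*frames)]


# Ten frames of a two-pixel image; one frame has a satellite streak on pixel 0.
frames = [[10, 20]] * 9 + [[200, 20]]
print(stack_sigma_clip(frames))
```

The outlier of 200 is rejected and both pixels come out at their undisturbed values, whereas a plain average would have pushed pixel 0 up to 29.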

Next I wanted to push even further by bumping up the exposure time and collecting more frames. The moon still hadn’t risen and skies were clear; by some luck this was actually a good night for astrophotography. About 15s is typically the maximum exposure you can set before losing sharpness due to Earth’s rotation: stars start to leave trails instead of being sharp dots. A longer exposure does however significantly increase the time the RPI needs to collect a single frame, so when I push the total number of frames I also want to make sure I finish before the sun starts to rise again. So let’s go for 50 frames in total, which in my case resulted in a total capture time of about 1h30min. The good thing is that EKOS does all the work for me: I could just go to bed and wake up the next morning with all the data waiting for further processing.
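Whether 15s is short enough depends on the lens and sensor. Here is a rough estimate under assumptions that are mine rather than measured: the 55° field of view spans the 1920-pixel width of the IMX462, the plate scale is uniform across the frame (lens distortion ignored), and stars move at the sidereal rate of about 15.04 arcseconds per second, scaled by the cosine of their declination. The function and constant names are hypothetical.

```python
import math

SIDEREAL_DAY_S = 86164.0905
SIDEREAL_RATE_ARCSEC_S = 360 * 3600 / SIDEREAL_DAY_S  # ~15.04 arcsec per second


def drift_pixels(exposure_s: float, fov_deg: float, pixels_across: int,
                 declination_deg: float = 0.0) -> float:
    """Approximate star drift in pixels during one exposure with a fixed camera."""
    plate_scale = fov_deg * 3600 / pixels_across  # arcsec per pixel, uniform approximation
    drift_arcsec = SIDEREAL_RATE_ARCSEC_S * exposure_s * math.cos(math.radians(declination_deg))
    return drift_arcsec / plate_scale


for exposure in (10, 15):
    print(exposure, "s ->", round(drift_pixels(exposure, 55, 1920), 2), "px at the equator")
```

Under these assumptions a star on the celestial equator drifts about 1.5 pixels in 10s and about 2.2 pixels in 15s, while stars closer to the celestial pole drift less. With a low-resolution sensor and a fairly wide lens, that small drift is what keeps 15s usable.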

So here is a single 15s exposure shot:

The detail is noticeably better than in the 10s exposure shot. It also includes the Milky Way, but you can see that it has rotated quite a bit compared to the pictures from the previous session. (Well, actually it’s the Earth that rotated…) So let’s again do some stacking and image editing:

The registration process unfortunately only accepted 21 of the 50 pictures, so we’re not at full potential here. But compared to the single 15s shot we can easily spot a lot more detail. And… is that a galaxy right there on the left side? Let’s zoom in a bit more. Unfortunately the IMX462 is just a low resolution camera, so we’re really limited in digital zoom. This is where we run into the limits of this low-end camera, but that’s something I knew from the very beginning when I selected it. Here is that detailed view:

It indeed looks very much like a galaxy, but which one? Andromeda (which is the easiest one to spot)? Let’s annotate the picture so that we have an idea which constellations we’re looking at… Annotation can be done quite easily using the free https://nova.astrometry.net service, which only requires you to upload your picture and wait for the processing to finish. No account or login needed. Very neat! So this is what came out:

And as I already suspected, that’s the Andromeda galaxy right there, next to the Andromeda constellation! Let’s double check by comparing our image to what Stellarium shows when set to the same moment in time:

Note that the camera output is mirrored compared to the Stellarium output. But when we look at the details, the Andromeda galaxy is indeed right where it should be. Super! We also see the Milky Way spread across the field of view just like our camera captured it.

Let’s try another trick. Given I have imaging data spanning about 90 minutes, I should also be able to build up a stacked image that visualizes Earth’s rotation through star-trails. After diving into Siril again, it turns out the software also has a Maximum Pixel Stacking method, which suits the purpose of generating star-trails. So here is what came out:

Again, the output is far beyond what I anticipated when I started my capturing session. Star-trails are easily visible and show a rotation around the bottom of the frame. Unfortunately each star-trail is a dotted line rather than one continuous line. This is probably caused by the time it takes the Raspberry Pi to take a single frame: the RPI needs considerably more time to capture the image and copy it back to the host system than the exposure time it is set to, so the sky keeps rotating between exposures. The end result could probably be better if we decreased the exposure time, as the RPI needs a lot less time to produce a 3s exposure shot than a 15s exposure shot. The drawback is that we would probably lose a decent number of the stars we can track, due to the lower sensitivity.
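Two small sketches related to this star-trail stack. First, the maximum-pixel idea itself: keep the brightest value seen at each pixel across all frames. The frames are deliberately not registered here, because with a fixed camera a star has to move across pixels to form a trail. Second, a rough way to see why the trails are dotted: each frame only records the stretch of sky rotation that happens during its exposure, and the rest of each capture cycle becomes a gap. Both are plain-Python illustrations with hypothetical function names, not Siril’s implementation, and the 90-minute total is approximate.

```python
def stack_max(frames):
    """Maximum-pixel stack of equally sized 2D frames (lists of rows)."""
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [max(frame[y][x] for frame in frames) for x in range(width)]
        for y in range(height)
    ]


def trail_duty_cycle(frames: int, exposure_s: float, total_s: float):
    """Return (capture cycle in seconds, fraction of each cycle spent exposing)."""
    cycle_s = total_s / frames
    return cycle_s, exposure_s / cycle_s


# A single bright "star" moving one pixel to the right in each frame.
toy_frames = [[[0, 9, 0, 0]], [[0, 0, 9, 0]], [[0, 0, 0, 9]]]
print(stack_max(toy_frames))  # the three positions merge into one trail

# Second session: 50 frames of 15 s in roughly 90 minutes.
cycle, fraction = trail_duty_cycle(50, 15, 90 * 60)
print(f"one frame every {cycle:.0f} s, exposing {fraction:.0%} of the time")
```

So only about a seventh of each star’s path ends up in the stack, which matches the dotted look. An average stack of the toy frames would instead dim every star position to a third of its brightness, which is why averaging isn’t suited for star-trails.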

GCAM tricks

Google’s GCam (or Pixel camera app) for Android phones is also able to capture some pretty darn good night sky images using image sensors not targeted at astrophotography at all. I’ve always been intrigued by how they succeeded in bringing this to mobile devices, and whether I would be able to get similar results with my cheap DIY solution, or at least use some of their tricks. It seems that the astrophotography mode in that app is a combination of a whole lot of tricks and technology, and not a one-man job. A whole team of experts has been working on this mode, and the result is truly astonishing!

So what would we need to get at least somewhere in the right direction with Open Source software?

- camera device

- software that can take multiple shots with the same long exposure. The Google team claims to use exposures of up to 16s, as this should avoid capturing Earth’s rotation

- image processing software for stacking purposes

- Google also has ways to detect which part of the picture is sky and which isn’t, and uses that info in the stacking process. Foreground houses and trees, for example, should not be stacked. I don’t plan to pursue this machine learning approach due to its complexity and the lack of processing power.

- darks library. I’m not sure Google uses a darks library, but it’s definitely one of the best-known tricks in astrophotography to enhance image quality. I’ve been playing with it for a bit, but it’s tricky to get right. Here are some tips: https://www.cloudynights.com/topic/750233-create-a-library-of-dark-frames/ and https://stargazerslounge.com/topic/389924-building-a-dark-library-generation/. In the end I ditched the idea of using darks and just went with whatever came out.
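The basic dark-frame idea is easy to sketch, even though getting it right in practice is the tricky part: combine several dark frames (taken with the lens covered, at the same exposure, gain and roughly the same temperature as the light frames) into a master dark using a per-pixel median, then subtract it from each light frame. This plain-Python version works on flattened pixel lists and uses hypothetical function names; real tools such as Siril take care of much more, like file formats and further calibration steps.

```python
import statistics


def master_dark(darks):
    """Per-pixel median of dark frames (equal-length flat lists of pixel values)."""
    return [statistics.median(values) for values in zip(*darks)]


def subtract_dark(light, dark):
    """Subtract a master dark from a light frame, clipping negative results to 0."""
    return [max(light_px - dark_px, 0) for light_px, dark_px in zip(light, dark)]


darks = [[1, 2, 50], [1, 3, 50], [2, 2, 50]]  # the third pixel is a hot pixel
print(subtract_dark([10, 12, 40], master_dark(darks)))
```

The median keeps a single odd dark frame from skewing the master dark, and the hot third pixel, which shows up in every dark, is removed from the light frame.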

And actually, when I now look back at some of my results, I can indeed see how the Gcam app is able to reach such extraordinary results. Even with my limited knowledge, a cheap camera system and a pair of (good) open-source tools, I also managed to produce some really nice looking images. The key difference is of course that Google throws in some extra ML sauce so that foreground objects are ignored in the stacking process, whereas in my case things in the foreground tend to get blurred. Also, the computational power of current generation Pixel smartphones goes far beyond what the RPI3 has to offer, so everything works a lot quicker on those devices, and all of the processing happens on the local device, so there is no network latency involved. They also make everything work without any hassle, while for me it takes a bit of time to set up the capturing session (using EKOS this is reduced to less than a minute though), and considerably more time is spent on image stacking and image quality tweaking.

More automation

EKOS has some simple automation, such as the one I demonstrated, where you can schedule the capture of a batch of pictures. Very handy, as you can define the shutter time, gain, etc. for a bunch of pictures that EKOS needs to capture. EKOS will automatically transfer the files to your host PC if needed, and show a preview of each new capture. You can select the output format, but this will mostly be DNG/RAW.

It does however have its limitations. It can’t do stacking and other image processing tricks; for that I rely on Siril and Gimp. EKOS also seems to lack some automation features: there’s no clear way of commanding EKOS to do tasks from a higher level application. Say we have our own application that wants to capture and stack 6 images: it would command EKOS to capture them, and then load them into a stacking program like Siril. Well, it turns out someone has been exploring the DBUS methods of EKOS, see https://openastronomy.substack.com/p/automating-kstars-and-ekos-pt-2, so that does open up options for further automation. Siril is also able to automatically perform stacking by watching a directory. For now I’ll leave that territory alone, as I’m not generating tons of data just yet, and I still feel like I need to explore the software manually before I can start thinking of automating things.
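Short of DBUS scripting or Siril’s own directory watching, a generic polling loop is one way to hook a custom step onto new captures as they land in a folder. This is a hypothetical sketch: `watch`, `pick_new` and the `handle` callback are my own names, the extension list is an assumption about what the capture folder contains, and it does not check whether a file is still being written.

```python
import os
import time

CAPTURE_EXTENSIONS = (".fits", ".fit", ".dng")  # assumption: adjust to your capture format


def pick_new(names, seen, extensions=CAPTURE_EXTENSIONS):
    """Return the sorted capture files in `names` that are not in `seen`, and remember them."""
    found = sorted(n for n in names if n.lower().endswith(extensions) and n not in seen)
    seen.update(found)
    return found


def watch(directory, handle, interval_s=5.0):
    """Poll `directory` forever and call `handle(path)` once per new capture file.

    Files already present on the first poll are handled as well.
    """
    seen = set()
    while True:
        for name in pick_new(os.listdir(directory), seen):
            handle(os.path.join(directory, name))
        time.sleep(interval_s)
```

Calling something like `watch("/path/to/captures", print)` (a placeholder path) would simply print each new file; a real handler could queue it for stacking. A more robust version would wait until a file’s size stops changing before handing it off.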

Concluding thoughts

Coming to the end of this article, I’m glad that I finally got something positive in return for the time I’ve spent investigating things in previous articles. The cheap IMX462 sensor combined with an RPI didn’t appear to result in high quality images when I first started looking into a DIY astro setup more than a year ago. But now I see that it really is possible, though I did have to tackle some things before I got to this point. I don’t have a clue yet what my next goal may be… I think I’ll first take some time capturing night skies before I start exploring new territories.